Last Updated on 09 May 2026

Recruitment Fraud Detection: How to Keep Your ATS Clean Without Black Box AI

Introduction

The most dangerous problem in recruitment technology is not always a missing feature.

Sometimes it is bad data entering a trusted system.

An ATS is only useful if recruiters trust what is inside it. When fake applicants, AI-generated resumes, duplicate identities, bots, and auto-apply submissions enter the system, the ATS becomes harder to use. Recruiters spend more time reviewing noise. Hiring managers lose confidence in the pipeline. Product teams add more screening steps. Candidates face more friction.

The obvious response is to add more AI screening. But that can create a new problem. If the system starts ranking, rejecting, or judging candidates without a clear explanation, hiring teams inherit both compliance risk and candidate trust risk.

The better path is fraud detection with explainable signals.

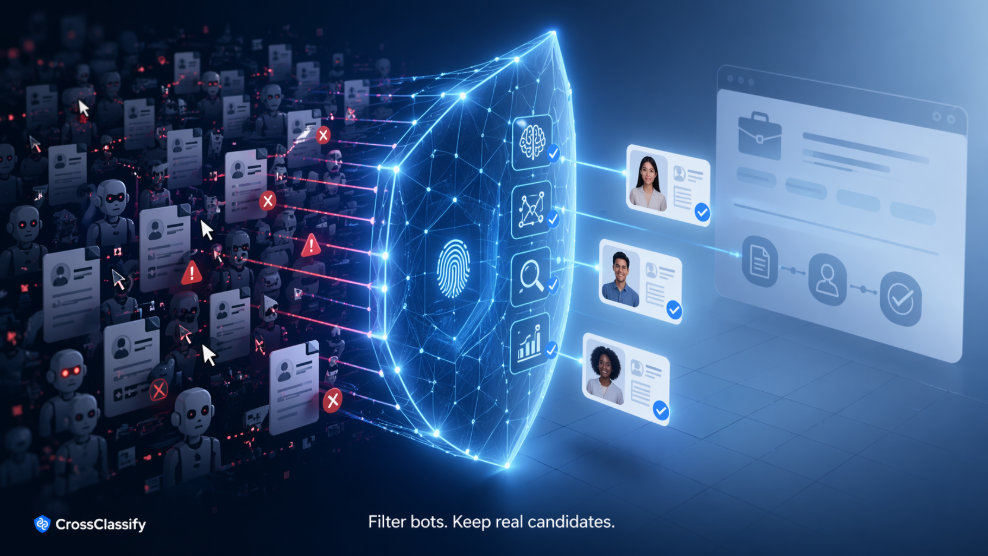

CrossClassify helps recruitment platforms and hiring teams protect ATS hygiene by flagging suspicious activity, detecting fake applicants, identifying automation patterns, and supporting human review.

ATS hygiene is a trust problem

ATS hygiene means the candidate database is clean enough to support good hiring operations.

It does not mean every candidate is perfect. It means recruiters can trust that application records are not flooded with obvious abuse, duplicate fake identities, scripted submissions, or suspicious account clusters.

Poor ATS hygiene creates hidden costs. Recruiters review the wrong applications. Good candidates wait longer. Hiring managers receive weaker shortlists. Platform teams struggle to separate real growth from spam volume.

CrossClassify’s recruitment fraud solution helps protect this trust layer by adding fraud context around accounts, applications, and sessions. The goal is not to make hiring decisions. The goal is to help humans understand which submissions deserve closer review.

Why black box AI is risky in recruitment

Recruitment is sensitive because applicants are people, not transactions.

A black box model that ranks candidates or decides who moves forward can create legal, ethical, and trust concerns. Even when the intention is efficiency, the result can feel opaque to candidates and risky to employers.

Fraud detection should be different. It should explain suspicious activity without judging candidate merit.

For example, a system can say that one applicant account shares a device fingerprint with many other accounts. It can say that a session used a suspicious browser environment. It can say that application velocity is unusual. These are fraud and abuse signals. They are not statements about talent, skill, or job fit.
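
As a minimal sketch of how signals like these could be expressed as reason codes rather than merit scores, the Python below checks two of the examples above: device sharing and application velocity. The `Application` record, the `reason_codes` function, the code strings, and both thresholds are hypothetical illustrations, not CrossClassify's API or recommended values.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Application:
    account_id: str
    device_fingerprint: str
    submitted_at: float  # Unix timestamp in seconds


def reason_codes(apps, account_id, max_accounts_per_device=3, max_apps_per_hour=20):
    """Return human-readable fraud reasons for one account (illustrative thresholds)."""
    # Map each device fingerprint to the set of accounts seen on it.
    accounts_by_device = defaultdict(set)
    for app in apps:
        accounts_by_device[app.device_fingerprint].add(app.account_id)

    own = [app for app in apps if app.account_id == account_id]
    reasons = []

    # Device signal: the account's device is shared with many other accounts.
    shared = max((len(accounts_by_device[a.device_fingerprint]) for a in own), default=0)
    if shared > max_accounts_per_device:
        reasons.append(f"DEVICE_SHARED: fingerprint seen on {shared} accounts")

    # Velocity signal: peak number of submissions inside any one-hour window.
    times = sorted(a.submitted_at for a in own)
    peak, start = 0, 0
    for end, t in enumerate(times):
        while t - times[start] > 3600:
            start += 1
        peak = max(peak, end - start + 1)
    if peak > max_apps_per_hour:
        reasons.append(f"HIGH_VELOCITY: {peak} applications within one hour")

    return reasons
```

Nothing in this function reads the resume or the job description. The output describes how the account behaved, which is what keeps it on the fraud side of the line rather than the candidate-merit side.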

CrossClassify keeps this separation clear. It supports human review by explaining suspicious patterns, while recruiters and hiring teams remain responsible for hiring decisions.

The signal families that keep ATS data clean

Clean ATS data requires more than resume parsing.

Behavioral signals show how the applicant interacts with the platform. Device signals show whether many identities are connected to the same environment. Browser signals show whether the session looks manipulated. Network signals show proxy use, region mismatch, or unusual routing. Velocity signals show abnormal application volume. Graph signals show relationships across accounts, jobs, sessions, and devices.
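
To make the graph signal concrete, here is a small sketch that groups accounts into clusters when they share an attribute such as a device fingerprint or an IP address. It uses a standard union-find structure. The `account_clusters` function and the `"device:..."` and `"ip:..."` attribute labels are assumptions made for illustration, not a description of how CrossClassify builds its graph.

```python
from collections import defaultdict


def account_clusters(links):
    """Group accounts that share any attribute.

    links: iterable of (account_id, attribute) pairs,
           e.g. ("acct-1", "device:abc") or ("acct-1", "ip:203.0.113.7").
    Returns a list of sorted account lists, largest cluster first.
    """
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    # Link every account to the first account seen with the same attribute.
    first_account_for = {}
    for account, attribute in links:
        find(account)  # register the account even if it links to nobody
        if attribute in first_account_for:
            union(first_account_for[attribute], account)
        else:
            first_account_for[attribute] = account

    clusters = defaultdict(set)
    for account in list(parent):
        clusters[find(account)].add(account)
    return sorted((sorted(c) for c in clusters.values()), key=lambda c: (-len(c), c))
```

Two accounts that never share a device can still land in the same cluster through a third account, which is the kind of relationship a single-record check would miss.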

CrossClassify’s behavioral biometrics solution brings these signals together so suspicious patterns can be detected passively. This helps recruitment teams reduce noise without putting every applicant through more friction.

Where recruitment fraud detection fits in the ATS workflow

The best place to detect recruitment fraud is before it becomes recruiter workload.

At candidate signup, CrossClassify can detect suspicious account creation patterns. At application submission, it can identify automation, device reuse, proxy mismatch, and unusual velocity. During repeat activity, it can connect related accounts through graph analysis. In review queues, it can show reason codes to recruiters.

This gives ATS providers and recruitment platforms a practical integration path.

First, add signal collection to candidate account creation. Second, score application submissions. Third, display explainable fraud indicators inside the recruiter workflow. Fourth, measure impact on review workload, fake applications, and recruiter trust.
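
The routing and measurement steps above could look roughly like the sketch below. It assumes reason codes have already been produced upstream. The queue names, the `route_submission` and `flag_rate` functions, and the dictionary shape are hypothetical and would depend on the ATS being integrated.

```python
def route_submission(application_id, reasons):
    """Route a scored submission. Flagged items go to human review; nothing is auto-rejected."""
    return {
        "application_id": application_id,
        "queue": "fraud_review" if reasons else "standard",
        "reasons": list(reasons),  # shown to the recruiter as the explanation
    }


def flag_rate(routed):
    """Share of submissions sent to fraud review, a simple review-workload metric."""
    if not routed:
        return 0.0
    flagged = sum(1 for item in routed if item["queue"] == "fraud_review")
    return flagged / len(routed)
```

Tracking a number like `flag_rate` over time is one simple way to see whether added signals are reducing recruiter workload or just moving it into a different queue.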

CrossClassify’s bot attack protection is relevant here because many recruitment abuse patterns are automation problems disguised as applicant volume.

Human review is the product advantage

The strongest product message is not “let AI screen candidates.”

It is “give recruiters better fraud context.”

Human review solves two problems at once. It keeps the organization away from risky automated rejection logic. It also gives recruiters a practical way to focus attention on suspicious submissions.

A candidate can still be reviewed. A recruiter can still override a signal. A platform can still audit why something was flagged. This is exactly the kind of explainability recruitment teams need.
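
One way to support both override and audit is to keep an append-only log next to each flag. The sketch below is an assumption about how a platform might model this. `FraudFlag`, its fields, and the action names are hypothetical, not part of any CrossClassify interface.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class FraudFlag:
    application_id: str
    reasons: tuple
    audit_log: list = field(default_factory=list)
    overridden: bool = False

    def __post_init__(self):
        # Record why the flag was raised at the moment it is created.
        self._record("system", "flagged", "; ".join(self.reasons))

    def _record(self, actor, action, note):
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "note": note,
        })

    def override(self, recruiter_id, note):
        """A recruiter dismisses the flag; the original reasons stay in the log."""
        self.overridden = True
        self._record(recruiter_id, "overridden", note)
```

A short usage example:

```python
flag = FraudFlag("app-7", ("DEVICE_SHARED",))
flag.override("recruiter-42", "Identity confirmed by phone")
for entry in flag.audit_log:
    print(entry["actor"], entry["action"], entry["note"])
```

Because entries are only ever appended, an auditor can see both the original reason and the human decision that followed it.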

CrossClassify’s homepage frames the platform around real-time fraud detection for apps. In recruitment, that same real-time approach becomes ATS protection, candidate account trust, and application abuse detection.

Conclusion

Recruitment fraud detection should not become another black box in the hiring stack.

The safer and more useful approach is to protect ATS data quality with explainable fraud signals. CrossClassify helps recruitment platforms, staffing firms, ATS providers, and hiring teams detect suspicious activity, support human review, and keep applicant pipelines cleaner without deciding who gets hired.
