Last Updated on 04 May 2026

How Glassdoor Fake Review Detection Can Protect Employer Trust


Introduction

People search Glassdoor because they want a clearer view of a workplace before they apply, interview, or accept an offer. They want to know whether employees feel respected, whether managers communicate clearly, whether salaries are fair, and whether the public employer brand matches the private employee experience.

That trust depends on one simple belief. The review came from someone with a real connection to the company.

Fake Glassdoor reviews damage that belief. A fake positive review can make a weak employer look stronger than it is. A fake negative review can damage a company unfairly. A coordinated review campaign can distort scores, confuse candidates, and force moderation teams into endless manual investigation.

This is why searches such as "glassdoor fake reviews", "fake reviews on glassdoor", "glassdoor reviews fake", "how to tell if Glassdoor reviews are fake", "delete fake Glassdoor reviews", "remove fake Glassdoor reviews", and "erase fake Glassdoor reviews service" exist. The search behavior itself shows that employers, candidates, and review readers are worried about review authenticity.

Glassdoor publicly says it uses technology, algorithms, and human moderation to validate reviews and detect possible fraud. It also says every review goes through a moderation process before it is approved for publication. That confirms the platform already treats review integrity as a serious trust layer, not as a minor content quality issue.

For recruitment platforms, the next question is not whether fake reviews should be removed. The better question is how to detect review manipulation before it shapes user trust, while still protecting honest employee voices.

Fake review detection is not only a text problem

The first mistake many teams make is treating fake review detection as a writing analysis problem.

The text matters, but it is rarely enough.

A fake review can be polished. It can include believable details. It can mention a real department, a real manager style, a normal salary range, or a plausible complaint. AI writing tools make this even easier because a fraudster can generate many versions of a review that sound human.

The FTC fake review rule addresses reviews attributed to someone who does not exist, reviews from people without real experience of what they are reviewing, and reviews that misrepresent the reviewer's actual experience. The rule also gives regulators stronger power to act against knowing violations.

That legal context matters for review platforms because fake reviews are no longer just a moderation nuisance. They are a trust, compliance, and business risk.

A review may look normal in isolation. The stronger fraud signals often sit around the review.

- Was the account created recently?

- Was the review pasted instantly?

- Did the same device submit several reviews for different companies?

- Did the account arrive through a suspicious traffic source?

- Did the device environment look manipulated?

- Did a cluster of accounts submit reviews with similar timing?

- Did the rating pattern shift suddenly for one employer?

- Did the same account behavior appear across multiple employer pages?
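Several of the questions above can be expressed as simple checks on metadata captured around a submission. The following is a minimal sketch, not CrossClassify's or Glassdoor's implementation: the `ReviewContext` fields, the flag names, and every threshold are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ReviewContext:
    """Hypothetical metadata captured around one review submission."""
    account_created_at: datetime
    submitted_at: datetime
    seconds_on_form: float             # time between opening the form and submitting
    review_length: int                 # characters in the review text
    employers_reviewed_by_device: int  # distinct employers reviewed from this device


def context_flags(ctx: ReviewContext) -> list[str]:
    """Return the names of the context checks this submission trips."""
    flags = []
    # Assumed threshold: account is less than one day old at submission.
    if ctx.submitted_at - ctx.account_created_at < timedelta(days=1):
        flags.append("new_account")
    # Assumed threshold: more than 10 characters per second suggests a paste.
    if ctx.seconds_on_form > 0 and ctx.review_length / ctx.seconds_on_form > 10:
        flags.append("likely_pasted")
    # Assumed threshold: one device has reviewed three or more employers.
    if ctx.employers_reviewed_by_device >= 3:
        flags.append("device_reused_across_employers")
    return flags
```

A single flag is weak evidence on its own, since honest users also create new accounts and paste text they drafted elsewhere. The flags become useful when several appear together.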

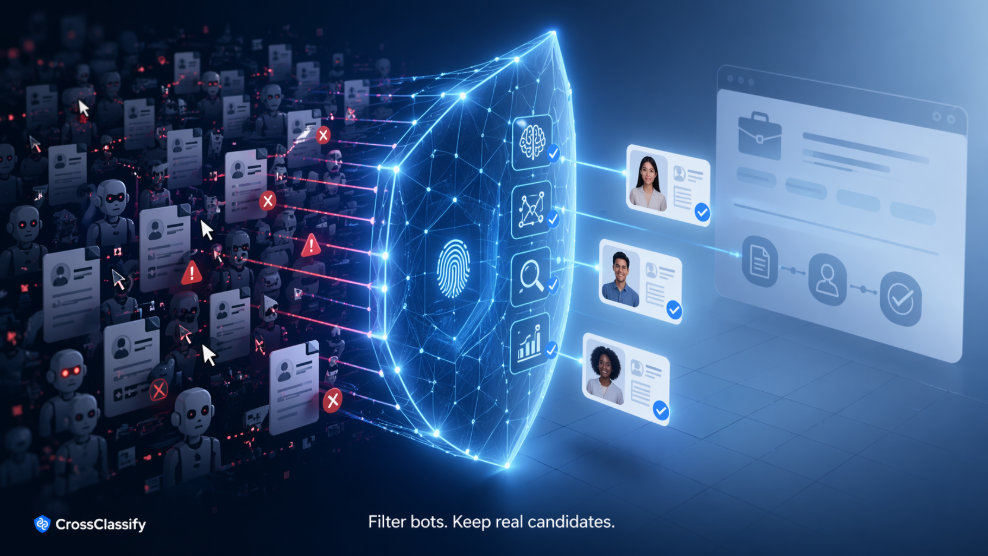

This is where CrossClassify fits naturally.

Review platforms need signals that explain risk without forcing every contributor through a hard identity check. CrossClassify’s recruitment solution shows how behavioral analysis and device fingerprinting can support authenticity across hiring workflows. That same idea can help platforms protect review contribution without making honest users feel punished.

Why fake Glassdoor reviews are difficult to handle fairly

Fake reviews create a difficult moderation challenge because the platform must protect two things at once.

First, it must protect employers from manipulation.

Second, it must protect employees from retaliation, intimidation, and unfair removal of legitimate criticism.

That balance is delicate. If a review platform removes too much content, users may believe employers are controlling the narrative. If it removes too little suspicious content, employers may believe the platform allows reputation abuse.

Glassdoor also says review content shared on the platform remains anonymous and that it enforces guidelines so users feel confident sharing their workplace experiences.

That means the detection layer must be privacy-aware. It cannot simply turn every review into an identity verification event. It must separate reviewer anonymity from abuse anonymity.

Those are not the same thing.

A real employee should be able to share feedback safely. A coordinated fraud ring should not be able to create dozens of fake accounts, manipulate scores, and hide behind the same anonymity promise.

CrossClassify’s Review Integrity Intelligence module helps solve this by looking at behavioral, device, session, and graph signals around review activity. It does not need to expose the reviewer to an employer. It can score whether the activity looks authentic, suspicious, repeated, coordinated, automated, or manipulated.

The signals that matter most in fake review detection

A serious fake review detection system should look at several layers at the same time.

Account trust is the first layer. A new account is not always suspicious, but a new account that immediately posts a strong review, shares signals with other accounts, or appears during a rating spike deserves more attention.

Device trust is the second layer. A fraudster can create new emails, but often the device, browser, emulator, proxy pattern, or technical environment shows reuse. CrossClassify’s device fingerprinting solution explains how device intelligence can reveal suspicious devices and repeated abuse patterns. This matters because fake review operators often rely on many accounts that still connect back to common technical infrastructure.

Behavioral trust is the third layer. Real users navigate, pause, read, scroll, type, edit, and hesitate. Automated or farmed review activity often has a different rhythm. CrossClassify’s behavioral biometrics solution focuses on how people interact with an application, which gives review platforms a passive way to detect suspicious activity without adding friction for normal users.

Graph trust is the fourth layer. The platform should not only ask whether one review is fake. It should ask whether this review belongs to a suspicious cluster. CrossClassify’s Employer Reputation Abuse Graph connects reviewer accounts, employer pages, devices, session timing, review velocity, rating changes, and behavioral patterns. That helps trust teams detect campaigns, not only isolated reviews.
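One way to picture the cluster question is to treat accounts as nodes and shared devices as links, then look for connected groups. The sketch below uses a union-find structure for that. It is only an illustration under assumed inputs (a list of `(account_id, device_id)` pairs) and says nothing about how the Employer Reputation Abuse Graph is actually built.

```python
from collections import defaultdict


def account_clusters(sightings: list[tuple[str, str]]) -> list[set[str]]:
    """Group accounts linked, directly or transitively, by a shared device.

    `sightings` is a list of hypothetical (account_id, device_id) pairs.
    Only clusters containing more than one account are returned.
    """
    parent: dict[str, str] = {}

    def find(x: str) -> str:
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        parent[find(a)] = find(b)

    first_account_on_device: dict[str, str] = {}
    for account, device in sightings:
        find(account)  # register the account even if it never links to another
        if device in first_account_on_device:
            union(account, first_account_on_device[device])
        else:
            first_account_on_device[device] = account

    groups: dict[str, set[str]] = defaultdict(set)
    for account in list(parent):
        groups[find(account)].add(account)
    clusters = [members for members in groups.values() if len(members) > 1]
    return sorted(clusters, key=lambda members: sorted(members))
```

For example, if account `a` and `b` share one device and `b` and `c` share another, all three land in the same cluster even though `a` and `c` never used the same device. A production graph would add more edge types, such as session timing or employer pages, but the grouping idea is the same.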

Content trust is the final layer. The review text still matters. But it should support the risk decision rather than carry the entire burden.
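The idea that content should support the decision rather than carry it can be sketched as a weighted combination of per-layer scores. The layer names follow the five layers described above. The weights and the 0 to 1 score scale are assumptions for illustration, not values published by CrossClassify.

```python
# Hypothetical weights: content deliberately gets the smallest share.
LAYER_WEIGHTS = {
    "account": 0.20,
    "device": 0.25,
    "behavior": 0.20,
    "graph": 0.25,
    "content": 0.10,
}


def combined_risk(layer_scores: dict[str, float]) -> float:
    """Weighted average of per-layer risk scores, each clamped to [0, 1].

    Layers missing from `layer_scores` are skipped and the remaining
    weights are renormalised. Returns 0.0 when no known layer is present.
    """
    weighted_sum = 0.0
    total_weight = 0.0
    for layer, weight in LAYER_WEIGHTS.items():
        if layer in layer_scores:
            score = min(1.0, max(0.0, layer_scores[layer]))
            weighted_sum += weight * score
            total_weight += weight
    return weighted_sum / total_weight if total_weight else 0.0
```

With these weights, a review whose text looks maximally suspicious but whose account, device, behavior, and graph signals are clean scores about 0.1, so text alone cannot push a review into a high-risk band.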

Why "remove fake Glassdoor reviews" is the wrong first question

Many employers search for phrases like "remove fake Glassdoor reviews", "delete fake Glassdoor reviews", "erase fake Glassdoor reviews", and "delete fake Glassdoor reviews service". That language is understandable. If a company believes it has been targeted by fake reviews, the immediate desire is removal.

But from a platform perspective, the stronger goal is earlier detection.

Removal is reactive. Detection is preventive.

If a fake review is already published, the damage may have started. Candidates may have seen it. Screenshots may exist. Employer trust may already be affected. The moderation team now has to investigate a disputed review under pressure.

A better system scores risk before publication, or at least before suspicious clusters become visible enough to shape employer reputation.

CrossClassify can support this with real-time risk scoring at review submission. A low-risk review can continue normally. A medium-risk review can enter deeper moderation. A high-risk cluster can be escalated for investigation. A repeated fraud pattern can trigger account restrictions, device blocks, or additional checks.
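The tiered handling described here amounts to a small routing function. The sketch below assumes a risk score between 0 and 1 and two cut-off values. Both thresholds, the `Action` names, and the `repeat_offender` flag are hypothetical, and a real platform would tune them against its own moderation data.

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "continue_normal_flow"
    MODERATE = "deeper_moderation"
    ESCALATE = "escalate_for_investigation"
    RESTRICT = "restrict_account_or_device"


def route_review(
    risk_score: float,
    repeat_offender: bool = False,
    medium_threshold: float = 0.4,  # assumed cut-off, not a published value
    high_threshold: float = 0.75,   # assumed cut-off, not a published value
) -> Action:
    """Map a risk score in [0, 1] to a moderation action."""
    if repeat_offender:
        # A repeated fraud pattern overrides the score-based tiers.
        return Action.RESTRICT
    if risk_score >= high_threshold:
        return Action.ESCALATE
    if risk_score >= medium_threshold:
        return Action.MODERATE
    return Action.CONTINUE
```

Keeping the thresholds as parameters lets a trust team tighten or loosen the tiers without touching the routing logic.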

The goal is not to make review contribution harder. The goal is to make manipulation harder.

How Review Integrity Intelligence works in a Glassdoor-style platform

Imagine a user signs in to submit a review. The CrossClassify JavaScript SDK observes device, session, and behavior signals in the background. The user writes the review as usual.

At submission, the platform sends a risk event to CrossClassify. The event includes account age, review action, device profile, session behavior, traffic context, employer page, timing, and known trust signals. CrossClassify returns a risk score and reason codes.

Those reason codes matter.

A moderator does not only see a "suspicious review" label. They see why. The account may share a device with several recent reviewers. The browser may look manipulated. The review may be part of a sudden rating burst. The typing flow may indicate pasted, mass-produced content. The account may be linked to prior rejected reviews.

This changes moderation from guesswork to evidence-based triage.
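To make the event and reason-code flow concrete, here is a minimal sketch of what a submission event and a moderator-facing summary could look like. Every field name, reason code, and explanation string below is invented for illustration. This is not the CrossClassify SDK or API, whose actual schema is not described in this article.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class ReviewRiskEvent:
    """Hypothetical payload sent when a review is submitted."""
    account_age_days: int
    action: str
    device_id: str
    employer_page: str
    traffic_source: str
    submitted_at: str  # ISO 8601 timestamp


@dataclass
class RiskDecision:
    """Hypothetical response: a score plus machine-readable reasons."""
    score: float
    reason_codes: list[str]


# Invented reason codes mapped to the explanations listed in the text.
REASON_TEXT = {
    "SHARED_DEVICE": "Account shares a device with several recent reviewers",
    "MANIPULATED_BROWSER": "Browser environment looks manipulated",
    "RATING_BURST": "Review is part of a sudden rating burst",
    "PASTED_CONTENT": "Typing flow suggests pasted content",
    "PRIOR_REJECTIONS": "Account is linked to previously rejected reviews",
}


def event_to_json(event: ReviewRiskEvent) -> str:
    """Serialise the event for transport."""
    return json.dumps(asdict(event), sort_keys=True)


def moderator_summary(decision: RiskDecision) -> str:
    """Render a decision as text; unknown codes are shown as-is."""
    lines = [f"Risk score: {decision.score:.2f}"]
    for code in decision.reason_codes:
        lines.append(f"- {REASON_TEXT.get(code, code)}")
    return "\n".join(lines)
```

Falling back to the raw code for unknown reasons means a moderator still sees something when the scoring side introduces a new reason before the display mapping is updated.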

CrossClassify’s account opening protection can also help at the point where fake reviewer accounts are created. The account opening solution shows how suspicious signup behavior can be scored before abusive accounts become active. In review platforms, stopping fake accounts early reduces pressure on the review moderation layer later.

Why this matters for employers

Employers care about Glassdoor because it affects candidate perception. A profile with suspicious reviews can hurt recruiting, employer brand investment, and internal confidence.

But employers also need a fair process. They should not be able to remove real criticism simply because it is uncomfortable. A strong review integrity system protects both sides.

If an employer reports a suspicious review, the platform can evaluate signals around that review instead of relying only on the employer’s claim. Did the reviewer account behave like a real user? Was there a suspicious device link? Was the review part of a cluster? Did similar accounts appear around the same time?
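The timing question can be checked with a sliding window over submission timestamps for one employer page. The sketch below is an assumption about how such a check might look, with a hypothetical function name and a window length the caller chooses. It reports the largest number of reviews that fall within any window of that length.

```python
from datetime import datetime, timedelta


def max_reviews_in_window(timestamps: list[datetime], window: timedelta) -> int:
    """Largest number of reviews inside any time span shorter than `window`.

    Uses a two-pointer sweep over the sorted timestamps. Two reviews exactly
    `window` apart are not counted as being in the same window.
    """
    ordered = sorted(timestamps)
    best = 0
    start = 0
    for end, current in enumerate(ordered):
        # Drop reviews that are too old to share a window with `current`.
        while current - ordered[start] >= window:
            start += 1
        best = max(best, end - start + 1)
    return best
```

A moderator could compare this count for the period around a disputed review against the employer's usual review rate. A high count is a prompt for closer inspection, not proof of a campaign, because events such as layoffs can trigger genuine bursts.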

This helps the platform respond with more confidence.

Why this matters for candidates

Candidates are the final trust audience. They are not just reading reviews for entertainment. They use workplace reviews to make decisions about applications, interviews, offers, relocation, and career risk.

If candidates believe Glassdoor reviews are fake, the product loses value. If they believe negative reviews are removed too easily, the product also loses value.

The ideal review integrity layer keeps real feedback visible and reduces manipulation.

That is why CrossClassify should position fake review detection as candidate trust protection, not only employer reputation protection.

Conclusion

Glassdoor fake review detection should not depend only on reviewing words on a screen. The strongest signals often come from account history, device intelligence, behavioral biometrics, review velocity, and graph relationships.

CrossClassify can help Glassdoor-style recruitment platforms protect review integrity without adding friction for honest reviewers. Its Review Integrity Intelligence module gives trust teams the evidence they need to detect fake reviews, coordinated campaigns, suspicious reviewer accounts, and employer reputation abuse earlier.

The better question is not simply how to remove fake Glassdoor reviews. The better question is how to stop fake review activity before it becomes part of the trust layer candidates depend on.

