Last Updated on 10 May 2026

AI Candidate Fraud Detection for Recruitment Platforms and ATS Systems

Introduction

AI has changed the shape of candidate fraud.

In the past, fake applications were often easier to spot. The resume looked weak. The profile looked incomplete. The message looked copied. Today, AI can make weak applications look polished, complete, and professional.

That creates a new problem for recruitment platforms and ATS systems. The content looks better, but the trust behind the content may be weaker.

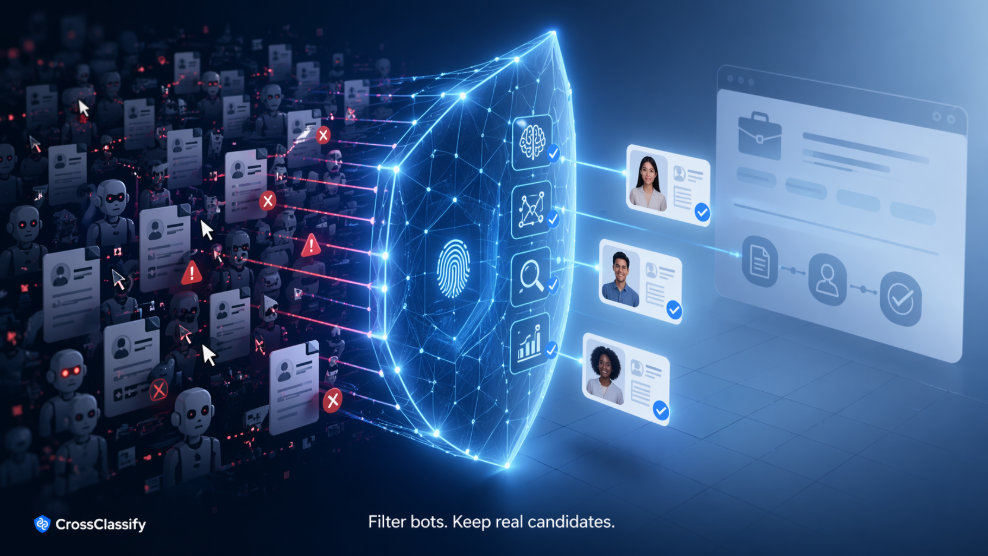

AI candidate fraud detection should not ask only whether a resume sounds good. It should ask whether the account, device, session, network, behavior, and application pattern can be trusted.

CrossClassify gives recruitment platforms and ATS systems a real-time fraud signal layer for detecting fake candidates, auto-apply abuse, bot activity, suspicious devices, duplicate identities, and application spam.

AI candidate fraud is broader than fake resumes

A fake resume is only one part of the problem.

AI candidate fraud can involve synthetic profiles, repeated applicant accounts, fake work histories, copied answers, deepfake interview preparation, auto-apply tools, and coordinated account farms. It can also involve real people using automation in ways that distort applicant quality and recruiter workload.

This means detection has to happen across the whole candidate journey.

At signup, the platform should understand whether the account looks suspicious. During application, it should understand whether behavior looks automated. Across repeated submissions, it should understand whether many candidates are connected by the same device, browser, network, or interaction pattern.
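The cross-submission check described above can be sketched as a simple grouping step. This is a minimal illustration and not CrossClassify's implementation: the `account_id` and `device_id` fields, the record layout, and the threshold of three accounts per device are all assumptions made for the example.

```python
from collections import defaultdict


def find_shared_devices(applications, max_accounts_per_device=3):
    """Return devices used by more distinct accounts than the threshold.

    `applications` is an iterable of dicts with the hypothetical keys
    "account_id" and "device_id".
    """
    accounts_by_device = defaultdict(set)
    for app in applications:
        accounts_by_device[app["device_id"]].add(app["account_id"])

    # Keep only devices whose distinct-account count exceeds the threshold.
    return {
        device: sorted(accounts)
        for device, accounts in accounts_by_device.items()
        if len(accounts) > max_accounts_per_device
    }


# Illustrative data: four accounts share "dev-1", one account uses "dev-2".
sample_applications = [
    {"account_id": "a1", "device_id": "dev-1"},
    {"account_id": "a2", "device_id": "dev-1"},
    {"account_id": "a3", "device_id": "dev-1"},
    {"account_id": "a4", "device_id": "dev-1"},
    {"account_id": "a1", "device_id": "dev-1"},  # repeat submission, same account
    {"account_id": "a5", "device_id": "dev-2"},
]

shared = find_shared_devices(sample_applications)
```

A real threshold would need tuning, because shared devices also occur legitimately, for example in libraries, career centers, or households.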

CrossClassify's recruitment solution is built for this broader view of hiring fraud. It supports fraud signals across candidate accounts, resumes, applications, and suspicious submission behavior.

Why recruitment platforms need a trust layer

Recruitment platforms are marketplaces of trust.

Employers trust that applicants are real. Candidates trust that opportunities are legitimate. Recruiters trust that the ATS reflects real demand. Product teams trust that growth metrics are meaningful.

When AI candidate fraud enters the system, all of that becomes weaker.

A job board may see more applications, but many of them may be low-trust. An ATS may show strong volume, but recruiters may find fewer useful candidates. A staffing firm may spend more time validating identity than advising clients.

A trust layer helps solve this. It does not replace the ATS. It protects the ATS.

CrossClassify can sit alongside existing recruitment workflows through APIs and SDKs. Its device fingerprinting solution helps platforms detect repeated devices, suspicious environments, and linked applicant behavior before fake candidates blend into the database.

The right signals for AI candidate fraud detection

AI candidate fraud detection works best when it combines multiple signal families.

Content signals can identify suspicious resume structure, repeated formatting, unnatural profile patterns, or inconsistencies in submitted information.

Behavioral signals can detect whether the applicant session looks human, assisted, scripted, rushed, or repeated.

Device signals can detect whether many identities are linked to the same device or browser.

Network signals can detect proxies, hosting infrastructure, impossible geography, or mismatched location claims.

Graph signals can connect accounts, sessions, jobs, devices, and behavior into a broader abuse pattern.
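To make the graph idea concrete, here is a hedged sketch that links accounts through any attribute they share and returns the resulting clusters. It uses a standard union-find structure. The attribute strings such as `device:x` and `ip:1` are made-up labels for illustration, and nothing here describes how CrossClassify builds its own graph.

```python
from collections import defaultdict


def connected_clusters(edges):
    """Group accounts linked, directly or indirectly, by a shared attribute.

    `edges` is a list of (account_id, attribute) pairs, for example
    ("a1", "device:x"). Returns clusters that contain two or more accounts.
    """
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    first_account_for = {}
    for account, attribute in edges:
        find(account)  # register the account even if it links to nothing
        if attribute in first_account_for:
            union(first_account_for[attribute], account)
        else:
            first_account_for[attribute] = account

    clusters = defaultdict(set)
    for account in {acct for acct, _ in edges}:
        clusters[find(account)].add(account)
    return sorted(sorted(c) for c in clusters.values() if len(c) > 1)


# a1 and a2 share a device, a2 and a3 share a network, a4 is unconnected.
sample_edges = [
    ("a1", "device:x"),
    ("a2", "device:x"),
    ("a2", "ip:1"),
    ("a3", "ip:1"),
    ("a4", "device:y"),
]
clusters = connected_clusters(sample_edges)
```

Note that a1 and a3 never share an attribute directly. They land in the same cluster only through a2, which is the kind of indirect link a per-account check would miss.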

CrossClassify's behavioral biometrics solution adds passive interaction intelligence to this stack. It helps detect risky activity without forcing every legitimate candidate through extra steps.
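As a rough illustration of what a passive behavioral signal might look like, the sketch below measures how regular the gaps between input events are. The heuristic (very uniform timing suggests scripted input), the function names, and the 0.15 cutoff are assumptions for this example only. They are not a description of CrossClassify's behavioral biometrics, which the article does not detail.

```python
from statistics import mean, pstdev


def timing_regularity(timestamps_ms):
    """Coefficient of variation of gaps between consecutive input events.

    Returns None when there are fewer than three events, since one gap
    says nothing about variability.
    """
    if len(timestamps_ms) < 3:
        return None
    gaps = [later - earlier for earlier, later in zip(timestamps_ms, timestamps_ms[1:])]
    average_gap = mean(gaps)
    if average_gap <= 0:
        return 0.0
    return pstdev(gaps) / average_gap


def looks_scripted(timestamps_ms, min_cv=0.15):
    """Flag sessions whose event timing is suspiciously uniform (assumed cutoff)."""
    cv = timing_regularity(timestamps_ms)
    return cv is not None and cv < min_cv


# Evenly spaced events versus irregular, human-like spacing (invented values).
uniform_session = [0, 50, 100, 150, 200]
irregular_session = [0, 120, 210, 480, 560]
```

A single heuristic like this would produce false positives on its own, for example with autofill or assistive technology, which is why it belongs in a stack of signals rather than acting as a gate.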

What ATS providers can show recruiters

Recruiters do not need a mysterious score.

They need clear context.

A good AI candidate fraud detection workflow should show simple reason codes. For example, this application may need review because the account applied to many jobs unusually quickly. This candidate profile may need review because the device is linked to multiple other applicants. This session may need review because the browser environment looks manipulated.
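The reason codes above could be represented as a small lookup that turns fired signals into recruiter-facing text. The code names below are hypothetical labels invented for this sketch, and the messages are adapted from the examples in the preceding paragraph.

```python
# Hypothetical reason codes mapped to the plain-language explanations
# a recruiter would see. The code names are illustrative only.
REASONS = {
    "HIGH_APPLICATION_VELOCITY": "The account applied to many jobs unusually quickly.",
    "SHARED_DEVICE": "The device is linked to multiple other applicants.",
    "MANIPULATED_BROWSER": "The browser environment looks manipulated.",
}


def review_reasons(signals):
    """Return (code, message) pairs for every signal that fired.

    `signals` is a dict of boolean flags keyed by reason code. Unknown
    codes are ignored so that new signals cannot break the display.
    """
    return [(code, message) for code, message in REASONS.items() if signals.get(code)]


fired = review_reasons({"SHARED_DEVICE": True, "HIGH_APPLICATION_VELOCITY": False})
```

The function returns explanations only. It sets no accept or reject outcome, which keeps the decision with the recruiter.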

That is useful. It gives the recruiter a reason to look closer. It does not tell the recruiter what decision to make.

CrossClassify's bot attack protection can support this by detecting automation patterns, scripted interaction, and abnormal submission behavior across recruitment workflows.
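One simple form of abnormal submission behavior is a burst of applications from a single account. The sliding-window check below is a generic sketch of that idea. The ten-minute window and the limit of five submissions are placeholder values, and the function is not part of any CrossClassify product.

```python
from collections import deque


def burst_detected(timestamps, window_seconds=600, max_in_window=5):
    """Return True if more than `max_in_window` submissions fall inside
    any span of `window_seconds`. `timestamps` are seconds since any epoch.
    """
    window = deque()
    for current in sorted(timestamps):
        window.append(current)
        # Drop submissions that are too old to share a window with `current`.
        while current - window[0] > window_seconds:
            window.popleft()
        if len(window) > max_in_window:
            return True
    return False


# Six applications within one minute versus six spread over about an hour.
rapid_submissions = [0, 10, 20, 30, 40, 50]
spaced_submissions = [0, 700, 1400, 2100, 2800, 3500]
```

Limits like these would normally differ by platform, since a motivated human applicant on a job board can legitimately apply to several roles in one sitting.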

Product integration model for recruitment SaaS

The integration path can be simple.

Add the web SDK to candidate signup and application submission flows. Send relevant server-side events to the CrossClassify API. Receive a fraud risk response with reason codes. Store the signal in the ATS record or review dashboard. Let recruiters and trust teams review suspicious submissions.
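The server-side half of that flow might look roughly like the sketch below. Everything specific in it is an assumption: the event fields, the `RiskResponse` shape, the 0.7 review threshold, and the `score_event` callable that stands in for the actual API call. The real request and response formats should be taken from the CrossClassify documentation.

```python
from dataclasses import dataclass, field


@dataclass
class RiskResponse:
    # Hypothetical response shape; the real API may return different fields.
    score: float
    reason_codes: list = field(default_factory=list)


def build_event(account_id, job_id, session_id, event_type="application_submitted"):
    """Assemble a server-side event. Field names are illustrative."""
    return {
        "type": event_type,
        "account_id": account_id,
        "job_id": job_id,
        "session_id": session_id,
    }


def handle_submission(event, score_event, ats_record, review_threshold=0.7):
    """Score an event and store the result on the ATS record.

    `score_event` is any callable that sends the event to the fraud API
    and returns a RiskResponse. It is injected so the HTTP client can be
    swapped or stubbed in tests.
    """
    response = score_event(event)
    ats_record["fraud_score"] = response.score
    ats_record["fraud_reasons"] = list(response.reason_codes)
    # Flag for human review only; no application is rejected automatically.
    ats_record["needs_review"] = response.score >= review_threshold
    return ats_record


def stub_score_event(event):
    """Stand-in for the real API call, returning a fixed response."""
    return RiskResponse(score=0.82, reason_codes=["SHARED_DEVICE"])


record = handle_submission(
    build_event("a1", "job-42", "sess-9"),
    stub_score_event,
    {"candidate": "a1"},
)
```

Passing the scoring call in as a parameter keeps the ATS logic independent of the vendor client, so the same handler works with a stub in tests and a real client in production.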

For ATS providers, this can become a premium trust and safety feature. For staffing firms, it can become an internal quality control layer. For job boards, it can protect employer trust. For recruitment agencies, it can reduce time wasted on fake or low-trust submissions.

CrossClassify's account opening solution is relevant because many fake candidate problems start at account creation. If suspicious accounts can be detected early, the rest of the recruitment workflow becomes cleaner.

Conclusion

AI candidate fraud detection is not about banning AI from hiring.

It is about protecting trust in the candidate pipeline.

Recruitment platforms and ATS systems need signals that explain suspicious behavior, detect automation, connect repeated identities, and support human review. CrossClassify provides that trust layer through behavioral biometrics, device fingerprinting, bot detection, link analysis, and explainable fraud indicators.
