Last Updated on 06 Apr 2026

OWASP A10:2025 Mishandling of Exceptional Conditions Prevention Guide

Introduction

OWASP Top 10 remains one of the most widely used awareness frameworks for web application security because it translates sprawling vulnerability research into a practical list that engineering, security, and product teams can act on. The 2025 release is based on updated data and industry trends, and OWASP says it drew on testing data covering more than 2.8 million applications. That scale matters because it means the list is not just theoretical; it reflects what is actually being found in modern software. In that release, A10 Mishandling of Exceptional Conditions appears as a new category, a strong signal that poor error handling, fail open behavior, and unsafe reactions to abnormal states have moved from "quality issue" territory into mainstream security risk territory.

This category matters because attackers do not only attack the happy path. They attack timeouts, malformed requests, missing parameters, race conditions, partial transaction failures, and any point where the application becomes uncertain about what to do next. OWASP's own score table for A10 reports 24 mapped CWEs, an average incidence rate of 2.95 percent, 769,581 total occurrences, and 3,416 CVEs, which is a substantial volume for a category that was only formalized in 2025. Public fraud loss figures mapped specifically to A10 are still immature because the category is new, but the broader threat environment is already clear. Imperva reports that bad bots now account for 37 percent of all internet traffic and that automated traffic has risen to 51 percent of all web traffic, while Verizon says about 88 percent of breaches in its Basic Web Application Attacks pattern involved stolen credentials. That combination is why exceptional condition handling can no longer be treated as a narrow coding concern: modern attackers use automation and credential abuse at scale to force systems into abnormal states and then monetize the weak response.

For fraud focused application owners, the security lesson is simple. A login that technically succeeds is not enough, and an error page that looks "clean" to a user is not enough either. What matters is whether the system fails safely, logs meaningfully, alerts fast enough, and keeps evaluating the trustworthiness of the session when reality diverges from design assumptions. That is the point where OWASP A10 intersects directly with account takeover protection, account opening protection, bot and abuse protection, device fingerprinting, and behavioral biometrics.

Top 10:2025 List

- A01:2025 Broken Access Control

Broken Access Control remains number one because applications still allow users and systems to reach data or functions they should not reach. OWASP says it stayed at number one in 2025, and SSRF was rolled into this category as part of that broader access control picture.

- A02:2025 Security Misconfiguration

Security Misconfiguration moved up to number two because modern applications rely heavily on settings, cloud policies, defaults, services, and deployment options that can quietly widen the attack surface. OWASP reports this category grew in prevalence in the 2025 cycle, which reflects how configuration is now a major part of application behavior.

- A03:2025 Software Supply Chain Failures

This category expands the older vulnerable components focus into the wider dependency, build, and distribution ecosystem. OWASP notes that it had limited testing visibility in collected data, yet still showed very high average exploit and impact scores and ranked as a top community concern.

- A04:2025 Cryptographic Failures

Cryptographic Failures moved down in ranking, but not because they stopped being dangerous. OWASP states that this category still often leads directly to sensitive data exposure and system compromise.

- A05:2025 Injection

Injection remains one of the most tested and most recognizable categories, covering flaws that let attackers influence interpreters, queries, templates, and commands. OWASP highlights that the category spans everything from relatively frequent lower impact issues to less frequent but very high impact flaws such as SQL injection.

- A06:2025 Insecure Design

Insecure Design focuses on architectural and workflow weaknesses that survive even when code quality looks strong. OWASP says industry practice has improved since 2021 through stronger threat modeling and more attention to secure design, but the category remains central because flawed design choices still create exploitable systems.

- A07:2025 Authentication Failures

Authentication Failures kept its place at number seven, with a slight name change to better reflect what the category covers. OWASP suggests the broader adoption of standardized authentication frameworks may be helping somewhat, but failures in trust establishment still remain highly relevant.

- A08:2025 Software or Data Integrity Failures

This category focuses on trust boundaries and verification of software, code, and data artifacts. OWASP distinguishes it from supply chain failure by placing the emphasis lower in the stack, where integrity assumptions can still collapse even without a dramatic upstream compromise.

- A09:2025 Security Logging and Alerting Failures

A09 stays at number nine and was renamed to stress that logging without alerting is not enough. OWASP explicitly says good logs with no alerting have minimal value for identifying incidents in time to act.

- A10:2025 Mishandling of Exceptional Conditions

A10 is new in 2025 and captures improper error handling, logic errors, fail open behavior, and unsafe responses to abnormal conditions. OWASP introduced it because these weaknesses were too often dismissed as generic code quality issues even though they can directly produce serious security failures.

Definition and Causes of Mishandling of Exceptional Conditions

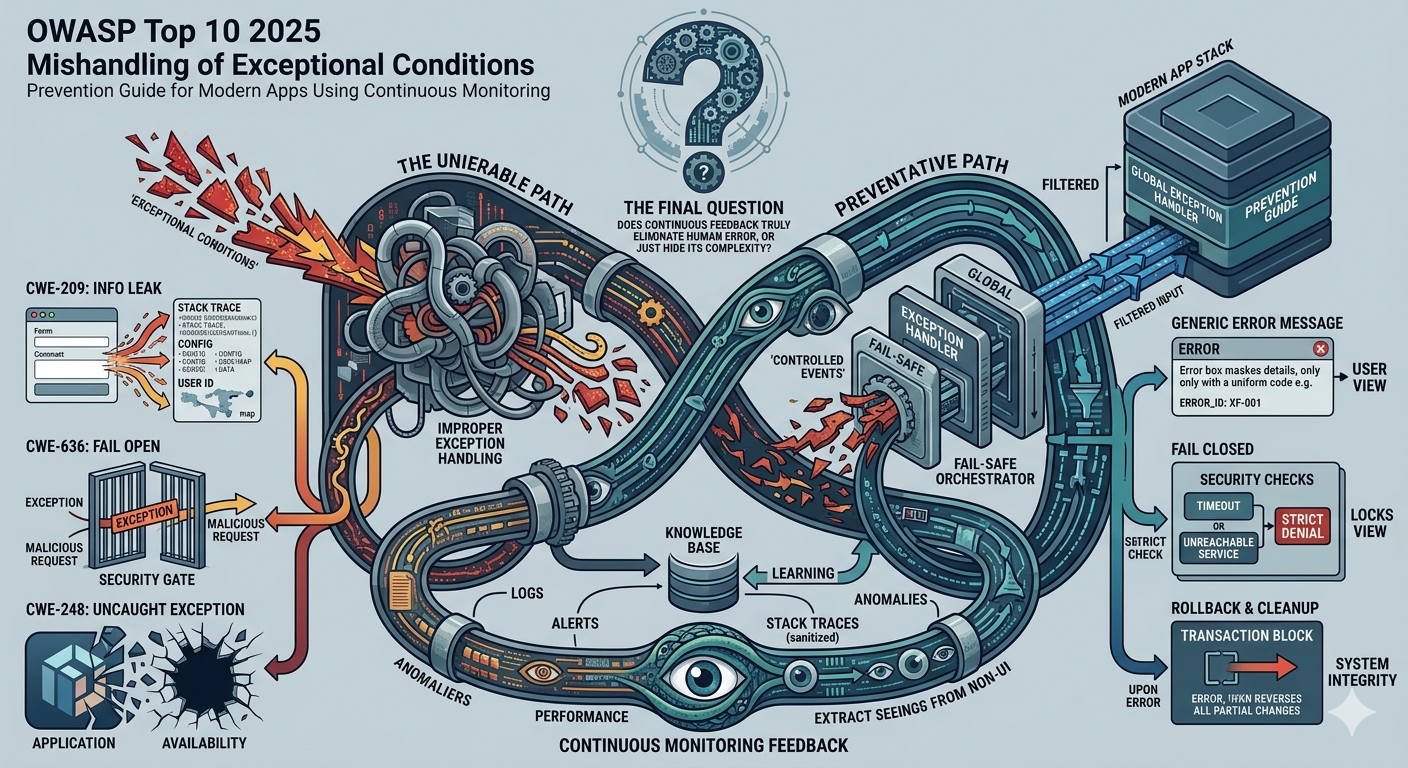

Mishandling of Exceptional Conditions is OWASP's new 2025 category for the situations where software fails to prevent, detect, or safely respond to unusual and unpredictable conditions. OWASP says the category maps 24 CWEs and explicitly calls out problems such as error messages containing sensitive information, missing parameter handling failures, null pointer dereferences, privilege handling mistakes, and not failing securely. OWASP also reports an average weighted exploit score of 7.11 and an average weighted impact score of 3.81 for the category, which shows that these are not harmless edge cases. In other words, A10 is about the security consequences of software uncertainty.

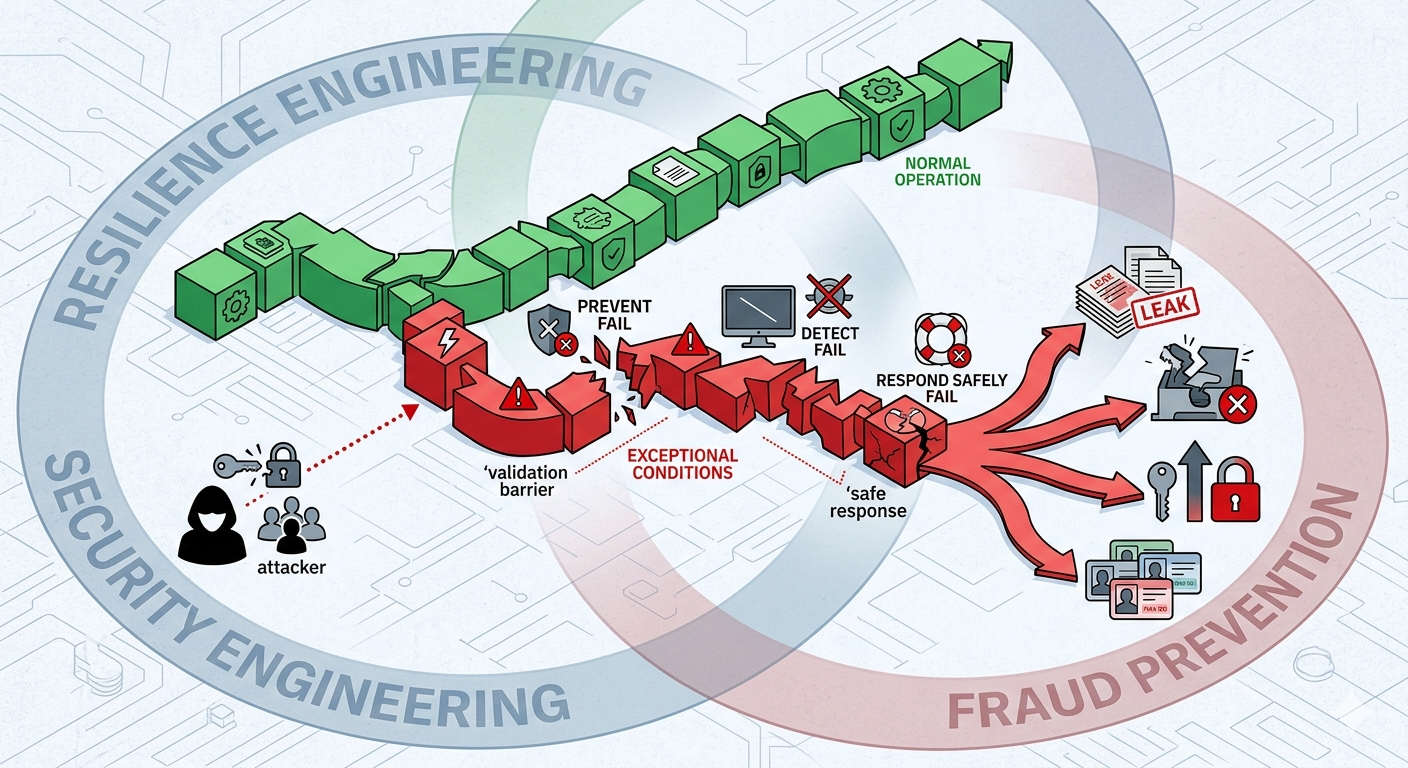

The category is broader than "bad error pages." OWASP explains that exceptional conditions can be caused by incomplete input validation, late or inconsistent error handling, unhandled exceptions, and environmental problems such as memory, privilege, or network issues. SecureFlag describes the same family of failures as systems that do not anticipate, detect, or correctly handle unexpected or faulty conditions, which leaves them vulnerable to crashes, information leaks, unauthorized actions, and data corruption. That framing matters because it makes clear that A10 sits at the intersection of security engineering, resilience engineering, and fraud prevention, not in a narrow debugging corner.

OWASP's own definition is especially useful because it breaks the failure into three parts. The application may fail to prevent the unusual situation, fail to identify it while it is happening, or fail to respond safely after it occurs. Authgear's practical summary of A10 aligns with this and explains that attackers actively provoke odd states, malformed inputs, and failure conditions precisely to see whether the system leaks information, crashes, skips controls, or defaults to allow. That is why Mishandling of Exceptional Conditions is deeply relevant to fraud types such as account takeover, account opening fraud, and bot abuse, because the attacker's objective is often not the error itself but the weak state transition that follows it.

Variations of Mishandling of Exceptional Conditions

OWASP does not publish a separate frequency table for each individual variation inside A10, so the cleanest public benchmark remains the category level score table: 24 mapped CWEs, 769,581 occurrences, 3,416 CVEs, and an average incidence rate of 2.95 percent. That means the best way to discuss variations is to combine OWASP's mapped CWE list with well documented public cases and testing guidance. This is more honest than pretending the ecosystem already has stable per symptom market statistics for a category that only became formalized in 2025.

Sensitive error disclosure and reconnaissance leakage

One common variation is exposing internal details in user visible errors, such as stack traces, SQL errors, file paths, framework versions, or raw debug output. OWASP's community page on Improper Error Handling warns that these messages reveal implementation details that should never be shown to attackers, while A10's mapped CWEs explicitly include CWE 209 and CWE 550 for sensitive information disclosure in error messages. A current public example is CVE 2025 62168, where NVD describes Squid versions prior to 7.2 as failing to redact HTTP authentication credentials in error handling, allowing information disclosure; NVD also lists the issue as CVSS 3.x base score 7.5 High. This is the purest A10 pattern, an abnormal condition becomes a reconnaissance surface and then becomes a security exposure.

Fail open authorization and privilege handling

Another dangerous variation is when a system encounters an error and defaults to allow instead of deny. OWASP explicitly maps CWE 636 Not Failing Securely, plus privilege handling weaknesses such as CWE 274 and CWE 280, into this category, and Authgear's A10 guide describes fail open behavior as one of the clearest ways attackers convert edge cases into access bypass. This variation is especially dangerous in authentication, authorization, API gateway, and transaction approval logic because the abnormal condition itself may look minor while the resulting trust decision is catastrophic. In fraud terms, this is how an error becomes unauthorized continuation of a high risk journey.
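A minimal sketch of the deny by default pattern may make this concrete. It assumes a hypothetical `policy_lookup` callable standing in for whatever authorization backend an application uses; the names `is_allowed` and `PolicyServiceError` are illustrative only and do not come from OWASP or any specific library.

```python
import logging

logger = logging.getLogger("authz")


class PolicyServiceError(Exception):
    """Raised when the (hypothetical) policy backend cannot answer."""


def is_allowed(user_id: str, action: str, policy_lookup) -> bool:
    """Return True only when the policy backend explicitly grants access.

    Any exception or unexpected return value results in a deny, so an
    error inside the check can never be converted into access.
    """
    try:
        decision = policy_lookup(user_id, action)
    except Exception:
        # Fail closed: record the failure for investigation, then deny.
        logger.exception("policy lookup failed user=%s action=%s", user_id, action)
        return False
    # Only the literal boolean True counts as a grant; None, 1, "yes" do not.
    return decision is True
```

The design choice worth noting is that the grant is the only explicit branch. Every other outcome, including ones nobody anticipated, falls through to deny.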

Resource exhaustion and exception storms

A10 also includes the class of problems where exceptions are caught badly, cleanup is skipped, or the system continues consuming locks, memory, file handles, or compute until availability collapses. OWASP's testing guide notes that testers should intentionally force edge cases and malformed requests because these can produce deadlocks, unhandled exceptions, or denial of service conditions, and Cisco's CVE 2021 34781 is a clear real world example: NVD says the issue resulted from improper error handling when an SSH session failed to establish, allowing a remote attacker to trigger resource exhaustion and requiring a manual reload to recover. This variation matters for fraud and abuse because attackers do not need to steal data to win if they can knock critical controls or business flows offline.

Partial transaction failure and state corruption

OWASP's own A10 page gives a financial transaction scenario that is highly relevant to fraud. It describes an attacker interrupting a multi step transaction after debit but before credit or proper transaction logging, creating state corruption when the system does not roll the whole process back and fail closed. This is not just an engineering reliability issue, because once money movement, entitlement changes, payout instructions, or destination edits happen in a partially committed state, an attacker may be able to monetize the inconsistency.

Missing parameters, unchecked returns, and inconsistent exception handling

A subtler but very common variation is the family of flaws where the application misses a required input, ignores a return value, catches exceptions too generically, or handles the same class of error differently in different services. OWASP maps CWE 234, CWE 252, CWE 390, CWE 391, CWE 394, CWE 396, and CWE 397 into A10, and SecureFlag notes that these inconsistent responses are exactly what leave systems in unsafe states without visibly "crashing." The practical risk is that an attacker only needs one weak branch in one API or one service to bypass a check, skip a cleanup, or continue execution after something important already failed.

Real Examples of Mishandling of Exceptional Conditions

CVE 2021 34781, Cisco Firepower Threat Defense denial of service

NVD describes CVE 2021 34781 as a vulnerability in Cisco Firepower Threat Defense where a lack of proper error handling during failed SSH session establishment let an unauthenticated remote attacker cause a denial of service. The key lesson is not only that the device could be exhausted and taken offline, but that the path to failure was built out of abnormal session handling rather than a classic exploit chain. NVD further explains that successful exploitation could cause resource exhaustion and require the device to be manually reloaded, which shows how exceptional condition handling can directly become an availability crisis. This is a strong example of A10 because the attacker weaponized the system's reaction to failure, not a normal business feature.

CVE 2025 62168, Squid Proxy credential disclosure

NVD describes CVE 2025 62168 as a failure to redact HTTP authentication credentials in Squid error handling, which allowed information disclosure and exposed credentials a trusted client used to authenticate. The flaw is mapped to CWE 209 and CWE 550, and the description makes clear that the abnormal error path itself became the data leakage mechanism. This is the archetypal example of why "just hide the stack trace" is not enough as a defense mindset, because any generated diagnostic path, support link, or administrative error output can become a channel for secrets if the design assumes the exception path is harmless.

Transaction integrity breakdowns and unsafe continuation

OWASP's own example attack scenario for A10 describes state corruption in financial transactions when a multi step process is interrupted and the system fails to roll back the entire flow. SecureFlag echoes this risk by warning that if an application continues executing in an unsafe state, it can produce unauthorized actions and inconsistent data rather than a clean stop. This is particularly important for payment, wallet, marketplace, shipping, and admin approval workflows where one interrupted dependency call, timeout, or partial write can create a monetizable inconsistency.

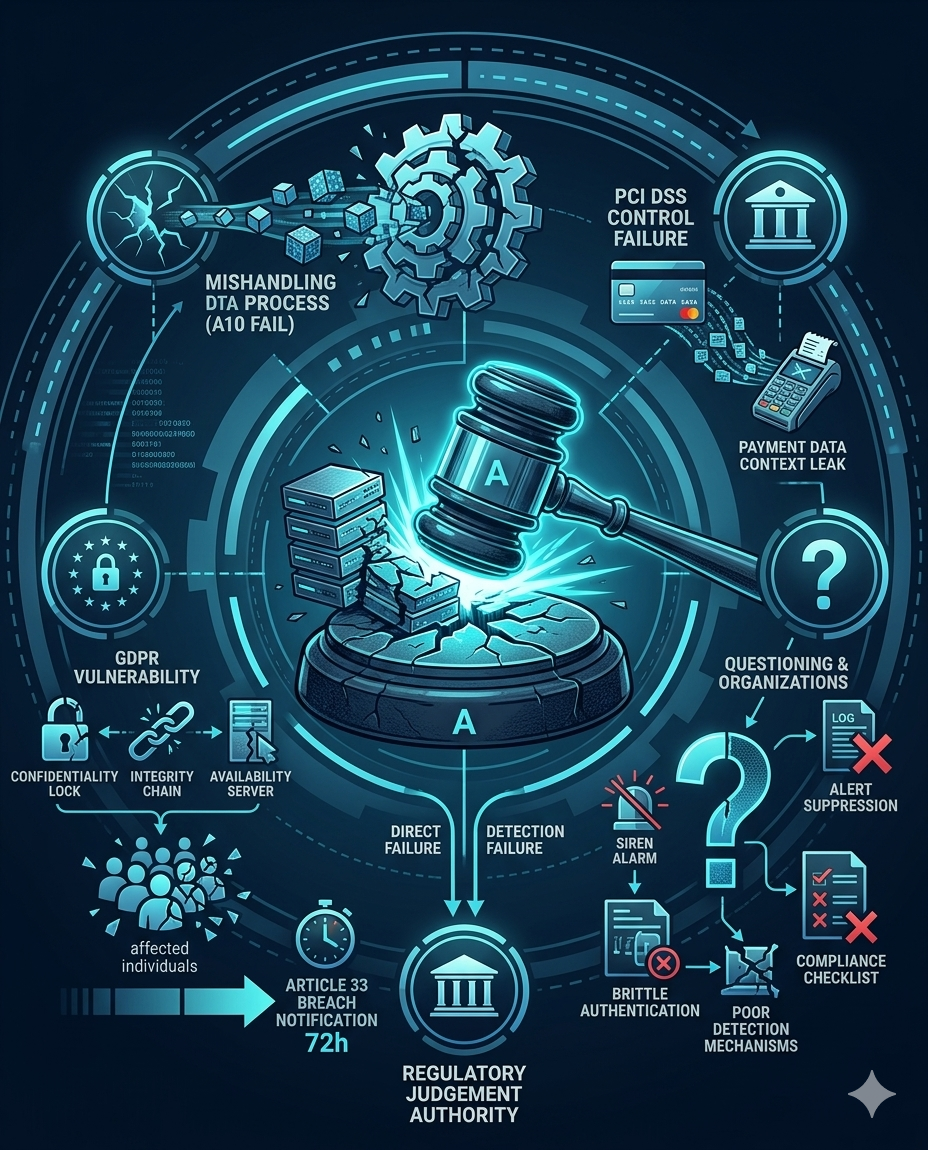

Regulatory Compliance of Mishandling of Exceptional Conditions

From a GDPR perspective, Mishandling of Exceptional Conditions becomes a compliance issue as soon as the unsafe error path affects the confidentiality, integrity, or availability of personal data. Article 32 requires controllers and processors to implement technical and organizational measures appropriate to the risk, and explicitly includes the ongoing confidentiality, integrity, availability, and resilience of processing systems as part of that duty. Article 33 adds a breach notification duty within 72 hours where required, which means a badly handled exceptional condition can create both the security failure and the reporting obligation. So while regulators usually do not label a case "OWASP A10," the legal obligation clearly covers the kinds of unsafe states, information leaks, and resilience failures that A10 is about.

Recent CNIL enforcement shows how this plays out in practice. In January 2026, CNIL fined FREE MOBILE and FREE a combined €42 million, stating that certain basic security measures were missing, the VPN authentication procedure was not sufficiently robust, and the measures deployed to detect abnormal behavior were ineffective; CNIL also criticized the company's communication to affected individuals. In the same month, CNIL fined FRANCE TRAVAIL €5 million for failing to ensure the security of job seekers' data, saying the technical and organizational measures in place were inadequate. These cases are not framed as "A10 fines," but they strongly support the compliance point that brittle security controls, weak detection, and poor handling of abnormal conditions can create direct GDPR exposure under Article 32 and breach related duties.

PCI DSS reaches the same conclusion from the payment side. PCI SSC says PCI DSS v4.0.1 is the current limited revision of the standard, and the SAQ D materials show that Requirement 6.2 requires bespoke and custom software to be developed securely with secure authentication and logging in mind, while Requirements 10.4 and 10.4.1 require audit logs to be reviewed for anomalies and suspicious activity, including the use of automated mechanisms for log review. If a checkout system leaks sensitive payment context in an error path, fails to roll back properly, or suppresses alerts around repeated exceptional conditions, the issue is no longer just "bad engineering"; it becomes a payment security control failure.

Protection and Prevention Methods for Mishandling of Exceptional Conditions

1. Catch errors close to where they occur

OWASP's first recommendation is direct and correct: catch every possible system error as close as possible to where it occurs and do something meaningful to recover or stop safely. The people who should apply this are software engineers, API developers, and platform teams, because they are the ones defining how the application behaves when dependencies, parsing, memory, or authorization checks fail. This method is highly effective for shrinking unsafe state transitions because it prevents the application from drifting too far away from the original failure context.
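As a rough illustration of handling a failure at the point where it happens, the sketch below wraps a single dependency call. It retries a bounded number of times on transient errors (recover) and otherwise raises one domain level error (stop safely). The names `call_dependency` and `DependencyUnavailable` are assumptions made for this example, and backoff and jitter are deliberately left out.

```python
import time


class DependencyUnavailable(Exception):
    """Domain-level error raised once local recovery has been exhausted."""


def call_dependency(call, attempts: int = 3, delay_seconds: float = 0.0):
    """Invoke `call()` and handle transient failures right here.

    Only timeouts and connection errors are retried. Anything else is
    treated as a bug and propagates unchanged so it is not masked.
    """
    last_error = None
    for _ in range(attempts):
        try:
            return call()
        except (TimeoutError, ConnectionError) as exc:
            last_error = exc
            if delay_seconds > 0:
                time.sleep(delay_seconds)
    # Stop safely: one well-defined error instead of a half-finished state.
    raise DependencyUnavailable(f"gave up after {attempts} attempts") from last_error
```

Because the retry limit and the final error are decided next to the call, callers further up the stack only ever have to reason about one failure type.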

2. Use a global exception handler, but do not rely on it alone

OWASP also recommends a global exception handler as a backstop for anything missed locally. This should be implemented by application architects, framework owners, and platform engineering teams because it needs to be standardized across services and aligned with logging, masking, and support workflows. The method is effective as a last line of defense because it reduces total blind spots and helps avoid raw crash behavior or full stack trace exposure.
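One way to picture such a backstop is a small WSGI middleware, sketched here with the standard library only. The wrapper name `safe_errors`, the logger name, and the wording of the user message are assumptions for illustration; real frameworks usually provide their own hook for this.

```python
import logging
import sys
import uuid

logger = logging.getLogger("app.errors")


def safe_errors(app):
    """Wrap a WSGI app with a last-resort handler for uncaught exceptions."""

    def middleware(environ, start_response):
        try:
            return app(environ, start_response)
        except Exception:
            # Full detail goes to the internal log, keyed by a reference ID.
            reference = uuid.uuid4().hex
            logger.exception(
                "unhandled error ref=%s path=%s", reference, environ.get("PATH_INFO")
            )
            # The client sees only a generic message plus the reference.
            body = f"Something went wrong. Reference: {reference}".encode("utf-8")
            headers = [
                ("Content-Type", "text/plain; charset=utf-8"),
                ("Content-Length", str(len(body))),
            ]
            # exc_info lets the server replace headers that were set but not yet sent.
            start_response("500 Internal Server Error", headers, sys.exc_info())
            return [body]

    return middleware
```

This sketch only covers exceptions raised while the wrapped app is being called. Errors raised later, while a lazily produced response body is iterated, would need additional handling.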

3. Fail closed and roll back full transactions

OWASP is especially explicit that partial transaction recovery is dangerous and that systems should roll back the entire transaction and fail closed. Engineering teams, payment teams, and business workflow owners should apply this because the risk is often hidden in multi step logic, not just in infrastructure. This control is one of the most effective defenses against fraud oriented exceptional condition abuse because it prevents attackers from profiting from half finished account, payout, balance, or entitlement states.
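The rollback idea can be sketched with Python's built-in `sqlite3` module, whose connection object commits on success and rolls back when the `with` block raises. The `accounts` table, its columns, and the `transfer` function are hypothetical and exist only to show the debit and credit succeeding or failing as one unit.

```python
import sqlite3


class TransferFailed(Exception):
    """Raised when a transfer is refused or rolled back."""


def transfer(conn: sqlite3.Connection, src: str, dst: str, amount: int) -> None:
    """Move `amount` from src to dst atomically; any failure undoes both steps."""
    if amount <= 0:
        raise TransferFailed("amount must be positive")
    try:
        with conn:  # commits on success, rolls back if the block raises
            debited = conn.execute(
                "UPDATE accounts SET balance = balance - ? "
                "WHERE id = ? AND balance >= ?",
                (amount, src, amount),
            )
            if debited.rowcount != 1:
                raise TransferFailed("insufficient funds or unknown source")
            credited = conn.execute(
                "UPDATE accounts SET balance = balance + ? WHERE id = ?",
                (amount, dst),
            )
            if credited.rowcount != 1:
                # The debit above is undone by the rollback.
                raise TransferFailed("unknown destination")
    except sqlite3.Error as exc:
        raise TransferFailed("storage error, transfer rolled back") from exc
```

If the credit step fails after the debit has already run, the source balance returns to its previous value, so there is no half finished state left to exploit.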

4. Add rate limiting, resource quotas, and throttling

OWASP explicitly says that nothing in information technology should be limitless because limitless behavior leads to resilience failures, denial of service, brute force success, and extraordinary cloud bills. This control belongs to API engineers, SRE teams, infrastructure teams, and bot defense owners because it sits across edge controls, services, queues, and compute usage. It is highly effective against automated abuse and error amplification attacks because it reduces the attacker's ability to manufacture massive exceptional condition volume.
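A token bucket is one common way to put a ceiling on request volume, though it is not the only one. The sketch below is a single process, in memory illustration; the class name `TokenBucket` and its parameters are assumptions, and a production deployment would typically keep one bucket per client in shared storage.

```python
import time


class TokenBucket:
    """Allow short bursts up to `capacity`, then a steady refill rate."""

    def __init__(self, capacity: int, refill_per_second: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock  # injectable so the behavior can be tested
        self._tokens = float(capacity)
        self._updated = clock()

    def allow(self) -> bool:
        """Consume one token if available; otherwise reject the request."""
        now = self._clock()
        elapsed = now - self._updated
        self._updated = now
        # Refill in proportion to elapsed time, never above capacity.
        self._tokens = min(
            float(self.capacity), self._tokens + elapsed * self.refill_per_second
        )
        if self._tokens >= 1.0:
            self._tokens -= 1.0
            return True
        return False
```

A caller would check `bucket.allow()` before doing any expensive work and return a throttling response when it is `False`, so rejected requests stay cheap.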

5. Apply strict input validation and safe sanitization

OWASP recommends strict input validation, with sanitization or escaping where hazardous characters must be accepted. This should be applied by application developers, API designers, and quality engineers because many exceptional conditions begin with malformed, missing, over sized, or semantically invalid inputs. The method is very effective because it stops a large share of unsafe states before deeper logic, interpreters, or dependencies ever see them.
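The following sketch shows allowlist style validation for a hypothetical payout request. The field names, the username pattern, the currency list, and the amount ceiling are all invented for illustration; what matters is that missing, extra, wrongly typed, and out of range values are all rejected before any business logic runs.

```python
import re

USERNAME_RE = re.compile(r"[a-z0-9_]{3,32}")
ALLOWED_CURRENCIES = {"EUR", "USD", "GBP"}
ALLOWED_FIELDS = {"username", "currency", "amount_cents"}
MAX_AMOUNT_CENTS = 1_000_000


class ValidationError(ValueError):
    """Raised when a request does not match the expected shape."""


def validate_payout_request(payload) -> dict:
    """Return a cleaned copy of the payload or raise ValidationError."""
    if not isinstance(payload, dict):
        raise ValidationError("payload must be an object")
    if set(payload) - ALLOWED_FIELDS:
        raise ValidationError("unexpected fields")

    username = payload.get("username")
    # fullmatch rejects trailing newlines and anything outside the allowlist.
    if not isinstance(username, str) or not USERNAME_RE.fullmatch(username):
        raise ValidationError("invalid username")

    currency = payload.get("currency")
    if not isinstance(currency, str) or currency not in ALLOWED_CURRENCIES:
        raise ValidationError("unsupported currency")

    amount = payload.get("amount_cents")
    # bool is a subclass of int in Python, so it is rejected explicitly.
    if isinstance(amount, bool) or not isinstance(amount, int):
        raise ValidationError("amount must be an integer")
    if not 0 < amount <= MAX_AMOUNT_CENTS:
        raise ValidationError("amount out of range")

    return {"username": username, "currency": currency, "amount_cents": amount}
```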

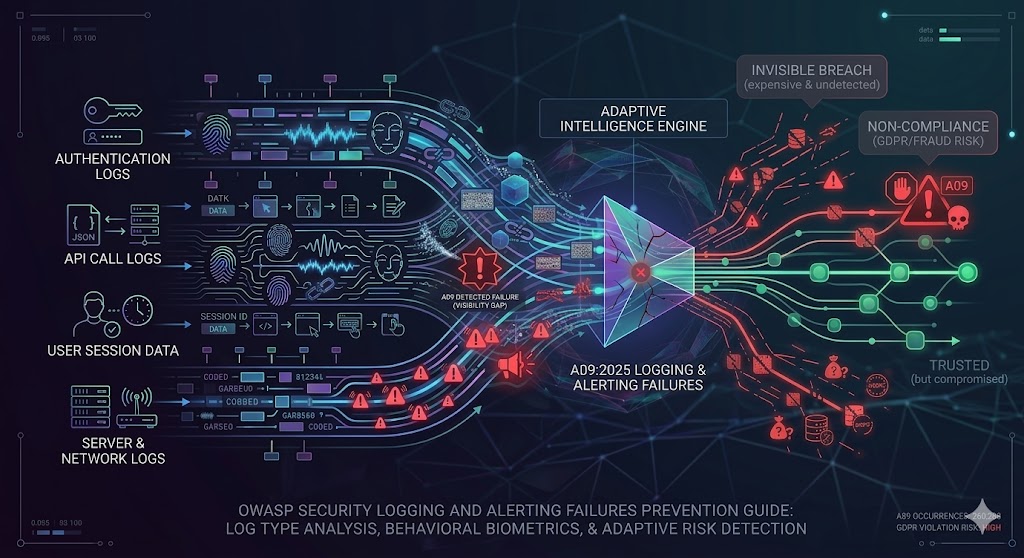

6. Centralize logging, monitoring, and alerting

OWASP's A10 guidance and A09 guidance fit together closely here. A10 says applications should log events, issue alerts where justified, and use monitoring or observability that watches for repeated errors or attack patterns, while A09 stresses that logs without alerting are of limited practical value. This control should be owned jointly by engineering, security operations, fraud operations, and observability teams because the goal is not only to record exceptional conditions but to create action from them.
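To illustrate the step from recording to acting, here is a small sliding window counter that calls a notification hook once an error key crosses a threshold. The class name `ErrorRateAlert`, the key format, and the `notify` callback are assumptions; in practice this logic usually lives in a monitoring or SIEM platform rather than in application code.

```python
from collections import defaultdict, deque


class ErrorRateAlert:
    """Fire `notify(key, count)` when `threshold` errors land within the window."""

    def __init__(self, threshold: int, window_seconds: float, notify):
        self.threshold = threshold
        self.window_seconds = window_seconds
        self.notify = notify
        self._events = defaultdict(deque)  # key -> timestamps inside the window

    def record(self, key: str, timestamp: float) -> bool:
        """Record one error; return True if this event triggered an alert."""
        events = self._events[key]
        events.append(timestamp)
        # Drop timestamps that have aged out of the window.
        while events and events[0] <= timestamp - self.window_seconds:
            events.popleft()
        if len(events) >= self.threshold:
            self.notify(key, len(events))
            events.clear()  # avoid re-alerting on every following event
            return True
        return False
```

For example, `ErrorRateAlert(threshold=20, window_seconds=60, notify=page_on_call)` would page once a single error key occurs twenty times within a minute, where `page_on_call` is whatever hypothetical hook the team already uses.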

7. Aggregate repeated identical errors as statistics, not just raw noise

OWASP makes a subtle but important point by suggesting that repeated identical errors above a threshold may be better represented as statistics appended to the original message rather than as endless duplicate lines. This is a control for observability teams, SIEM owners, and platform operators because it is about preserving actionable signal and avoiding monitoring blind spots caused by log floods. Its effectiveness is high in bot and abuse scenarios because attackers often weaponize repetition, and summarized repetition can reveal the campaign more clearly than millions of duplicate entries.
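A simple way to picture this is an aggregator that lets the first few copies of a message through and then replaces the rest with a periodic summary. The class `ErrorAggregator`, the summary wording, and the flush model are assumptions for this sketch rather than anything OWASP prescribes.

```python
from collections import Counter
from typing import List, Optional


class ErrorAggregator:
    """Pass through the first `threshold` copies of a message, then count."""

    def __init__(self, threshold: int):
        self.threshold = threshold
        self._counts = Counter()

    def observe(self, message: str) -> Optional[str]:
        """Return the line to write to the log, or None to suppress it."""
        self._counts[message] += 1
        if self._counts[message] <= self.threshold:
            return message
        return None

    def flush(self) -> List[str]:
        """Summarize messages that exceeded the threshold, then reset counts."""
        lines = [
            f"{message} [repeated {count} times in this interval]"
            for message, count in self._counts.items()
            if count > self.threshold
        ]
        self._counts.clear()
        return lines
```

Calling `flush()` on a timer, for instance once a minute, keeps the total count visible to analysts while the log itself stays readable during a flood.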

8. Build security requirements and threat modeling into design

OWASP recommends project security requirements plus threat modeling and secure design review in the design phase, not after deployment. Product security, architects, senior engineers, and business workflow owners should apply this because many A10 problems are really undefined behavior problems, cases where nobody decided what safe failure should look like. This method is highly effective because it reduces the number of "unknown unknowns" that reach production and forces teams to design unhappy path behavior explicitly.

9. Use code review, static analysis, stress testing, performance testing, and penetration testing together

OWASP explicitly calls for code review or static analysis, along with stress, performance, and penetration testing of the final system. This is a shared responsibility across AppSec, QA, developers, and red teams because exceptional condition bugs can appear in source logic, runtime behavior, or system interactions under load. The method is effective because no single test style catches every A10 issue: code review finds logic mistakes, dynamic tests expose unsafe outputs, and stress testing reveals the brittle states that only appear under pressure.

10. Standardize exceptional condition handling across the organization

OWASP's final point is that, where possible, the entire organization should handle exceptional conditions in the same way because this makes review and audit easier. This belongs to engineering leadership, platform owners, architecture councils, and security governance teams because consistency only becomes real when it is implemented as a shared standard, not a local preference. The method is effective for long term quality because it reduces exception logic drift across products and services.

Protection Tools for Mishandling of Exceptional Conditions

The most obvious tool family for A10 is DAST, and OWASP's Web Security Testing Guide supports that view directly. The testing guide for improper error handling tells testers to trigger errors by requesting invalid resources, breaking HTTP expectations, and fuzzing application input points so they can identify existing error output and analyze the different responses returned by the system. That makes DAST especially useful for discovering verbose errors, stack traces, unsafe status handling, and input driven exception behavior in the running application. For A10, DAST works best when it is not treated as a compliance checkbox but as a deliberate campaign to provoke the system outside the happy path.

DAST is necessary, but it is not sufficient. OWASP's own A10 text makes clear that the category also includes business logic abuse, partial transaction failure, fail open behavior, and long lived unsafe states, which means fuzzing, observability, log analytics, and transaction aware abuse testing are also important. In practice, the strongest tool stack for A10 combines DAST, negative path API testing, stress testing, centralized logging, alerting, and runtime anomaly detection. That is also why intelligent monitoring matters so much here: many exceptional condition failures are not one request problems but pattern problems that only become visible across sessions, devices, retries, and repeated errors over time.

The Gap That Still Exists After You Hide the Error Page

Many teams think they have solved Mishandling of Exceptional Conditions once users stop seeing stack traces. That is necessary, but it is nowhere near enough. The deeper problem is that developers spend most of their time on the happy path, while attackers spend their time on the messy terrain of retries, timeouts, broken dependencies, repeated failed attempts, impossible sequences, and suspicious recovery patterns. OWASP A10 is really about what happens in that off road space, and whether the application continues to make safe trust decisions there.

What users should not see is stack traces, SQL queries, file paths, server versions, or internal diagnostics. What users should see is a generic, understandable message, a support reference ID, and a safe path forward such as "Please try again" or a guided retry after the system has failed closed. OWASP's improper error handling guidance and the broader A10 recommendations support this approach because they separate user communication from internal diagnostic detail. But even when you get that masking right, you still have a serious open question: who is watching the patterns behind those masked failures, and who is deciding whether they signal fraud, automation, or account compromise?

Traditional protection for A10 is still necessary and should be treated as the baseline rule engine. Those rules include catch errors locally, use a global exception handler, fail closed, roll back full transactions, validate inputs strictly, rate limit aggressive clients, centralize logging, and alert on repeated failures. These static controls are valuable because they provide deterministic safety rails, they are auditable, and they reduce obvious exposure such as raw error leakage or unlimited retry storms. The limitation is that static rules can tell you what the application should do when a condition occurs, but they often cannot tell you whether the pattern behind those conditions represents a live fraud campaign, a scripted probe, a compromised account, or a coordinated bot ring.

That gap becomes visible in production very quickly. A rate limit may block the loudest request spike, but it will not always connect the same attacker across multiple devices, identities, IPs, and sessions. A generic error page may stop information leakage to the user, but it does not tell you whether the same device has triggered the same failure twenty times across accounts and geographies. And a support reference ID helps incident handling, but only if someone is correlating that event with device continuity, session behavior, transaction intent, and downstream fraud signals. Static rules are the start of the defense, not the end of it.

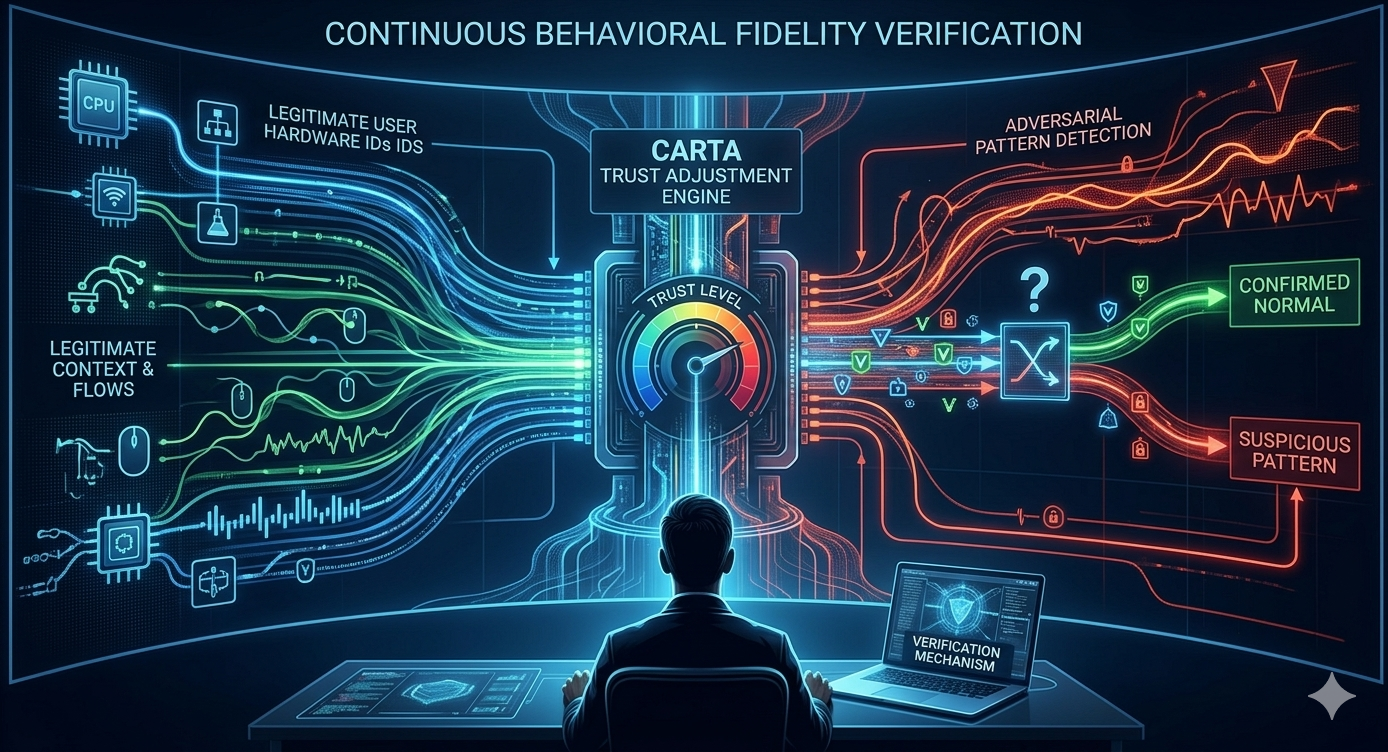

Continuous Monitoring and Continuous Adaptive Risk and Trust Assessment

This is where continuous monitoring and CARTA become the heart of a more modern A10 defense. CrossClassify's CARTA material describes a model where trust is not fixed at login but continuously reassessed based on behavior, device context, location, and risk signals throughout the session. That matters for A10 because many exceptional condition attacks are not obvious at the first request. They emerge as a pattern of retries, parameter anomalies, unusual navigation, suspicious privilege use, impossible travel, session drift, and repeated attempts to reach a sensitive operation after intermediate failures.

In a rule only model, the application may deny one broken request and move on. In a CARTA model, the same request becomes part of a trust story: who sent it, from what device, after what prior actions, in what sequence, with what behavioral deviations, and whether risk should now rise enough to slow, step up, lock, or isolate the session. CrossClassify's own material explicitly positions CARTA as a force multiplier that complements zero trust and traditional controls by adjusting trust in real time rather than only making one binary decision at the edge. For A10, that means exceptional conditions stop being treated only as technical faults and start being treated as security and fraud signals with adaptive response.

Continuous Monitoring of Device Fingerprinting and Behavioral Biometrics

Device fingerprinting should sit at the center of this protection model because exceptional condition abuse is often repetitive even when the attacker rotates visible identifiers. CrossClassify's device fingerprinting pages describe building a persistent device identity from multiple hardware and software attributes and using that identity for continuous monitoring across sessions, even when IPs, browsers, or cookies change. That is highly relevant to A10 because one of the biggest blind spots in exception handling is treating every failed or abnormal event as isolated. When the same underlying device is tied to repeated missing parameter attacks, repeated timeout sequences, suspicious retries, or coordinated multi account failures, the exceptional condition stops looking random and starts looking operationally malicious.
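The correlation step described here can be sketched independently of any particular fingerprinting product. The example assumes each event already carries a `device_id` supplied by some fingerprinting layer; the tuple format, the function name `suspicious_devices`, and both thresholds are hypothetical.

```python
from collections import defaultdict


def suspicious_devices(events, min_accounts: int = 3, min_failures: int = 5):
    """Return device IDs whose errors span many accounts.

    `events` is an iterable of (device_id, account_id, outcome) tuples,
    where outcome is "error" for an exceptional condition and anything
    else for a normal request.
    """
    accounts = defaultdict(set)  # device -> accounts that saw errors
    failures = defaultdict(int)  # device -> total error count
    for device_id, account_id, outcome in events:
        if outcome == "error":
            failures[device_id] += 1
            accounts[device_id].add(account_id)
    return sorted(
        device
        for device in failures
        if failures[device] >= min_failures and len(accounts[device]) >= min_accounts
    )
```

A device that fails five times on one account may simply be a confused user. The same count spread across several accounts is the pattern this filter is meant to surface.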

Behavioral biometrics adds the second half of the answer. CrossClassify's behavioral biometrics material explains that continuous authentication can track keystroke cadence, pointer movement, scroll rhythm, touch interaction, dwell time, field transitions, and navigation rhythm throughout the session. For A10, that means the platform can separate genuine user confusion or accidental retries from scripted abuse, emulator behavior, replayed cadence, or abrupt shifts in intent after a failure. In practical terms, behavioral monitoring turns "the user hit an error" into a richer question: did a trusted human hit a normal error, or did an attacker intentionally generate abnormal states to learn, bypass, or overload the application?

The strongest version of this approach is the combination of both. Device intelligence gives continuity across sessions and identities, while behavioral biometrics gives continuity inside the session itself. CrossClassify's own materials repeatedly frame that combined model as better than isolated controls because device fingerprinting plus behavior plus adaptive risk scoring improve accuracy, reduce false positives, and enable lower friction responses. That is exactly the sort of layered protection A10 needs, because exceptional condition abuse is rarely just a one request anomaly. It is usually a sequence, a pattern, and a signal of deeper abuse.

Necessity of Continuous Monitoring of Device Fingerprinting and Behavioral Biometrics

The digital world is full of legitimate errors, legitimate retries, and legitimate user confusion, which is why A10 is so difficult to defend with static logic alone. Security teams cannot block every abnormal condition without breaking real workflows, but they also cannot trust that every abnormal condition is harmless. That is why continuous monitoring is not a luxury layer for mature teams; it is the practical way to distinguish normal friction from adversarial behavior without drowning in either false positives or missed attacks. Zero Trust Architecture already says trust should never be assumed and must be continually verified, and CARTA extends that principle into active, context aware trust adjustment throughout the session.

Device fingerprinting is necessary because attackers reset surface level identifiers constantly. Behavioral biometrics is necessary because attackers can sometimes spoof or rotate infrastructure but still struggle to reproduce stable human interaction patterns over time. And continuous monitoring is necessary because the risk signal may only become obvious after several small abnormal events are stitched together. In the A10 world, that stitching is often the difference between "an application error occurred" and "a fraud campaign is testing your control boundaries."

This is also where the security value becomes a business value. Application owners need protection that improves resilience, keeps trust decisions accurate after login, and reduces costly manual review without allowing bots, fraud farms, or compromised accounts to explore exceptional paths freely. CrossClassify's positioning across its platform, device fingerprinting solution, behavioral biometrics solution, and its CARTA content all point toward that same model: layered intelligence that keeps watching when static controls have already done what they can. For A10, that is not embellishment. It is the natural next step after the rule checklist.

Conclusion

OWASP A10 Mishandling of Exceptional Conditions is one of the most important additions in the 2025 Top 10 because it forces teams to take abnormal states seriously as security states. The category makes clear that unsafe error handling, fail open logic, state corruption, resource exhaustion, and silent exception swallowing are not "developer cleanup items" but real routes to data exposure, fraud, denial of service, and broken trust. Traditional controls still matter, and every organization should adopt the baseline OWASP guidance around local handling, global backstops, fail closed logic, rollback, limits, validation, logging, and testing. But the gap that remains after those controls is the live question of whether repeated abnormal behavior belongs to a human user, a bot, a compromised session, or a coordinated fraud actor.

That is why the strongest strategic reading of A10 is not just "handle errors better," but "combine disciplined failure design with zero trust, CARTA, device fingerprinting, and behavioral biometrics so the application can continue making safe trust decisions after reality stops following the happy path." For further reading, the most relevant CrossClassify pages are Zero Trust Architecture and Modern AI Cybersecurity, Continuous Adaptive Risk and Trust Assessment, Behavioral Biometrics, and Device Fingerprinting.
