Last Updated on 31 Mar 2026

How to Prevent OWASP A06:2025 Insecure Design


Introduction

OWASP Top 10 remains the most widely used awareness framework for web application security because it gives teams a practical map of the failures that repeatedly lead to real incidents. In the 2025 edition, OWASP says the list is based on data from more than 2.8 million applications, with 589 CWEs reviewed in the dataset and 248 CWEs mapped into the final ten categories. That matters because the list is not just opinion: it is a community-informed, data-informed view of what keeps breaking in production systems.

This article focuses on A06:2025 Insecure Design. OWASP moved it from number four in 2021 to number six in 2025, not because it stopped mattering, but because Security Misconfiguration and Software Supply Chain Failures rose faster. The official A06 page still shows a large footprint: 39 mapped CWEs, a maximum incidence rate of 22.18%, an average incidence rate of 1.86%, 729,882 total occurrences, and 7,647 mapped CVEs. OWASP also states the core point clearly: insecure design is about missing or ineffective control design, especially in business logic and architecture, and a perfect implementation cannot fully rescue a flawed design.

That is why this topic deserves more attention from fraud teams, architects, and product leaders, not just developers. If the login flow, onboarding flow, authorization model, tenant isolation model, or transaction workflow is unsafe by design, attackers can abuse "valid" features instead of exploiting obvious code bugs. In practice, that is where account takeover, fake account creation, bot abuse, workflow abuse, and quiet business logic attacks often begin.

Top 10:2025 List


A01:2025 - Broken Access Control

OWASP keeps this at number one, calling it the most serious application security risk in the 2025 list. The contributed data says 3.73% of tested applications had one or more of the 40 CWEs in this category, which shows how often permission logic still fails in real systems.

A02:2025 - Security Misconfiguration

This category rose from number five in 2021 to number two in 2025. OWASP reports that 3.00% of tested applications had at least one of the 16 CWEs here, reflecting how much modern application behavior now depends on configuration rather than only code.

A03:2025 - Software Supply Chain Failures

OWASP expanded the old vulnerable components concept into a broader software ecosystem category covering dependencies, build systems, and distribution infrastructure. It has relatively little testing data today, but OWASP says it had the highest average exploit and impact scores from CVEs and was heavily prioritized by the community survey.

A04:2025 - Cryptographic Failures

This category dropped from number two to number four, but it remains significant. OWASP says 3.80% of applications in the contributed data had one or more of the 32 CWEs in this category, and these failures often lead directly to sensitive data exposure or system compromise.

A05:2025 - Injection

Injection moved down to number five, but OWASP still describes it as one of the most tested categories, with the greatest number of CVEs associated with its mapped CWEs. The category spans frequent but low-impact issues, such as some cross-site scripting cases, and higher-impact flaws such as SQL injection.

A06:2025 - Insecure Design

OWASP says this category focuses on design and architectural flaws, with more emphasis needed on threat modeling, secure design patterns, and reference architectures. It explicitly includes business logic flaws and unsafe or missing control design, which is why it cannot be solved by code quality alone.

A07:2025 - Authentication Failures

This keeps the number seven position, with a slight rename from the previous edition. OWASP notes that standardized frameworks appear to be helping somewhat, but the category still spans 36 CWEs and remains central to account security.

A08:2025 - Software or Data Integrity Failures

This remains at number eight. OWASP frames it as the failure to maintain trust boundaries and verify the integrity of software, code, and data artifacts, which makes it especially relevant for update chains, package trust, and internal system assumptions.

A09:2025 - Security Logging and Alerting Failures

This stays at number nine, with the name adjusted to emphasize alerting, not only logging. OWASP's point is practical: logs without actionable alerting have limited value when teams need to detect and respond to real incidents.

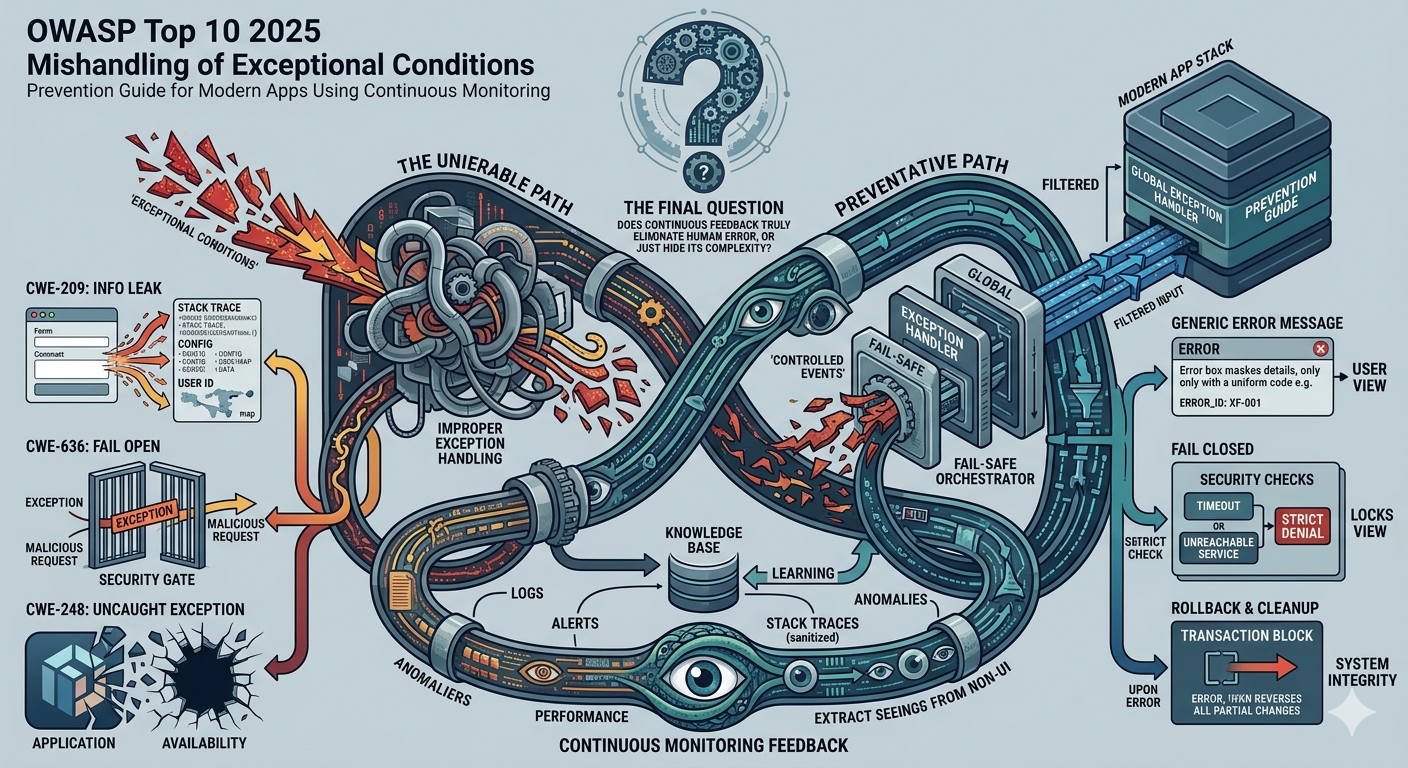

A10:2025 - Mishandling of Exceptional Conditions

This is a new category for 2025. OWASP says it covers 24 CWEs related to improper error handling, failing open, logical failures, and abnormal system conditions that are often overlooked until they become exploitable.

Definition and Causes of Insecure Design

OWASP defines Insecure Design as a broad category of weaknesses that reflect missing or ineffective control design. The key distinction is that the failure happens earlier than coding mistakes. It starts when teams fail to define safe states, unsafe states, abuse cases, tenant boundaries, decision rules, failure modes, or appropriate friction for high-risk actions. OWASP also stresses that the category includes business logic flaws, including unexpected or unwanted state changes inside an application.

That definition matters because many teams still treat architecture as neutral and implementation as the real security layer. OWASP says the industry needs to move beyond "shift left" in coding and invest more in pre-code work, especially requirements writing, application design, and secure-by-design practices. Its mapped CWE list shows how wide the category is, spanning unprotected storage of credentials, improper privilege management, dangerous file upload design, trust boundary violations, weak credential protection, client-side enforcement mistakes, and improper workflow enforcement. In other words, insecure design is not one bug type but a family of preventable architectural mistakes.

The causes are usually organizational as much as technical. Teams ship features before modeling misuse cases, architects define flows without enough cyber expertise, product teams optimize for growth without enough fraud resistance, and engineering teams inherit assumptions that were never validated. When that happens, the system may still pass code review, unit tests, and even some security scans, while remaining unsafe in the only place that matters: real-world operation under hostile conditions.

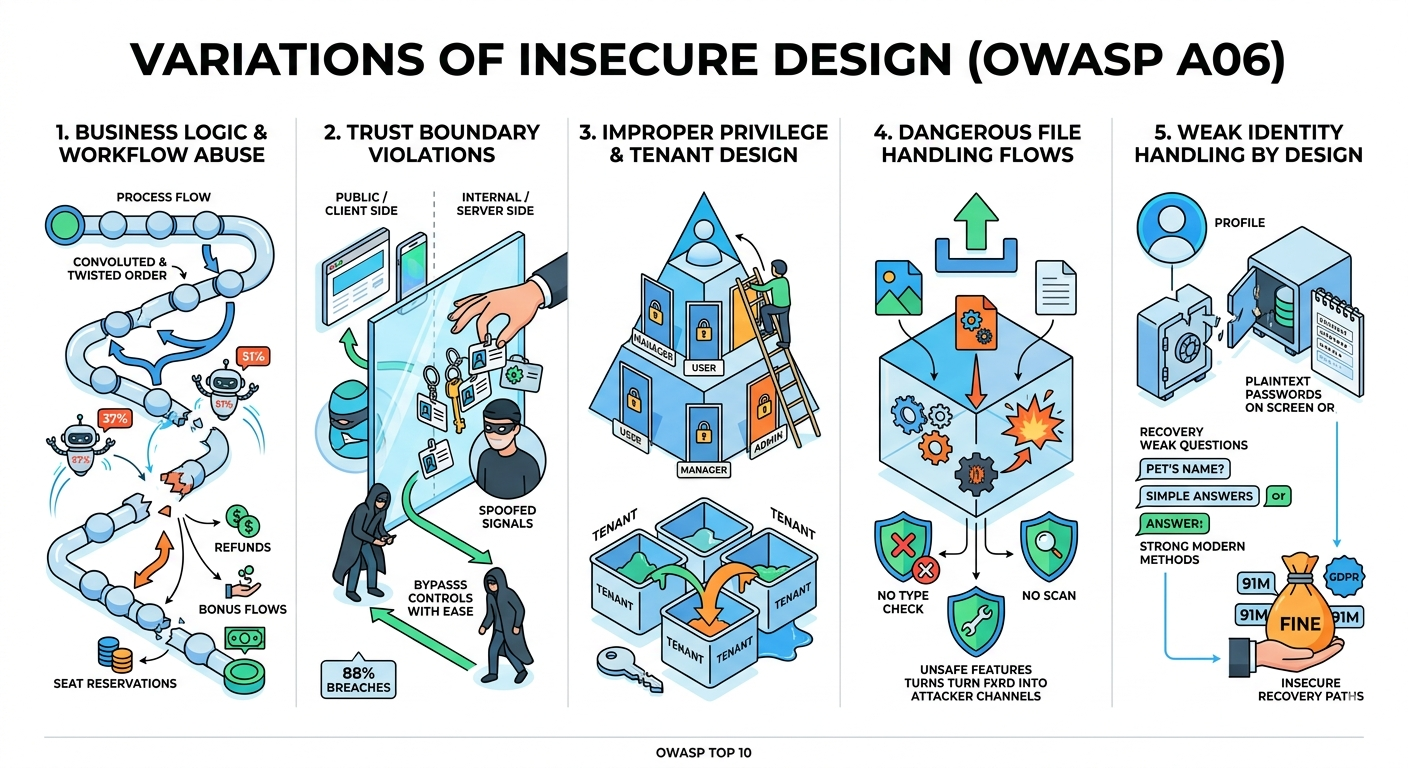

Variations of Insecure Design

1. Workflow abuse

One major form of insecure design is workflow abuse, where the application allows steps to be skipped, repeated, replayed, or executed in the wrong order. OWASP's A06 mapping explicitly includes CWE-841 Improper Enforcement of Behavioral Workflow, and the separate OWASP Business Logic Abuse project is built around state transitions, workflow bypass, and missing transition validation. This is exactly where attackers monetize "legitimate" features, such as refunds, bonus flows, seat reservations, stock release events, or payout approval paths.
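One way to make a workflow's safe and unsafe states explicit is to declare every allowed transition on the server and deny everything else. The Python sketch below assumes a hypothetical order lifecycle; `OrderState`, `ALLOWED_TRANSITIONS`, and `transition` are illustrative names, not part of any OWASP material. It shows how skipping payment or replaying a refund becomes unreachable rather than merely unlikely.

```python
from enum import Enum


class OrderState(Enum):
    CREATED = "created"
    PAID = "paid"
    SHIPPED = "shipped"
    REFUNDED = "refunded"


# Hypothetical lifecycle: allowed transitions are declared once, server-side.
# Anything not listed here is denied.
ALLOWED_TRANSITIONS = {
    OrderState.CREATED: {OrderState.PAID},
    OrderState.PAID: {OrderState.SHIPPED, OrderState.REFUNDED},
    OrderState.SHIPPED: {OrderState.REFUNDED},
    OrderState.REFUNDED: set(),  # terminal state: no repeated or replayed refunds
}


class WorkflowViolation(Exception):
    """Raised when a request tries to skip, repeat, or reorder a workflow step."""


def transition(current: OrderState, requested: OrderState) -> OrderState:
    # Deny by default: only explicitly modeled transitions are reachable.
    if requested not in ALLOWED_TRANSITIONS[current]:
        raise WorkflowViolation(f"{current.value} -> {requested.value} is not allowed")
    return requested
```

Calling `transition(OrderState.CREATED, OrderState.SHIPPED)` raises `WorkflowViolation` because payment was skipped, and a second refund fails because `REFUNDED` is terminal. The protection holds only as long as every endpoint routes state changes through this one function, which is itself a design decision.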

The scale of this problem is reinforced by automation data. Imperva's 2025 Bad Bot Report says bad bots now account for 37% of all internet traffic, and automated traffic overall reached 51% of all web traffic, with attackers increasingly exploiting APIs and business logic to fuel fraud. That means insecure workflow design is no longer a niche issue. It is a mainstream attack surface.

2. Trust boundary violations

Another variation is trusting the wrong side of the system. OWASP maps CWE-501 Trust Boundary Violation, CWE-807 Reliance on Untrusted Inputs in a Security Decision, and CWE-602 Client-Side Enforcement of Server-Side Security into A06, which means the design itself may wrongly trust the browser, the mobile app, a partner system, or an internal signal that can be spoofed.
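A common remedy for CWE-602 and CWE-807 style designs is to treat every price, total, or approval flag sent by the client as untrusted and to recompute it on the server. The sketch below assumes a hypothetical in-memory catalog (`CATALOG_PRICES`), quantity limit, and request shape; a real service would load prices from a trusted store.

```python
from decimal import Decimal

# Hypothetical server-side catalog; a real system would read this from a trusted store.
CATALOG_PRICES = {"sku-basic": Decimal("10.00"), "sku-pro": Decimal("49.00")}
MAX_QUANTITY = 100


def price_order(client_payload: dict) -> Decimal:
    """Compute the order total on the server, treating the client payload as untrusted.

    Client fields such as 'total', 'unit_price', or 'discount_approved' are ignored:
    the browser or mobile app sits on the wrong side of the trust boundary.
    """
    total = Decimal("0")
    for line in client_payload.get("items", []):
        sku = line.get("sku")
        qty = line.get("quantity")
        if sku not in CATALOG_PRICES:
            raise ValueError(f"unknown sku: {sku!r}")
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(qty, bool) or not isinstance(qty, int) or not 1 <= qty <= MAX_QUANTITY:
            raise ValueError(f"implausible quantity: {qty!r}")
        total += CATALOG_PRICES[sku] * qty
    return total
```

A payload claiming `"total": "0.02"` for two `sku-pro` items is still priced at 98.00, because only the SKU and quantity cross the boundary, and both are validated.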

This becomes especially dangerous in authentication and session flows. Verizon's 2025 DBIR says that in the Basic Web Application Attacks pattern, about 88% of breaches involved the use of stolen credentials, which shows how often attackers succeed by reusing valid-looking identity artifacts instead of breaking cryptography or source code. When a system trusts a session, token, device state, or workflow flag too easily, insecure design turns stolen identity into business damage.

3. Improper privilege and tenant model design

OWASP also maps CWE-269 Improper Privilege Management and related privilege assignment weaknesses into A06. This variation appears when roles are poorly modeled, privilege escalation paths are not constrained, or multi-tenant systems rely on fragile assumptions instead of hard separation by design. In practice, these errors often surface later as broken access control incidents, which helps explain why A01 remains number one in the OWASP Top 10:2025 list.

The architectural lesson is simple. If permission design is flawed, no amount of late stage patching creates a truly safe authorization model. Developers can harden endpoints, but they cannot invent a correct privilege architecture after the product logic was designed around unsafe assumptions.
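A minimal sketch of privilege design that constrains escalation paths, assuming a hypothetical four-level role hierarchy and illustrative permission names. Permissions are deny-by-default, and even an administrator cannot grant a role above their own.

```python
from enum import IntEnum


class Role(IntEnum):
    VIEWER = 1
    EDITOR = 2
    ADMIN = 3
    OWNER = 4


# Hypothetical permission matrix: any (role, action) pair not listed here is denied.
PERMISSIONS = {
    Role.VIEWER: {"report:read"},
    Role.EDITOR: {"report:read", "report:write"},
    Role.ADMIN: {"report:read", "report:write", "user:assign_role"},
    Role.OWNER: {"report:read", "report:write", "user:assign_role", "tenant:delete"},
}


def can(role: Role, action: str) -> bool:
    """Deny by default: unknown roles or actions get no access."""
    return action in PERMISSIONS.get(role, set())


def assign_role(actor: Role, new_role: Role) -> Role:
    """Return the role to assign, refusing unconstrained escalation paths."""
    if not can(actor, "user:assign_role"):
        raise PermissionError("actor may not assign roles")
    # Nobody can grant a role above their own level, so an ADMIN cannot mint an OWNER.
    if new_role > actor:
        raise PermissionError("cannot grant a role above the actor's own")
    return new_role
```

The point is that the escalation rule lives in the model itself, so an endpoint cannot accidentally offer a path from ADMIN to OWNER.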

4. Dangerous file and content handling flows

OWASP includes CWE-434 Unrestricted Upload of File with Dangerous Type and related path and filename control weaknesses in A06. That is a strong reminder that "file upload security" is not only about antivirus, extension filters, or implementation details. It is also about whether the business flow should ever have allowed that object, that format, that destination, or that post-upload behavior in the first place.

This kind of insecure design becomes severe in onboarding, support portals, KYC flows, and admin tools. If the workflow lacks content isolation, file type constraints, execution segregation, or review gates, a seemingly innocent feature turns into an attacker controlled input channel. The design flaw is the unsafe capability itself, not only the parser that processes it.
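As one illustrative layer of a safer upload design, the sketch below decides the file type from its leading bytes, caps the size, and generates the storage name on the server so the user never controls the path or extension. The allowed formats and size limit are hypothetical policy choices for a KYC-style flow.

```python
import secrets

# Hypothetical policy: only these formats are allowed in this flow at all.
ALLOWED_SIGNATURES = {
    b"%PDF-": ("application/pdf", ".pdf"),
    b"\x89PNG\r\n\x1a\n": ("image/png", ".png"),
    b"\xff\xd8\xff": ("image/jpeg", ".jpg"),
}
MAX_BYTES = 5 * 1024 * 1024  # hypothetical 5 MiB limit


def accept_upload(data: bytes) -> tuple[str, str]:
    """Return (content_type, storage_name) or raise ValueError.

    The client-supplied filename and Content-Type are deliberately ignored: the type
    is decided from the file's leading bytes, and the stored name is server-generated.
    """
    if not data or len(data) > MAX_BYTES:
        raise ValueError("empty or oversized upload")
    for signature, (content_type, extension) in ALLOWED_SIGNATURES.items():
        if data.startswith(signature):
            return content_type, secrets.token_hex(16) + extension
    raise ValueError("file type not allowed by policy")
```

Signature checks alone do not stop polyglot or malformed files, so this complements, rather than replaces, content isolation, non-executable storage, and review gates.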

5. Weak credential and identity handling by design

OWASP's mapped list for A06 includes CWE-256 Unprotected Storage of Credentials and CWE-522 Insufficiently Protected Credentials. That tells us insecure design includes identity storage and recovery decisions, not only flashy business logic flaws. OWASP's own example scenario for A06 warns against knowledge-based recovery questions because they are weak evidence of identity and should be replaced with more secure designs.
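For the credential storage side of this variation, a minimal sketch using Python's standard-library `hashlib.scrypt` shows the design intent: store only a salted, deliberately slow hash plus its parameters, never the password itself. The cost parameters and the `scrypt$...` storage format are illustrative assumptions; choose real parameters from current guidance and your hardware budget. `hashlib.scrypt` requires a Python build linked against OpenSSL 1.1 or later.

```python
import hashlib
import hmac
import secrets

# Illustrative scrypt cost parameters (about 16 MiB of memory per hash).
SCRYPT_N, SCRYPT_R, SCRYPT_P = 2**14, 8, 1


def hash_password(password: str) -> str:
    salt = secrets.token_bytes(16)  # unique salt per credential
    digest = hashlib.scrypt(password.encode(), salt=salt, n=SCRYPT_N, r=SCRYPT_R, p=SCRYPT_P)
    # Store the parameters and salt with the hash so costs can be raised later.
    return f"scrypt${SCRYPT_N}${SCRYPT_R}${SCRYPT_P}${salt.hex()}${digest.hex()}"


def verify_password(password: str, stored: str) -> bool:
    _, n, r, p, salt_hex, digest_hex = stored.split("$")
    candidate = hashlib.scrypt(
        password.encode(), salt=bytes.fromhex(salt_hex), n=int(n), r=int(r), p=int(p)
    )
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(candidate, bytes.fromhex(digest_hex))
```

Because each record carries its own salt, two users with the same password get different stored values, and a database dump does not reveal plaintext as in the Meta case below.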

The regulatory impact of this variation is real. In September 2024, Ireland's Data Protection Commission fined Meta €91 million after finding that certain user passwords had been stored in plaintext on internal systems, alongside GDPR findings on appropriate security measures. That is a reminder that identity design choices can become both a security incident and a compliance event.

Real Examples of Insecure Design

A useful smaller-scale example is the 2018 Coinbase Ethereum bug. Public reporting and the Coinbase HackerOne report described a flaw that could let a user manipulate account balances and effectively give themselves unlimited Ether under certain conditions. Even when an issue like this is caught before it causes catastrophic loss, it illustrates the essence of insecure design: the business rule or state model allows an impossible outcome to become reachable.

The larger, more sobering example is Equifax. Some online retellings reduce Equifax to a single coding bug, but the FTC's public settlement record points to something broader: failure to patch a critical vulnerability, plaintext administrative credentials, weak segmentation, and insufficient intrusion detection, all contributing to a breach that affected about 147 million people. Equifax agreed to pay at least $575 million, and potentially up to $700 million, which shows how design and governance failures can amplify one technical weakness into one of the largest data breach settlements in history.

Equifax is especially important for an A06 discussion because it shows that architecture and control design are inseparable from security outcomes. Even though the initial entry point is usually described as an exploited vulnerability, the blast radius depends on how credentials are stored, how networks are segmented, how privilege is modeled, and how monitoring works. Insecure design is often the reason a bad day becomes a company-defining disaster.

Regulatory Compliance of Insecure Design

Insecure Design has a natural compliance connection because both security law and security standards increasingly expect organizations to think about controls before deployment, not only after an incident. Under GDPR, Article 25 requires controllers to implement appropriate technical and organizational measures at the time of determining the means of processing and during processing itself, so that data protection principles and safeguards are integrated into the system by design and by default. The EDPB's guidance on Article 25 reinforces that this is a real obligation, not a slogan.

For security teams, that means insecure design is not just an OWASP concern. It can also be a GDPR concern where architecture, defaults, data exposure, or credential handling fail to reflect privacy and security by design. The Meta case is one concrete example: the Irish DPC announced a €91 million fine in 2024 after finding plaintext password storage and insufficient security measures under GDPR provisions tied to appropriate protection.

PCI DSS creates a similar pressure in payment environments, although the enforcement style is different. PCI SSC says PCI DSS provides a baseline of technical and operational requirements for entities that store, process, or transmit payment card data, and PCI's Secure Software Lifecycle standard explicitly says security should be integrated throughout the lifecycle so software is secure by design and able to withstand attack. In other words, payment security also expects design time discipline, not only patch time discipline.

Public PCI DSS fine examples are harder to document in the same clean way as GDPR regulator fines because PCI DSS operates through a payment ecosystem, merchant agreements, scans, assessments, and validation programs rather than a single public regulator announcement model. Still, reputable industry guidance such as Stripe's PCI guide notes that organizations can face significant penalties, fines, and compliance costs when they do not meet PCI DSS obligations. The more accurate distinction is this: GDPR makes design obligations explicit in law, while PCI DSS makes them explicit in operational control expectations.

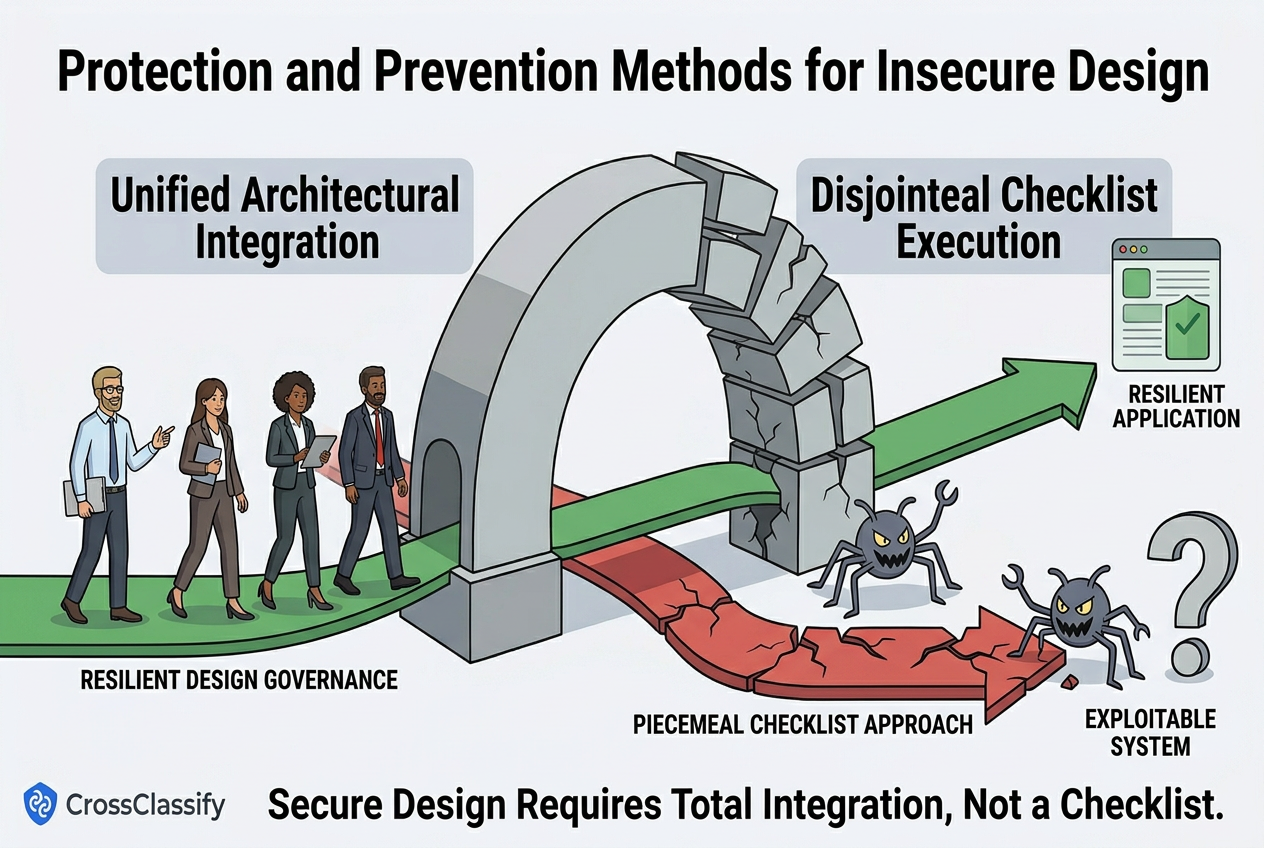

Protection and Prevention Methods for Insecure Design

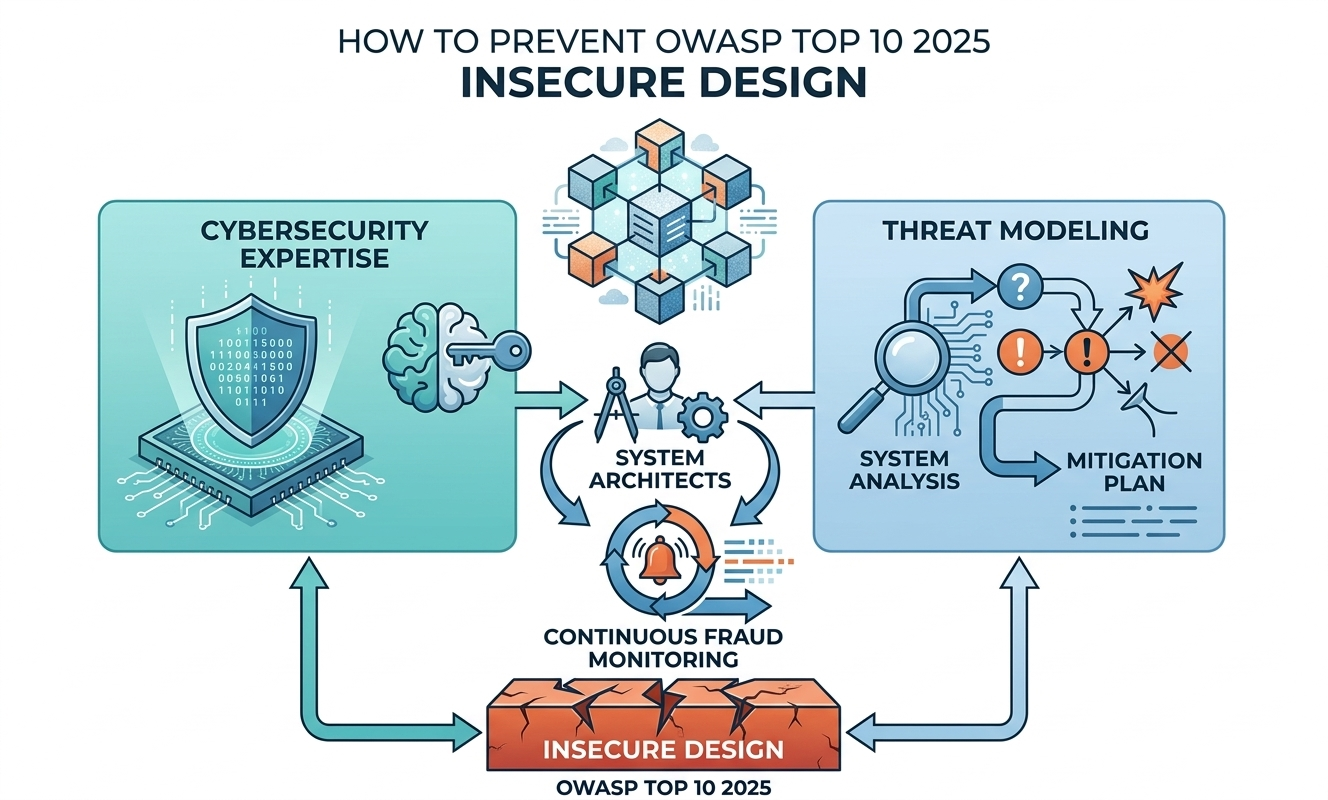

1. Establish and use a secure development lifecycle with AppSec professionals

This is OWASP's first recommendation, and it is foundational because insecure design starts before coding. The people who should apply it are engineering leaders, product owners, architects, and AppSec specialists together, not only developers at sprint end. It is highly effective because it moves security decisions into planning, architecture, and change governance, though the drawback is that it needs budget, maturity, and cross-functional discipline.

2. Establish and use a library of secure design patterns or paved-road components

Secure patterns reduce the chance that every team invents its own authentication flow, file upload model, tenant isolation approach, or approval workflow. This should be driven by platform teams, principal engineers, and security architects, because consistency is the real value. It is very effective in larger organizations, but the downside is that patterns can become stale if they are not reviewed against new abuse tactics and business changes.

3. Use threat modeling for authentication, access control, business logic, and key flows

OWASP explicitly calls out these areas because they are where insecure design hurts most. Threat modeling should be applied by architects, senior engineers, fraud teams, and security practitioners whenever a high impact workflow is created or changed. It is one of the highest leverage practices available, but it works only when it is tied to real system decisions rather than performed as a checklist ritual.
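One way to keep threat modeling tied to real system decisions is to record it as data that can be checked, so every identified threat must point to a mitigation and a test. The sketch below is hypothetical: the `Threat` record, its fields, and the use of STRIDE category labels are assumptions for illustration, not an OWASP-prescribed format.

```python
from dataclasses import dataclass, field


@dataclass
class Threat:
    flow: str          # e.g. "account recovery"
    category: str      # e.g. a STRIDE label such as "Spoofing"
    description: str
    mitigations: list[str] = field(default_factory=list)
    tests: list[str] = field(default_factory=list)  # names of tests proving the mitigation


def open_gaps(threats: list[Threat]) -> list[str]:
    """List threats with no agreed mitigation, or with a mitigation that no test covers."""
    gaps = []
    for t in threats:
        if not t.mitigations:
            gaps.append(f"[{t.flow}] no mitigation: {t.description}")
        elif not t.tests:
            gaps.append(f"[{t.flow}] untested mitigation: {t.description}")
    return gaps
```

Running `open_gaps` in a design review or CI job turns the threat model into a list of concrete open items instead of a document that is filed and forgotten.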

4. Use threat modeling as an educational tool to generate a security mindset

This recommendation is easy to underestimate. When teams repeatedly reason about misuse cases, attacker goals, and unexpected state transitions, they become better at making secure design decisions even outside formal reviews. It is effective culturally and strategically, although the limitation is that education alone does not replace formal controls, architecture review, or validation.

5. Integrate security language and controls into user stories

If security is absent from the story, it is usually absent from the build. Product managers, analysts, and engineering leads should embed abuse cases, trust boundaries, validation conditions, and fraud resistance requirements into story acceptance criteria from the start. This method is highly practical and low cost, but it only works when teams are trained enough to write stories with meaningful security criteria rather than vague "secure this later" placeholders.

6. Integrate plausibility checks at each tier of the application

OWASP recommends plausibility checks from frontend to backend, which is essentially a design principle of not trusting one control layer alone. Architects and engineers should enforce these checks in the client, API, service, workflow, and data layers so impossible or suspicious transitions are blocked early. It is effective because it reduces single point failures, though the downside is that badly designed duplicate checks can create inconsistency if ownership is unclear.
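The sketch below shows what a service-tier plausibility check for a money transfer might look like. All fields and thresholds are hypothetical; the point is that balance, beneficiary age, and velocity are evaluated from server-side state, independently of whatever the frontend already validated.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class TransferRequest:
    account_balance: int          # minor units (e.g. cents), loaded server-side
    amount: int                   # minor units, taken from the request
    beneficiary_created_at: datetime
    transfers_last_hour: int      # counted server-side


def plausibility_problems(req: TransferRequest, now: datetime) -> list[str]:
    """Return reasons the transfer is implausible; an empty list means it may proceed.

    The thresholds below are hypothetical examples, not recommended values.
    """
    problems = []
    if req.amount <= 0:
        problems.append("non-positive amount")
    if req.amount > req.account_balance:
        problems.append("amount exceeds balance")
    if now - req.beneficiary_created_at < timedelta(hours=24) and req.amount > 100_000:
        problems.append("large transfer to a beneficiary added in the last 24h")
    if req.transfers_last_hour >= 10:
        problems.append("transfer velocity too high")
    return problems
```

Returning every reason, rather than stopping at the first, gives fraud and support teams a clearer picture of why a transition was blocked.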

7. Write unit and integration tests against the threat model

This converts architecture intent into repeatable verification. QA, developers, and AppSec teams should test both use cases and misuse cases, especially around privilege changes, onboarding abuse, account recovery, file handling, and transaction workflows. It is very effective for regression prevention, but it depends on whether the threat model was detailed enough in the first place.
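A small example of turning misuse cases into regression tests with the standard `unittest` module. The `refund` function and its rules are hypothetical stand-ins for a real transaction workflow; each test maps to one use case or misuse case from a threat model.

```python
import unittest


class RefundError(Exception):
    pass


def refund(order_total: int, already_refunded: int, requested: int) -> int:
    """Return the new refunded total; all amounts are in minor units (e.g. cents)."""
    if requested <= 0:
        raise RefundError("refund must be positive")
    if already_refunded + requested > order_total:
        raise RefundError("refund would exceed the amount paid")
    return already_refunded + requested


class RefundThreatModelTests(unittest.TestCase):
    # Use case: a normal partial refund succeeds.
    def test_partial_refund(self):
        self.assertEqual(refund(5000, 0, 2000), 2000)

    # Misuse case: a negative amount must not be accepted as a refund.
    def test_negative_refund_rejected(self):
        with self.assertRaises(RefundError):
            refund(5000, 0, -100)

    # Misuse case: repeated refunds cannot add up to more than was paid.
    def test_replayed_refund_rejected(self):
        with self.assertRaises(RefundError):
            refund(5000, 4000, 2000)
```

Run the file with `python -m unittest`; if a later change lets a replayed refund through, the test suite fails before the ledger does.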

8. Segregate tier layers on the system and network layers

Layer segregation reduces the blast radius when a feature or service is abused. This should be applied by cloud architects, infrastructure engineers, and security teams, especially in payment, healthcare, fintech, and multi tenant systems. It is highly effective for containment, but it increases architectural complexity and must be maintained as the system evolves.

9. Segregate tenants robustly by design throughout all tiers

This is critical for SaaS, marketplaces, B2B platforms, and any system with multiple customers or account scopes. The work belongs to solution architects, backend leads, database designers, and security reviewers, because tenant isolation is not a UI choice, it is a system property. It is one of the most important controls against cross tenant exposure and privilege abuse, but retrofitting it late is expensive and sometimes nearly impossible.
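At the data tier, one pattern for building tenant isolation in, rather than bolting it on, is a data-access object bound to a single tenant when it is created, so individual queries cannot omit or override the tenant filter. The sketch assumes a hypothetical `invoices` table with a `tenant_id` column and uses `sqlite3` only to stay self-contained; databases such as PostgreSQL also offer row-level security policies that can enforce the same boundary beneath the application.

```python
import sqlite3


class TenantScopedInvoices:
    """Data access object bound to one tenant at construction time.

    Callers cannot pass a tenant_id per query, so a forgotten WHERE clause or a
    tampered request parameter cannot read another tenant's rows.
    """

    def __init__(self, conn: sqlite3.Connection, tenant_id: str):
        self._conn = conn
        self._tenant_id = tenant_id

    def get(self, invoice_id: int):
        # The tenant filter is always applied, even if the invoice id is guessed.
        return self._conn.execute(
            "SELECT id, amount FROM invoices WHERE id = ? AND tenant_id = ?",
            (invoice_id, self._tenant_id),
        ).fetchone()

    def create(self, amount: int) -> int:
        cur = self._conn.execute(
            "INSERT INTO invoices (tenant_id, amount) VALUES (?, ?)",
            (self._tenant_id, amount),
        )
        return cur.lastrowid
```

In this design the tenant identifier should come from the authenticated session, never from a request parameter, so a second tenant asking for the first tenant's invoice id simply gets `None`.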

Protection Tools for Insecure Design

The tool family OWASP lists here is DAST, or Dynamic Application Security Testing. OWASP describes DAST as black box testing against a running application, useful for finding issues such as input validation problems, authentication issues, and server configuration mistakes without needing source code access. That makes DAST a good verification tool once the system is live or close to live.

But DAST is not enough on its own for A06. OWASP's own Developer Guide says manual assessment is still needed because business logic errors, race conditions, and some zero day style weaknesses can slip past automated tools. So DAST is helpful as part of an Insecure Design program, but it is better viewed as a runtime verification layer, not as a substitute for secure architecture, threat modeling, and design review.

A mature stack for A06 usually combines design review, threat modeling, secure patterns, unit and integration tests, DAST, manual adversarial testing, fraud telemetry, and production monitoring. That last part matters because insecure design often reveals itself through behavior, not only through CVEs or scanner output. A system may look healthy to scanners while being quietly abused by attackers following a workflow you forgot to defend.

The Gap That Still Remains After Secure by Design

This is the uncomfortable truth about Insecure Design. Unlike many other OWASP categories, A06 is tightly bound to business logic, workflow assumptions, identity confidence, tenant boundaries, and operational context. That means two systems built with equally clean code can have very different security outcomes because one was designed with adversarial thinking and the other was not.

This is also why architects and system designers must have some real cybersecurity competence. A weak architectural choice can survive every clean code refactor and every elegant implementation pattern because the flaw sits above the code, inside the logic of the product itself. The best developer in the world cannot fully erase a design-level permission mistake, a broken trust boundary, or a missing anti-abuse control that was never specified.

At the same time, even strong design practices do not eliminate all risk. Threat models age, business workflows change, fraud rings adapt, bot operators learn, stolen credentials circulate, and "valid" users behave differently when they are compromised. So the real gap is this: secure by design reduces the chance of creating exploitable logic, but continuous monitoring is still needed to detect when reality diverges from the assumptions built into that design.

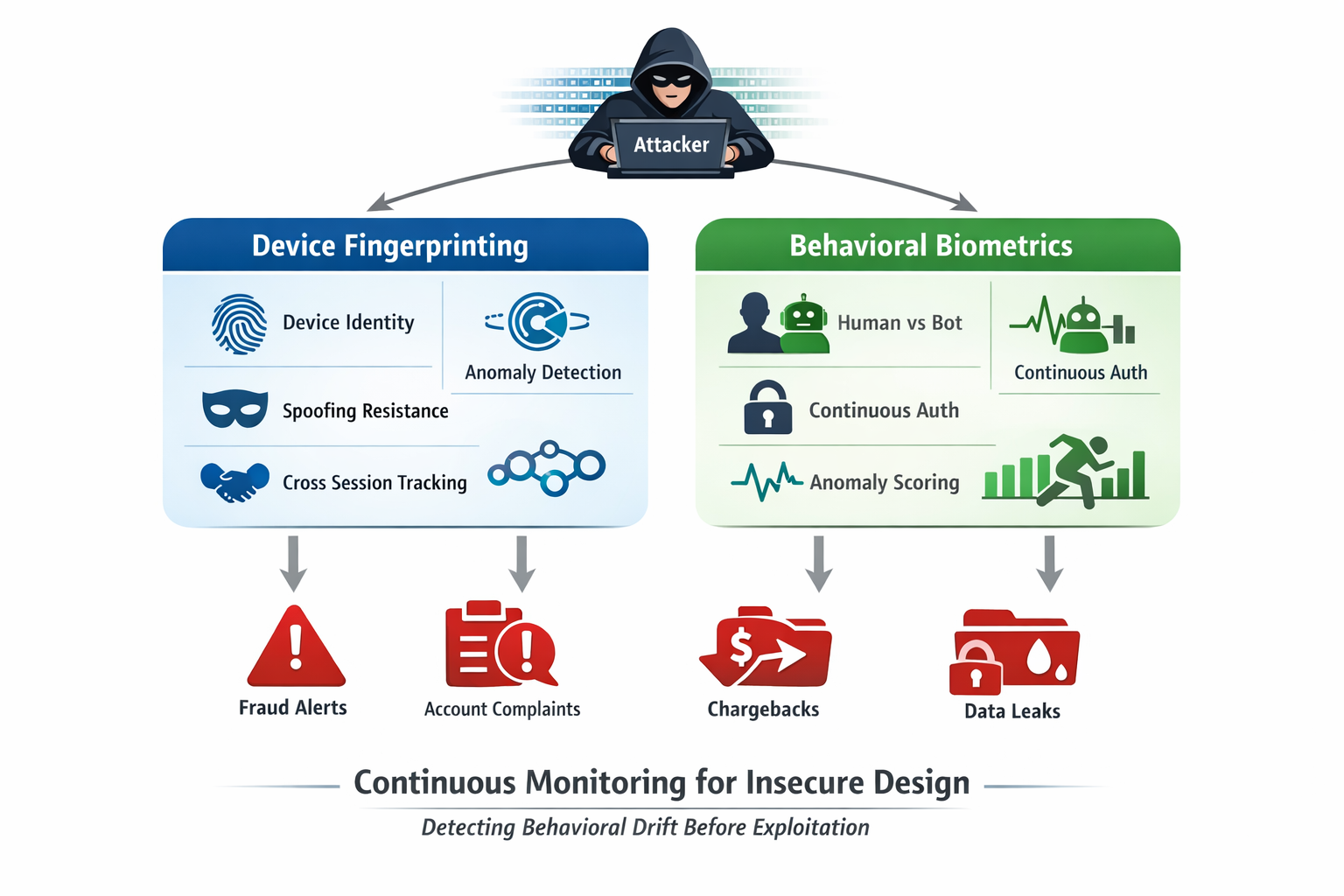

Continuous Monitoring with Device Fingerprinting and Behavioral Biometrics

For A06, device fingerprinting is powerful because it observes continuity that the original design may have missed. On the CrossClassify device fingerprint page, the platform is described as continuously monitoring hardware and software attributes to build a persistent device identity, helping detect returning fraudsters, spoofing attempts, session anomalies, multi account abuse, and suspicious device changes across sessions. That is valuable for insecure design because many design flaws are exploited through repeated workflow testing, credential stuffing, synthetic identities, or account reuse patterns that only become obvious over time.

This does not mean device fingerprinting magically fixes insecure design. It means it gives the application owner a strong runtime signal when abuse begins to cluster around certain devices, sessions, regions, or linked identities. In practice, that makes it a strong compensating and detective control for account takeover protection, account opening protection, bot and abuse protection, and fraud analysis driven by device fingerprinting.
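To illustrate the cross-session correlation idea in general terms (this is not CrossClassify's method), the sketch below hashes a canonical set of hypothetical device attributes into an identifier and flags a device once it has been linked to more accounts than a chosen limit. Real fingerprinting uses far richer signals and must cope with spoofing and attribute drift, which this example ignores.

```python
import hashlib
import json
from collections import defaultdict

# Hypothetical attribute names; real products collect richer, harder-to-spoof signals.
STABLE_ATTRIBUTES = ("platform", "gpu_renderer", "screen", "timezone", "languages")


def device_id(attributes: dict) -> str:
    """Hash a canonical view of the stable attributes into a device identifier."""
    stable = {k: attributes.get(k) for k in STABLE_ATTRIBUTES}
    canonical = json.dumps(stable, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode()).hexdigest()


class DeviceAccountLinks:
    """Track which accounts appear on each device across sessions."""

    def __init__(self, max_accounts_per_device: int = 3):
        self._accounts = defaultdict(set)
        self._limit = max_accounts_per_device

    def observe(self, attributes: dict, account_id: str) -> bool:
        """Record a session; return True if the device now looks like multi-account abuse."""
        did = device_id(attributes)
        self._accounts[did].add(account_id)
        return len(self._accounts[did]) > self._limit
```

Volatile values such as the IP address are deliberately left out of the identifier, so rotating proxies alone does not create a "new" device.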

Behavioral biometrics as continuous confidence checking

Behavioral biometrics addresses a different but complementary question: not only which device this is, but whether the session still behaves like the legitimate user or a genuine human. CrossClassify's behavioral biometrics page emphasizes continuous authentication, passive anomaly detection, and the ability to expose bots, mules, and stolen credential misuse without imposing extra friction on every user. That aligns well with A06 because insecure design often becomes visible as suspicious timing, impossible interaction patterns, replay style actions, odd navigation sequences, or abrupt behavior changes during sensitive steps.

This is especially useful in the gray zone where classic security controls struggle. If the attacker has valid credentials, a valid session token, or a convincing device disguise, static checks may still pass. Behavioral biometrics helps restore confidence by continuously measuring how the user moves, types, scrolls, sequences actions, and interacts with the workflow over time.
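As a deliberately simplified illustration of the behavioral side, the sketch below compares a session's keystroke intervals with a user's historical baseline and also flags timing that is too uniform to be human. The single feature and the thresholds are assumptions for illustration; production behavioral biometrics models many more signals.

```python
from statistics import mean, stdev


def session_flags(
    baseline_ms: list[float],
    session_ms: list[float],
    z_threshold: float = 3.0,
    min_jitter_ms: float = 5.0,
) -> list[str]:
    """Compare a session's inter-key intervals (milliseconds) with the user's baseline.

    Returns human-readable flags; an empty list means nothing unusual was found.
    """
    if len(baseline_ms) < 2 or len(session_ms) < 2:
        raise ValueError("need at least two baseline and two session intervals")
    mu, sigma = mean(baseline_ms), stdev(baseline_ms)
    session_mean = mean(session_ms)
    # Distance of the session's average rhythm from the baseline, in baseline std devs.
    if sigma > 0:
        z = abs(session_mean - mu) / sigma
    else:
        z = 0.0 if session_mean == mu else float("inf")
    flags = []
    if z > z_threshold:
        flags.append("typing rhythm differs from this user's baseline")
    # Scripts often replay keystrokes with near-identical spacing.
    if stdev(session_ms) < min_jitter_ms:
        flags.append("timing too uniform for a human typist")
    return flags
```

A session that types at a human pace close to the user's usual rhythm raises no flags, while a script sending keys every 40 ms raises both.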

Why the combination matters

Device fingerprinting tells you whether the technical identity of the session is stable, suspicious, reused, or evasive. Behavioral biometrics tells you whether the human interaction pattern looks authentic, automated, stolen, or abnormal. Combined, they create a much stronger monitoring fabric for the exact classes of fraud that insecure design often enables, including account takeover, account opening abuse, credential stuffing, fake referrals, scripted onboarding, inventory or booking abuse, and bot driven workflow manipulation.
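A hypothetical way to combine the two signal families into a runtime decision is a simple risk score that escalates from allow, to step-up authentication, to block, and weighs sensitive actions more heavily. The field names, weights, and thresholds below are placeholders, not tuned values or any vendor's scoring model.

```python
from dataclasses import dataclass


@dataclass
class SessionSignals:
    new_device_for_account: bool   # from device fingerprinting
    accounts_on_device: int        # from cross-session device correlation
    typing_anomaly_z: float        # from behavioral biometrics
    action_is_sensitive: bool      # e.g. payout change, password reset, beneficiary add


def decide(s: SessionSignals) -> str:
    """Map device and behavioral signals to 'allow', 'step_up', or 'block'.

    Weights and thresholds are hypothetical placeholders to be tuned on real data.
    """
    risk = 0.0
    if s.new_device_for_account:
        risk += 0.3
    if s.accounts_on_device > 3:
        risk += 0.4
    risk += min(s.typing_anomaly_z / 10, 0.4)  # cap the behavioral contribution
    if s.action_is_sensitive:
        risk *= 1.5
    if risk >= 0.9:
        return "block"
    if risk >= 0.4:
        return "step_up"
    return "allow"
```

Neither signal alone decides the outcome here: a new device on an ordinary page is allowed, the same device attempting a sensitive action is challenged, and a shared device with scripted typing is blocked.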

Necessity of Continuous Monitoring of Device Fingerprinting and Behavioral Biometrics for Insecure Design

Continuous monitoring is necessary for A06 because insecure design frequently manifests as behavioral drift, not immediate scanner findings. A flawed workflow might look normal for months until a fraud ring learns how to chain steps, rotate devices, mimic users, and replay business actions at scale. By the time the issue appears in tickets, chargebacks, account complaints, or leaked data, the attacker already understands your process better than your dashboard does.

That is why CrossClassify's model is strategically relevant here. Its device fingerprinting layer focuses on persistent device identity, anomaly detection, spoofing resistance, and cross session correlation, while its behavioral layer focuses on continuous authentication, human versus bot differentiation, and passive anomaly scoring. Together they give application owners a way to detect the runtime symptoms of insecure design even when the original flaw sits in business logic, onboarding logic, authorization sequencing, or anti abuse gaps.

The bottom line is not that monitoring replaces secure architecture. The bottom line is that A06 demands both prevention and detection. OWASP's design advice reduces the chance of shipping unsafe logic, and continuous monitoring of device and behavioral signals helps catch the attackers who still find a way to abuse what slipped through.

Conclusion

OWASP A06:2025 Insecure Design deserves more executive attention because it sits at the level where product decisions, architecture choices, fraud resistance, and security engineering meet. OWASP's own language is clear that insecure design is about missing or ineffective control design, especially around workflows, business logic, and trust boundaries, and that it cannot be fixed by perfect implementation alone. That is why secure development lifecycle discipline, threat modeling, secure design patterns, testing against misuse cases, and proper segregation must come first.

But this is not the whole story. The real world keeps changing after release, and modern attacks increasingly use stolen credentials, bot traffic, workflow abuse, and low friction automation rather than loud exploitation patterns. That is where continuous monitoring becomes essential, especially through capabilities like behavioral biometrics and device fingerprinting, which can detect abnormal device continuity, suspicious session behavior, and cross session fraud signals in ways traditional perimeter controls often miss.

A strong final message for readers is this: the people designing your systems, workflows, and core architecture must have enough cybersecurity depth to understand the consequences of their decisions. Their mistakes can outlive code rewrites, survive code quality improvements, and undermine even disciplined engineering teams. The safer path is to design with security in mind from the beginning, then monitor continuously so the business can see when design assumptions stop matching operational reality.

